A simple way to manage log messages from containers: CloudWatch Logs

Gone are the days when administrators logged into their machines to access log files. Nowadays, containers and virtual machines are launched and terminated dynamically to scale based on demand, to deploy new versions, or to recover from failure. Collecting, monitoring, and analyzing log messages in a centralized data store has become a minimum requirement for production-ready systems.

Below, you will learn how to use CloudWatch Logs to manage log messages from thousands of containers.

Simple Example

Go through the following steps to send your first log message from your container to CloudWatch Logs.

- Open CloudWatch Logs in the Management Console.

- Create a log group name

docker-logs. - Go to IAM and create a role for the use with EC2 named

docker-logsand attach theCloudWatchLogsFullAccesspolicy. Note: do not use theCloudWatchLogsFullAccesspolicy for production workloads. Restrict access to the specific resource and actions instead. - Launch an EC2 Instance based on the

Amazon Linux AMI 2017.03.*, select the IAM role ‘docker-logs’, and attach a security group allowing SSH access. - Log into the EC2 Instance via SSH.

yum install dockerto install Docker.service docker startto start Docker.- Start a container with

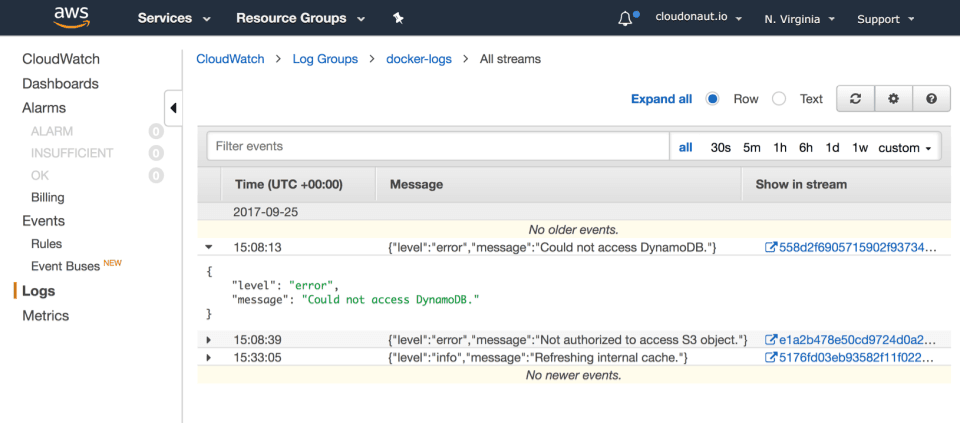

docker run --log-driver=awslogs --log-opt awslogs-group=docker-logs alpine echo 'a cloudonaut.io example' - Open your CloudWatch Logs group to find your log message as illustrated in the following screenshot.
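The note about restricting access can be made concrete. The following is a minimal sketch, not a vetted production policy: it generates an IAM policy document that allows only `logs:CreateLogStream` and `logs:PutLogEvents` on the `docker-logs` log group, which are the actions the awslogs driver needs when the log group already exists. The region and account ID are hypothetical placeholders.

```python
import json

# Hypothetical placeholders: replace with your own region and account ID.
REGION = "us-east-1"
ACCOUNT_ID = "123456789012"
LOG_GROUP = "docker-logs"  # the log group created in the steps above


def build_policy(region, account_id, log_group):
    """Return an IAM policy document limited to writing into one log group."""
    log_group_arn = f"arn:aws:logs:{region}:{account_id}:log-group:{log_group}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Actions needed to write into an existing log group.
                "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
                # The log group itself plus every log stream inside it.
                "Resource": [log_group_arn, f"{log_group_arn}:*"],
            }
        ],
    }


policy = build_policy(REGION, ACCOUNT_ID, LOG_GROUP)
print(json.dumps(policy, indent=2))
```

The printed JSON could be attached to the `docker-logs` role as an inline policy in place of `CloudWatchLogsFullAccess`.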

How did your container send a log message to CloudWatch Logs? Read on.

What is CloudWatch Logs?

CloudWatch Logs is a managed service offered by AWS providing scalable, easy-to-use, and highly available log management. I like using CloudWatch Logs to collect, monitor, and analyze log messages because of its simplicity. AWS covers the basics of log management. Don’t expect a full-blown solution like Elasticsearch/Kibana, Sumo Logic, or Splunk.

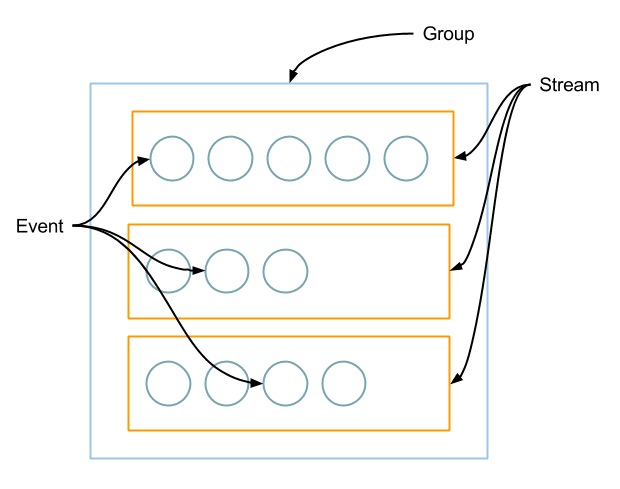

The figure above shows the main elements of CloudWatch Logs:

- Group: a bucket for your log messages, comparable with an S3 bucket.

- Stream: processes up to 5 MB per second; use multiple streams to scale log data ingestion.

- Event: contains the log message.

Additionally, you can use metric filters to monitor incoming log messages. CloudWatch Logs is priced by the amount of ingested, stored, and transferred data. See CloudWatch Pricing for details.
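To make the group, stream, and event hierarchy more tangible, here is a small sketch of the request body that the CloudWatch Logs `PutLogEvents` API action accepts. It only builds the payload and does not call AWS. The stream name `container-1` is a hypothetical example, and the helper function is illustrative rather than part of any SDK.

```python
import json
import time


def build_put_log_events_request(group, stream, messages):
    """Build a PutLogEvents-style request body for one log stream."""
    now_ms = int(time.time() * 1000)  # timestamps are milliseconds since epoch
    return {
        "logGroupName": group,    # the group the stream belongs to
        "logStreamName": stream,  # the stream receiving the events
        # Each event carries a timestamp and the raw log message.
        "logEvents": [{"timestamp": now_ms, "message": m} for m in messages],
    }


request = build_put_log_events_request(
    "docker-logs", "container-1", ["a cloudonaut.io example"]
)
print(json.dumps(request, indent=2))
```

A group holds many streams, and each request targets exactly one stream, which is why spreading writers over multiple streams scales ingestion.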

CloudWatch Logs offers a REST API to ingest data. But how do you transfer a log message from your container to CloudWatch Logs?

Docker logging drivers

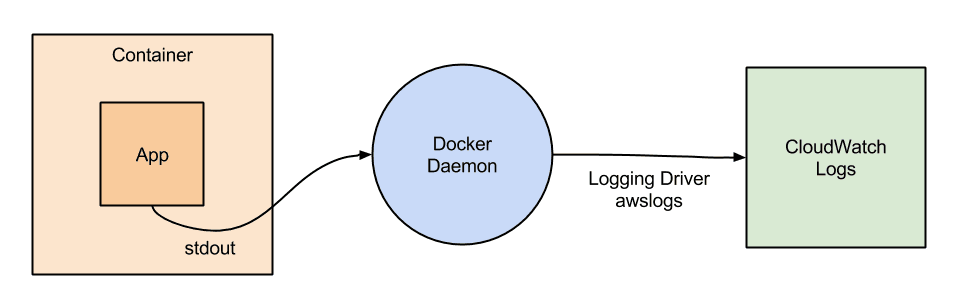

Docker can forward log messages from stdout and stderr to different targets. You can use the following built-in logging drivers: none, json-file, syslog, journald, gelf, fluentd, awslogs, splunk, etwlogs, and gcplogs. See Supported logging drivers for details.

Use the awslogs logging driver to send logs from your container to CloudWatch Logs without installing any additional log agents. As shown in the figure above, the container writes log messages to stdout and stderr. The Docker daemon receives the log messages and uses the awslogs logging driver to forward them to CloudWatch Logs.

Back to the example from above. Let’s take a closer look at the command you used to start your container and send a log message to CloudWatch Logs.

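- `docker run` starts a container.

- `--log-driver=awslogs` selects the awslogs logging driver.

- `--log-opt awslogs-group=docker-logs` sends the log messages to the log group `docker-logs`.

- `alpine echo 'a cloudonaut.io example'` runs the `echo` command in a container based on the `alpine` image.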
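If you start containers from a script, the same command can be assembled programmatically. The sketch below is one possible helper, not an official Docker API: the function name is made up for illustration, and `awslogs-region` and `awslogs-stream` are optional awslogs options documented by Docker that the example above does not use.

```python
import shlex


def docker_run_awslogs(image, command, group, region=None, stream=None):
    """Return the argv list for `docker run` with the awslogs logging driver."""
    args = [
        "docker", "run",
        "--log-driver=awslogs",                 # forward stdout/stderr to CloudWatch Logs
        "--log-opt", f"awslogs-group={group}",  # target log group
    ]
    if region:
        # Optional: on EC2 the driver falls back to the instance's region.
        args += ["--log-opt", f"awslogs-region={region}"]
    if stream:
        # Optional: without it, the container ID is used as the stream name.
        args += ["--log-opt", f"awslogs-stream={stream}"]
    return args + [image] + list(command)


argv = docker_run_awslogs("alpine", ["echo", "a cloudonaut.io example"], "docker-logs")
print(shlex.join(argv))
```

With only the group set, the printed command line matches the `docker run` command from the simple example. Passing `argv` to `subprocess.run` would start the container.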

That’s all you need to send log messages from a single container to CloudWatch Logs. But how do you send log messages from hundreds of containers to CloudWatch Logs? Learn how to integrate CloudWatch Logs with ECS (EC2 Container Service).

ECS Example

ECS allows you to run container workloads on a fleet of EC2 instances. The following example is an excerpt from our collection of Free Templates for AWS CloudFormation.

To launch a container in your ECS cluster, you need to create a task definition. The task definition contains the configuration for your container. Part of the task definition is the LogConfiguration, which configures the logging driver of your container.

# [...]
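As an illustration of where the LogConfiguration sits, the following sketch generates a minimal CloudFormation resource in JSON form. It is not the excerpt from the Free Templates collection: the container name, image, memory value, and stream prefix are hypothetical, and a real template would need further resources such as the cluster and service.

```python
import json

task_definition = {
    "Type": "AWS::ECS::TaskDefinition",
    "Properties": {
        "ContainerDefinitions": [
            {
                "Name": "example",  # hypothetical container name
                "Image": "alpine",
                "Memory": 128,      # MiB, arbitrary value for the sketch
                "Essential": True,
                "Command": ["echo", "a cloudonaut.io example"],
                "LogConfiguration": {
                    "LogDriver": "awslogs",
                    "Options": {
                        "awslogs-group": "docker-logs",
                        # Resolve the region of the stack at deploy time.
                        "awslogs-region": {"Ref": "AWS::Region"},
                        # Hypothetical prefix for the generated stream names.
                        "awslogs-stream-prefix": "example",
                    },
                },
            }
        ]
    },
}

template = {"Resources": {"TaskDefinition": task_definition}}
print(json.dumps(template, indent=2))
```

The `Options` map takes the same keys as the `--log-opt` flags of `docker run`, so the configuration for a single container carries over to ECS almost unchanged.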

Summary

When looking for an easy way to manage your container logs on AWS, CloudWatch Logs is a good choice. Docker comes with a built-in logging driver for CloudWatch Logs: awslogs. No need to install and run any additional log collecting agents. CloudWatch Logs scales automatically so you can use it for a single container or thousands of containers running on ECS.