AWS Velocity Series: Containerized ECS based app infrastructure

EC2 Container Service (ECS) is a highly scalable, fast, container management service that makes it easy to run, stop, and manage Docker containers on a cluster of Amazon EC2 instances. To run an application on ECS, you need the following components:

- Docker image

- ECS cluster: EC2 instances running Docker and the ECS agent

- ECS service: Managed Docker containers on the ECS cluster

ECS provides the ability to run a production-ready application on EC2 with reduced responsibilities and increased deployment speed compared to the EC2 based approach. By production-ready, I mean:

- Highly available: no single point of failure

- Scalable: increase or decrease the number of instances/containers based on load

- Frictionless deployment: deliver new versions of your application automatically without downtime

- Secure: patch operating systems and libraries frequently, and follow the least privilege principle in all areas

- Operations: provide tools like logging, monitoring and alerting to recognize and debug problems

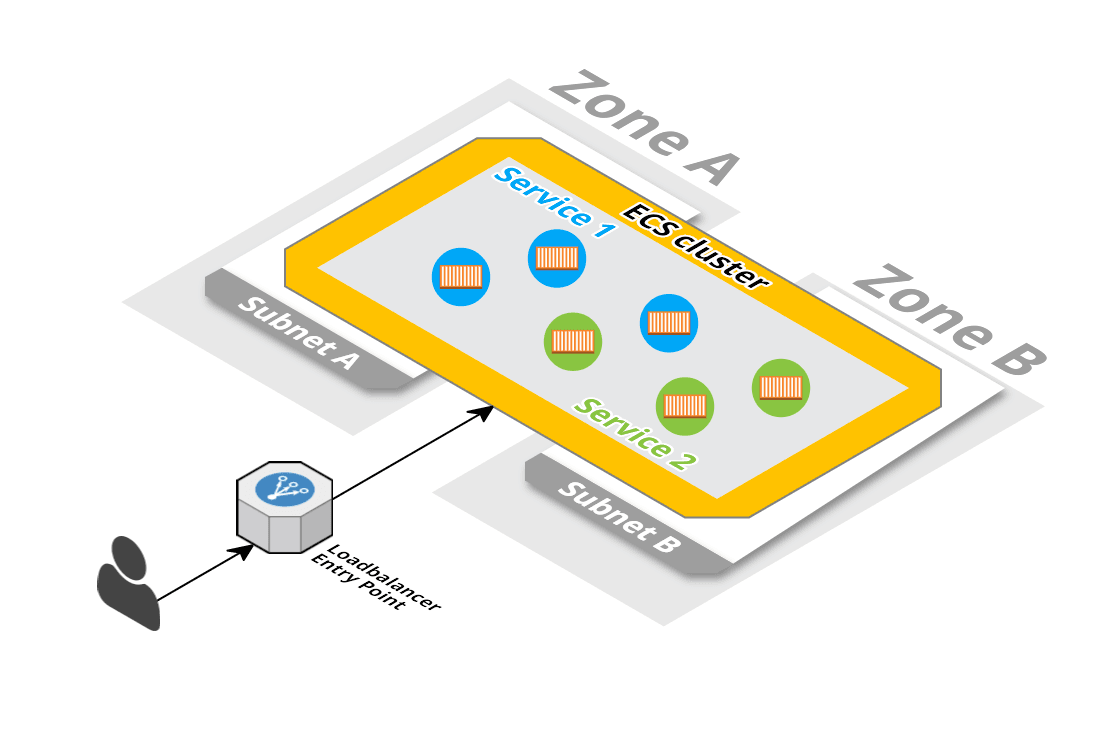

The overall architecture will consist of a load balancer, forwarding requests to containers running on multiple EC2 instances, distributed among different availability zones (data centers).

The diagram was created with Cloudcraft - Visualize your cloud architecture like a pro.

AWS Velocity Series

Most of our clients use AWS to reduce time-to-market following an agile approach. But AWS is only one part of the solution. In this article series, I show you how we help our clients improve velocity: the time from idea to production.

Let’s start with the infrastructure you need: the ECS cluster and the ECS service.

ECS cluster

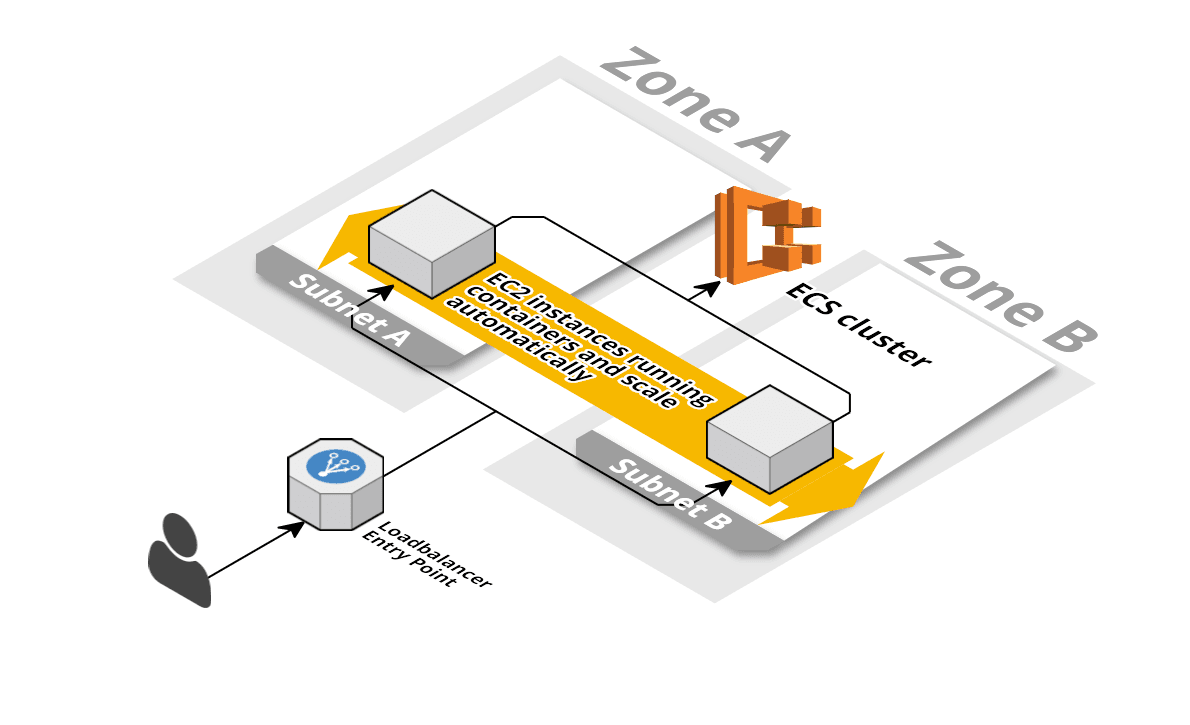

The ECS cluster is a fleet of EC2 instances with the ECS agent and Docker installed. The ECS cluster is responsible for scheduling the work (containers) to the EC2 instances.

The diagram was created with Cloudcraft - Visualize your cloud architecture like a pro.

Your Docker containers will run on those EC2 instances. You don’t need to care about the ECS cluster too much. We provide you a free and production-ready CloudFormation template. Please set up the ECS cluster now if you want to follow along. AWS charges will likely occur! The ECS cluster needs to run in a VPC, so if you don’t have a VPC stack based on our Free Templates for AWS CloudFormation (https://github.com/widdix/aws-cf-templates/tree/master/vpc), create a VPC stack first.

This first step was easy. Now you will learn how to use the ECS cluster with ECS services.

ECS service

The ECS service is responsible for launching Docker containers in the cluster. The service also makes sure that failed containers are replaced, and it takes care of performing rolling updates for you. You also need a load balancer to route traffic to the containers. The following ECS service template is based on our free and production-ready CloudFormation template.

Load balancer

You can follow step by step or get the full source code here: https://github.com/widdix/aws-velocity

Create a file infrastructure/ecs.yml. The first part of the file contains the load balancer. To fully describe an Application Load Balancer, you need:

- A Security Group that allows traffic on port 80

- The Application Load Balancer itself

- A Target Group, which is a group of EC2 instances. Each of them runs containers that can receive traffic from the load balancer

- A Listener, which wires the load balancer together with the target group and defines the listening port

Watch out for comments with more detailed information in the code.
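The four building blocks listed above can be sketched as follows. This is a minimal sketch, not the template from the repository: the parameters `VpcId` and `PublicSubnetIds`, all logical IDs, and the health check settings are assumptions made for illustration.

```yaml
AWSTemplateFormatVersion: '2010-09-09'
Description: 'Sketch: load balancer part of infrastructure/ecs.yml'
Parameters:
  # Hypothetical parameters; the original template may obtain these values differently
  VpcId:
    Type: 'AWS::EC2::VPC::Id'
  PublicSubnetIds:
    Type: 'List<AWS::EC2::Subnet::Id>'
Resources:
  # Firewall rule: allow HTTP traffic from anywhere on port 80
  LoadBalancerSecurityGroup:
    Type: 'AWS::EC2::SecurityGroup'
    Properties:
      GroupDescription: 'ecs-load-balancer'
      VpcId: !Ref VpcId
      SecurityGroupIngress:
      - CidrIp: '0.0.0.0/0'
        IpProtocol: tcp
        FromPort: 80
        ToPort: 80
  LoadBalancer:
    Type: 'AWS::ElasticLoadBalancingV2::LoadBalancer'
    Properties:
      Scheme: 'internet-facing'
      SecurityGroups:
      - !Ref LoadBalancerSecurityGroup
      Subnets: !Ref PublicSubnetIds
  # Targets are registered later by the ECS service, not listed here
  TargetGroup:
    Type: 'AWS::ElasticLoadBalancingV2::TargetGroup'
    Properties:
      VpcId: !Ref VpcId
      Protocol: HTTP
      Port: 80 # default only; each registered target brings its own port
      HealthCheckPath: '/' # assumption: the app answers with 200 on /
      HealthCheckIntervalSeconds: 15
      HealthCheckTimeoutSeconds: 10
      HealthyThresholdCount: 2
      UnhealthyThresholdCount: 2
      Matcher:
        HttpCode: '200'
  # Wires the load balancer to the target group and defines the listening port
  Listener:
    Type: 'AWS::ElasticLoadBalancingV2::Listener'
    Properties:
      LoadBalancerArn: !Ref LoadBalancer
      Protocol: HTTP
      Port: 80
      DefaultActions:
      - Type: forward
        TargetGroupArn: !Ref TargetGroup
```

Note that the security group of the EC2 instances in the cluster must also allow traffic from the load balancer; that rule is not part of this sketch.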


But how do you get notified if something goes wrong? Let’s add a parameter to the Parameters section to make the receiver configurable:

AdminEmail:
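A parameter of this kind might look like the following sketch. Only the name `AdminEmail` comes from the article; the description text is an assumption.

```yaml
Parameters:
  AdminEmail:
    # Assumed wording; the original description is not preserved
    Description: 'Email address that receives alerts'
    Type: String
```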

Alerts are triggered by a CloudWatch Alarm which can send an alert to an SNS topic. You can subscribe to this topic via an email address to receive the alerts. Let’s create an SNS topic and two alarms in the Resources section:

# An SNS topic is used to send alerts via email to the value of the AdminEmail parameter
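A hedged sketch of the topic and the two alarms follows. It assumes that the two alarms watch 5XX responses generated by the load balancer and by the targets, that the template contains a load balancer with the logical ID `LoadBalancer`, and that an `AdminEmail` parameter exists. The logical IDs, periods, and thresholds are illustrative.

```yaml
Resources:
  # An SNS topic is used to send alerts via email to the value of the AdminEmail parameter
  Alerts:
    Type: 'AWS::SNS::Topic'
    Properties:
      Subscription:
      - Endpoint: !Ref AdminEmail
        Protocol: email
  # Alarm 1: the load balancer itself answers with 5XX status codes
  HTTPCodeELB5XXTooHighAlarm:
    Type: 'AWS::CloudWatch::Alarm'
    Properties:
      AlarmDescription: 'Load balancer returns 5XX HTTP status codes'
      Namespace: 'AWS/ApplicationELB'
      MetricName: HTTPCode_ELB_5XX_Count
      Statistic: Sum
      Period: 60
      EvaluationPeriods: 1
      ComparisonOperator: GreaterThanThreshold
      Threshold: 0
      AlarmActions:
      - !Ref Alerts
      Dimensions:
      - Name: LoadBalancer
        Value: !GetAtt 'LoadBalancer.LoadBalancerFullName'
  # Alarm 2: the containers behind the load balancer answer with 5XX status codes
  HTTPCodeTarget5XXTooHighAlarm:
    Type: 'AWS::CloudWatch::Alarm'
    Properties:
      AlarmDescription: 'Targets return 5XX HTTP status codes'
      Namespace: 'AWS/ApplicationELB'
      MetricName: HTTPCode_Target_5XX_Count
      Statistic: Sum
      Period: 60
      EvaluationPeriods: 1
      ComparisonOperator: GreaterThanThreshold
      Threshold: 0
      AlarmActions:
      - !Ref Alerts
      Dimensions:
      - Name: LoadBalancer
        Value: !GetAtt 'LoadBalancer.LoadBalancerFullName'
```

The email subscription has to be confirmed once via the link that SNS sends to the address.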

Let’s recap what you implemented: a load balancer with a firewall rule that allows traffic on port 80. In the case of 5XX status codes, you will receive an email. But the load balancer alone is not enough. Now it’s time to add the ECS service.

ECS service

I already talked about the ECS service. It will take care of your containers. To be more precise, it will take care of your tasks that run in the ECS cluster. One task can contain one or multiple Docker containers. Three resources are needed:

- A Task Definition that describes the Docker containers (similar to a Docker Compose file or a Kubernetes Deployment)

- An IAM Role for your container, so you don’t need to pass in static credentials when you want to interact with AWS from within your container. If you don’t want to make AWS API calls from your container, the role is not needed.

- The ECS Service itself.

To make things parameterizable, you also need to add a few parameters to the Parameters section:

# Where does this Docker image come from? It will be created in the pipeline!
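The original parameter list is not preserved here, so the following is only a sketch of what such parameters could look like. All names (`Image`, `DesiredCount`, `Cluster`, `LogGroup`) and the default value are hypothetical.

```yaml
Parameters:
  # Where does this Docker image come from? It will be created in the pipeline!
  Image:
    Description: 'Docker image to run, for example repository/name:tag'
    Type: String
  # Hypothetical: how many tasks the service should keep running
  DesiredCount:
    Description: 'Number of tasks the service keeps running'
    Type: Number
    Default: 2
  # Hypothetical: name of the ECS cluster created by the cluster stack
  Cluster:
    Description: 'Name of the ECS cluster'
    Type: String
  # Hypothetical: CloudWatch Logs group created by the cluster stack
  LogGroup:
    Description: 'Name of the log group for container logs'
    Type: String
```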

Now you can describe the resources in the CloudFormation template.

TaskDefinition:
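The three resources could be described roughly as follows. This is a sketch under several assumptions: parameters named `Image`, `DesiredCount`, `Cluster`, and `LogGroup` exist, the template contains resources with the logical IDs `TargetGroup` and `Listener`, the application listens on port 3000, and the account already has the ECS service-linked role (so no explicit service role is passed). Apart from `TaskDefinition`, none of these names is taken from the original template.

```yaml
Resources:
  # IAM role the containers can use, so no static credentials are needed
  TaskRole:
    Type: 'AWS::IAM::Role'
    Properties:
      AssumeRolePolicyDocument:
        Version: '2012-10-17'
        Statement:
        - Effect: Allow
          Principal:
            Service: 'ecs-tasks.amazonaws.com'
          Action: 'sts:AssumeRole'
  TaskDefinition:
    Type: 'AWS::ECS::TaskDefinition'
    Properties:
      Family: !Ref 'AWS::StackName'
      NetworkMode: bridge
      TaskRoleArn: !GetAtt 'TaskRole.Arn'
      ContainerDefinitions:
      - Name: main
        Image: !Ref Image
        Memory: 128 # hard memory limit in MiB, illustrative value
        Essential: true
        PortMappings:
        - ContainerPort: 3000 # assumption: the app listens on port 3000
          HostPort: 0 # 0 lets Docker pick a free host port
          Protocol: tcp
        # Ship container logs to CloudWatch Logs
        LogConfiguration:
          LogDriver: awslogs
          Options:
            'awslogs-region': !Ref 'AWS::Region'
            'awslogs-group': !Ref LogGroup
            'awslogs-stream-prefix': main
  Service:
    Type: 'AWS::ECS::Service'
    # The target group must be attached to a load balancer before the service is created
    DependsOn: Listener
    Properties:
      Cluster: !Ref Cluster
      DesiredCount: !Ref DesiredCount
      TaskDefinition: !Ref TaskDefinition
      # Rolling update: start new tasks before stopping too many old ones
      DeploymentConfiguration:
        MaximumPercent: 200
        MinimumHealthyPercent: 50
      LoadBalancers:
      - ContainerName: main
        ContainerPort: 3000
        TargetGroupArn: !Ref TargetGroup
```

With `HostPort: 0`, several copies of the same task can run on one EC2 instance, because each container gets its own host port and the service registers that port with the target group.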

Let’s recap what you implemented: a task definition that defines the containers managed by the service, an IAM role that is accessible inside the containers, and the ECS service that uses the task definition to launch tasks (a bunch of containers) in the cluster. Logs from the containers are already shipped to CloudWatch Logs by the awslogs log driver and are visible in the Log Group that is part of the ECS cluster template.

Two things are missing:

- Scalability of containers

- Alerting if containers have issues

Let’s tackle those issues step by step.

Auto scaling of containers

If you auto scale the number of containers, your ECS cluster must be able to auto scale as well. If you use our free template on GitHub, the cluster will auto scale too.

Auto Scaling works similarly to the EC2 based approach. To scale based on the load, you need to add:

- Scaling Policies to define what should happen if the system should scale up/down

- CloudWatch Alarms to trigger a Scaling Policy based on a metric such as CPU utilization

- Additionally, you need a so-called Scalable Target. The Scalable Target can be an ECS service, but it could also be a Spot Fleet or an EMR Instance Group.

Again, you have to add those resources to the Resources section of your template:

ScalableTargetRole: # based on http://docs.aws.amazon.com/AmazonECS/latest/developerguide/autoscale_IAM_role.html
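One possible shape of these resources is sketched below. It assumes a `Cluster` parameter holding the cluster name and an ECS service with the logical ID `Service` in the same template. The capacity limits, cooldowns, and step sizes are illustrative; the 70% and 30% thresholds are the ones this article uses.

```yaml
Resources:
  # Role that Application Auto Scaling assumes to change the desired count
  ScalableTargetRole:
    Type: 'AWS::IAM::Role'
    Properties:
      AssumeRolePolicyDocument:
        Version: '2012-10-17'
        Statement:
        - Effect: Allow
          Principal:
            Service: 'application-autoscaling.amazonaws.com'
          Action: 'sts:AssumeRole'
      Policies:
      - PolicyName: ecs
        PolicyDocument:
          Version: '2012-10-17'
          Statement:
          - Effect: Allow
            Action:
            - 'ecs:DescribeServices'
            - 'ecs:UpdateService'
            - 'cloudwatch:DescribeAlarms'
            Resource: '*'
  ScalableTarget:
    Type: 'AWS::ApplicationAutoScaling::ScalableTarget'
    Properties:
      MinCapacity: 2 # illustrative limits
      MaxCapacity: 4
      ResourceId: !Sub 'service/${Cluster}/${Service.Name}'
      RoleARN: !GetAtt 'ScalableTargetRole.Arn'
      ScalableDimension: 'ecs:service:DesiredCount'
      ServiceNamespace: ecs
  ScaleUpPolicy:
    Type: 'AWS::ApplicationAutoScaling::ScalingPolicy'
    Properties:
      PolicyName: !Sub '${AWS::StackName}-scale-up'
      PolicyType: StepScaling
      ScalingTargetId: !Ref ScalableTarget
      StepScalingPolicyConfiguration:
        AdjustmentType: ChangeInCapacity
        Cooldown: 300
        StepAdjustments:
        - MetricIntervalLowerBound: 0
          ScalingAdjustment: 1 # add one task
  ScaleDownPolicy:
    Type: 'AWS::ApplicationAutoScaling::ScalingPolicy'
    Properties:
      PolicyName: !Sub '${AWS::StackName}-scale-down'
      PolicyType: StepScaling
      ScalingTargetId: !Ref ScalableTarget
      StepScalingPolicyConfiguration:
        AdjustmentType: ChangeInCapacity
        Cooldown: 300
        StepAdjustments:
        - MetricIntervalUpperBound: 0
          ScalingAdjustment: -1 # remove one task
  # Triggers the scale-up policy above 70% average CPU utilization
  CPUUtilizationHighAlarm:
    Type: 'AWS::CloudWatch::Alarm'
    Properties:
      Namespace: 'AWS/ECS'
      MetricName: CPUUtilization
      Dimensions:
      - Name: ClusterName
        Value: !Ref Cluster
      - Name: ServiceName
        Value: !GetAtt 'Service.Name'
      Statistic: Average
      Period: 300
      EvaluationPeriods: 1
      ComparisonOperator: GreaterThanThreshold
      Threshold: 70
      AlarmActions:
      - !Ref ScaleUpPolicy
  # Triggers the scale-down policy below 30% average CPU utilization
  CPUUtilizationLowAlarm:
    Type: 'AWS::CloudWatch::Alarm'
    Properties:
      Namespace: 'AWS/ECS'
      MetricName: CPUUtilization
      Dimensions:
      - Name: ClusterName
        Value: !Ref Cluster
      - Name: ServiceName
        Value: !GetAtt 'Service.Name'
      Statistic: Average
      Period: 300
      EvaluationPeriods: 1
      ComparisonOperator: LessThanThreshold
      Threshold: 30
      AlarmActions:
      - !Ref ScaleDownPolicy
```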

The number of tasks is now increased if the CPU utilization of the service goes above 70%, while the number of tasks is decreased if the CPU utilization falls below 30%.

Monitoring

Last but not least, you have to add CloudWatch Alarms to get alerted if something is wrong with your service. In the Resources section:

# Sends an alert if the average CPU load of the past 5 minutes is higher than 85%
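Such an alarm might be written as in the sketch below. It assumes an SNS topic with the logical ID `Alerts`, a `Cluster` parameter holding the cluster name, and an ECS service with the logical ID `Service` in the same template. The logical ID `CPUTooHighAlarm` is hypothetical.

```yaml
Resources:
  # Sends an alert if the average CPU load of the past 5 minutes is higher than 85%
  CPUTooHighAlarm:
    Type: 'AWS::CloudWatch::Alarm'
    Properties:
      AlarmDescription: 'Average CPU utilization over the last 5 minutes is higher than 85%'
      Namespace: 'AWS/ECS'
      MetricName: CPUUtilization
      Dimensions:
      - Name: ClusterName
        Value: !Ref Cluster
      - Name: ServiceName
        Value: !GetAtt 'Service.Name'
      Statistic: Average
      Period: 300 # one 5 minute period
      EvaluationPeriods: 1
      ComparisonOperator: GreaterThanThreshold
      Threshold: 85
      AlarmActions:
      - !Ref Alerts
```

The 85% threshold sits above the 70% scale-up threshold, so this alarm should only fire when scaling out does not bring the load down.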

The infrastructure is ready now. Read the next part of the series to learn how to set up the CI/CD pipeline to deploy the ECS based app.

Series

- Set the assembly line up

- Local development environment

- CI/CD Pipeline as Code

- Running your application

- EC2 based app

a. Infrastructure

b. CI/CD Pipeline

- Containerized ECS based app

a. Infrastructure (you are here)

b. CI/CD Pipeline

- Serverless app

- Summary

You can find the source code on GitHub.