Review: API Gateway HTTP APIs - Cheaper and Faster REST APIs?

An API gateway acts as an API front-end that receives API requests from clients and forwards them to back-end services. Typically, an API gateway offers the following features:

- Throttling

- Billing

- Authentication and authorization

- Request validation

- Request and response transformation

AWS offers different types of API gateways as a managed service. This review takes a closer look at the new service API Gateway HTTP APIs, announced in December 2019 and generally available since March 2020. The cloud provider promises that HTTP APIs are faster and cheaper than their predecessor. We will look at hard technical facts instead of flowery marketing promises.

A summary of Amazon’s API gateways to avoid confusion:

- API Gateway REST APIs is the full-featured flagship service for building REST APIs, announced in 2015.

- API Gateway HTTP APIs is the fast and straightforward alternative for building REST APIs, announced in 2019.

- API Gateway WebSocket APIs, announced in 2018, allows you to build real-time APIs using WebSockets.

- Application Load Balancer (ALB) is a layer-7 load balancer that shares some similarities with an API gateway.

This review focuses on HTTP APIs.


How It Works

An HTTP API allows you to specify a REST API, for example, by describing it with the OpenAPI 3.0 specification. After defining resources and methods, you are ready to deploy your HTTP API, which results in a publicly available HTTPS endpoint.

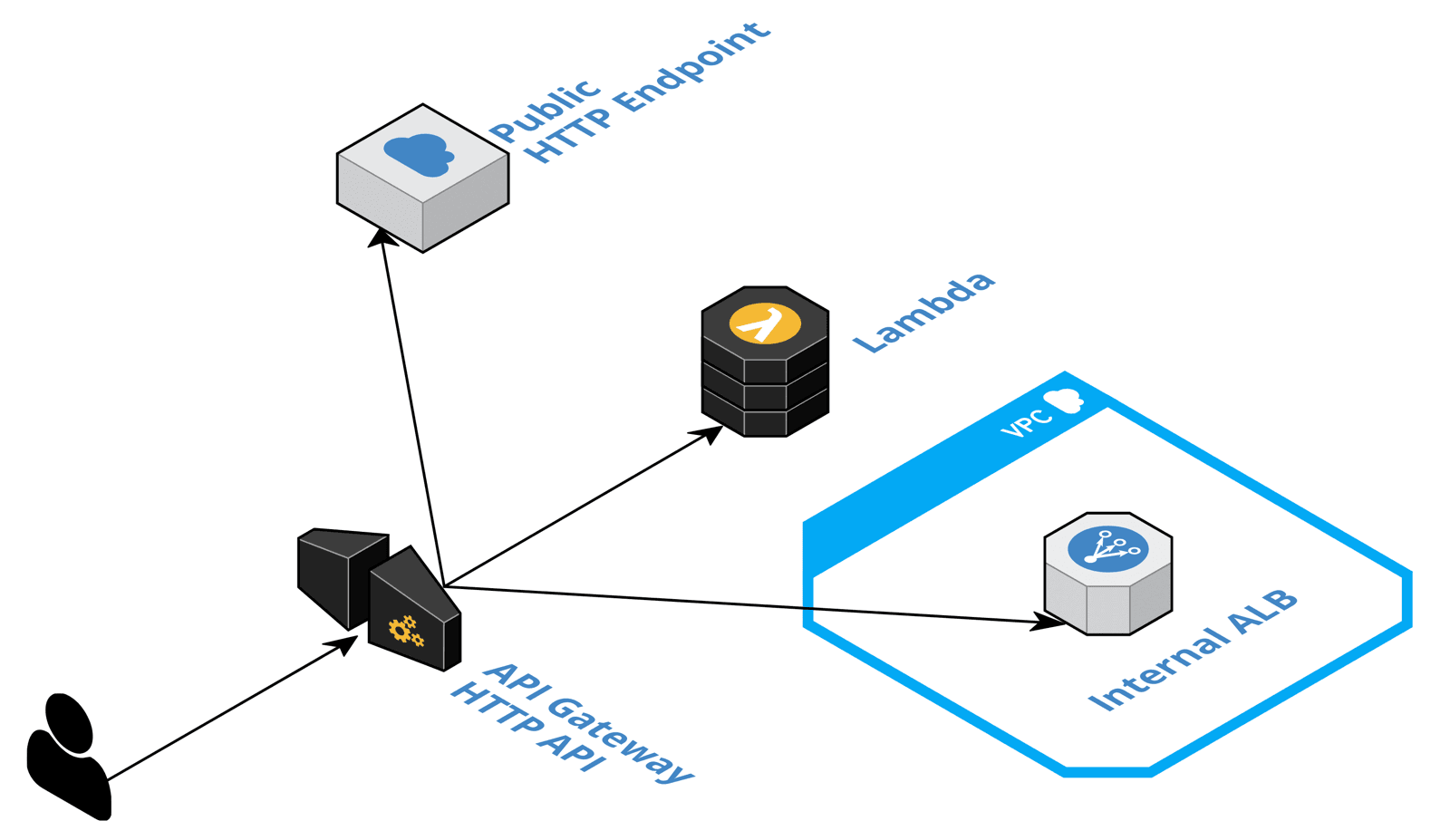

An HTTP API forwards incoming requests to the following back-end endpoints:

- AWS Lambda function

- Any publicly available HTTP endpoint

- Internal ALB or AWS Cloud Map service
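To illustrate the public HTTP endpoint case, here is a minimal CloudFormation sketch that proxies every request to an HTTP back end. It is not taken from this review: the logical IDs and the back-end URL `https://backend.example.com` are hypothetical placeholders. HTTP proxy integrations only support payload format version 1.0.

```yaml
Resources:
  HttpApi:
    Type: 'AWS::ApiGatewayV2::Api'
    Properties:
      Name: 'example-http-api'        # hypothetical name
      ProtocolType: 'HTTP'
  HttpProxyIntegration:
    Type: 'AWS::ApiGatewayV2::Integration'
    Properties:
      ApiId: !Ref HttpApi
      IntegrationType: 'HTTP_PROXY'
      IntegrationMethod: 'ANY'
      # Hypothetical public back end; {proxy} is filled from the greedy path variable of the route
      IntegrationUri: 'https://backend.example.com/{proxy}'
      PayloadFormatVersion: '1.0'     # only format supported for HTTP proxy integrations
      TimeoutInMillis: 30000          # maximum integration timeout (see Limitations)
  ProxyRoute:
    Type: 'AWS::ApiGatewayV2::Route'
    Properties:
      ApiId: !Ref HttpApi
      RouteKey: 'ANY /{proxy+}'       # catch all methods and paths
      Target: !Sub 'integrations/${HttpProxyIntegration}'
  DefaultStage:
    Type: 'AWS::ApiGatewayV2::Stage'
    Properties:
      ApiId: !Ref HttpApi
      StageName: '$default'
      AutoDeploy: true                # deploy route changes automatically
```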

On top of that, HTTP APIs come with the following features:

- Authentication based on JWT tokens (OpenID Connect or OAuth 2.0)

- Cross-origin resource sharing (CORS)

- Stages to deploy different versions of a REST API in parallel (e.g., a test environment)

- Custom domain names
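The JWT authentication and CORS features can be sketched in CloudFormation as follows. The issuer URL, client ID, and allowed origin are hypothetical placeholders for your own identity provider and front end. A route opts into the authorizer by setting `AuthorizationType: 'JWT'` and `AuthorizerId` on its `AWS::ApiGatewayV2::Route` resource.

```yaml
Resources:
  HttpApi:
    Type: 'AWS::ApiGatewayV2::Api'
    Properties:
      Name: 'example-http-api'
      ProtocolType: 'HTTP'
      # CORS is configured on the API itself
      CorsConfiguration:
        AllowOrigins:
          - 'https://app.example.com'       # hypothetical front-end origin
        AllowMethods:
          - 'GET'
          - 'POST'
        AllowHeaders:
          - 'authorization'
          - 'content-type'
        MaxAge: 300                         # seconds browsers may cache the preflight response
  JwtAuthorizer:
    Type: 'AWS::ApiGatewayV2::Authorizer'
    Properties:
      ApiId: !Ref HttpApi
      Name: 'jwt-authorizer'
      AuthorizerType: 'JWT'
      # Read the token from the Authorization header
      IdentitySource:
        - '$request.header.Authorization'
      JwtConfiguration:
        Issuer: 'https://auth.example.com/' # hypothetical OpenID Connect issuer
        Audience:
          - 'example-client-id'             # hypothetical client ID
```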

Next, we look at whether AWS can deliver on the promise of lower cost and lower latency than a REST API.

Pricing

In general, it is correct that HTTP APIs are cheaper than REST APIs.

| | HTTP API | REST API | Savings |
|---|---|---|---|
| Requests (per million) | $1.00 | $3.50 | 71% |
| Outgoing data transfer (per GB) | $0.09 | $0.09 | 0% |

A cost reduction of roughly 70% is a strong argument for HTTP APIs.

But there’s a catch: HTTP APIs meter requests in 512 KB increments, and each increment is billed as one request. REST APIs don’t. Therefore, when you transfer more than 1536 KB per request and response, an HTTP API becomes more expensive than a REST API.
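The break-even point follows from the list prices in the table above. Writing $s$ for the metered size of a request ($s > 0$) and billing every started 512 KB increment as one request, the cost per million requests is:

$$
C_{\text{HTTP}}(s) = \$1.00 \cdot \left\lceil \frac{s}{512\ \text{KB}} \right\rceil,
\qquad
C_{\text{REST}}(s) = \$3.50
$$

$$
C_{\text{HTTP}}(1536\ \text{KB}) = \$1.00 \cdot 3 = \$3.00 < \$3.50,
\qquad
C_{\text{HTTP}}(1537\ \text{KB}) = \$1.00 \cdot 4 = \$4.00 > \$3.50
$$

Outgoing data transfer is left out because it costs the same for both services.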

Latency

To verify that HTTP APIs do reduce latency compared to REST APIs, I set up the following test infrastructure: an HTTP API with a Lambda integration, a REST API with a Lambda integration, a Lambda function returning a static response, and an m5.large EC2 instance running k6 to execute a simple load test.

| | HTTP API | REST API | Reduction |
|---|---|---|---|
| Average (ms) | 14.13 | 16.83 | 16% |
| 90th percentile (ms) | 15.97 | 18.58 | 14% |
| 95th percentile (ms) | 18.63 | 23.05 | 19% |

Reducing latency by roughly 2.7 ms on average is excellent. However, it will most likely not reduce the overall latency of your application by 16%, as in my example, because your serverless application will most likely spend most of its time waiting for a database or calculating results. So I don’t think that latency is the deciding argument for HTTP APIs.
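To put the measured difference into perspective, assume (hypothetically) that each request additionally spends $T = 100$ ms in a database. Using the averages from the latency table, the relative reduction shrinks from about 16% to a little over 2%:

$$
\frac{16.83\ \text{ms} - 14.13\ \text{ms}}{16.83\ \text{ms}} = \frac{2.70\ \text{ms}}{16.83\ \text{ms}} \approx 16\,\%
\qquad\text{vs.}\qquad
\frac{2.70\ \text{ms}}{16.83\ \text{ms} + T} = \frac{2.70\ \text{ms}}{116.83\ \text{ms}} \approx 2.3\,\%
$$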

REST APIs come with a built-in cache. Caching for REST APIs is charged per hour based on the provisioned cache size, with prices ranging from $14 per month for 0.5 GB up to $2,736 per month for 237 GB. HTTP APIs do not provide a built-in caching layer. However, you could use CloudFront to cache requests. CloudFront does not charge a base fee; instead, you pay per request and for outgoing data transfer. Of course, you could also put CloudFront in front of a REST API and disable the built-in cache.
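A minimal CloudFormation sketch of a CloudFront distribution caching the responses of an HTTP API could look like this. It assumes the API uses the `$default` stage (otherwise an `OriginPath` pointing to the stage would be needed) and receives the API ID through a hypothetical `HttpApiId` parameter. The cache policy ID refers to the AWS managed `CachingOptimized` policy, which includes neither query strings, headers, nor cookies in the cache key; for most APIs, you would define your own cache policy instead.

```yaml
Parameters:
  HttpApiId:
    Type: String
    Description: 'ID of an existing HTTP API with a $default stage'
Resources:
  Distribution:
    Type: 'AWS::CloudFront::Distribution'
    Properties:
      DistributionConfig:
        Enabled: true
        Origins:
          - Id: 'http-api'
            # Default endpoint of the HTTP API
            DomainName: !Sub '${HttpApiId}.execute-api.${AWS::Region}.amazonaws.com'
            CustomOriginConfig:
              OriginProtocolPolicy: 'https-only'
        DefaultCacheBehavior:
          TargetOriginId: 'http-api'
          ViewerProtocolPolicy: 'redirect-to-https'
          AllowedMethods: ['GET', 'HEAD', 'OPTIONS']
          CachedMethods: ['GET', 'HEAD']
          # AWS managed cache policy "CachingOptimized"
          CachePolicyId: '658327ea-f89d-4fab-a63d-7e88639e58f6'
Outputs:
  DistributionDomainName:
    Value: !GetAtt 'Distribution.DomainName'
```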

Developer Experience

Configuring a REST API is tricky. With HTTP APIs, AWS focused on simplifying the developer experience.

The good news: it is quite simple to create an HTTP API that forwards all requests to a single Lambda function or any other supported endpoint. The feature is called Quick Create and is available with CloudFormation as well as Terraform.
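A minimal CloudFormation sketch of Quick Create follows (a reconstruction for illustration, not a verbatim copy of any official template). It assumes an existing Lambda function whose ARN is passed in through the hypothetical `FunctionArn` parameter. Setting `Target` on `AWS::ApiGatewayV2::Api` makes API Gateway create an integration, a catch-all route, and an auto-deploying `$default` stage; the `AWS::Lambda::Permission` resource allows API Gateway to invoke the function.

```yaml
Parameters:
  FunctionArn:
    Type: String
    Description: 'ARN of an existing Lambda function'
Resources:
  HttpApi:
    Type: 'AWS::ApiGatewayV2::Api'
    Properties:
      Name: 'quick-create-example'   # hypothetical name
      ProtocolType: 'HTTP'
      # Quick Create: forward all requests to the Lambda function
      Target: !Ref FunctionArn
  InvokePermission:
    Type: 'AWS::Lambda::Permission'
    Properties:
      Action: 'lambda:InvokeFunction'
      FunctionName: !Ref FunctionArn
      Principal: 'apigateway.amazonaws.com'
      # Allow any route and stage of this API to invoke the function
      SourceArn: !Sub 'arn:${AWS::Partition}:execute-api:${AWS::Region}:${AWS::AccountId}:${HttpApi}/*'
Outputs:
  Endpoint:
    Value: !GetAtt 'HttpApi.ApiEndpoint'
```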

Besides that, importing and exporting OpenAPI 3.0 definitions is possible as well. AWS defines extensions to the OpenAPI specification (x-amazon-*), which are available for HTTP APIs as well as for REST APIs. With these extensions, integration settings such as the ARN of the Lambda function that handles a route become part of the API specification.
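As an illustration, the following OpenAPI 3.0 definition routes `GET /hello` to a Lambda function via the `x-amazon-apigateway-integration` extension. The region, the account ID `111111111111`, and the function name `example` in the URI are hypothetical placeholders.

```yaml
openapi: "3.0.1"
info:
  title: "example-http-api"
  version: "1.0"
paths:
  /hello:
    get:
      responses:
        default:
          description: "Response returned by the Lambda function"
      # AWS extension: infrastructure configuration inside the API specification
      x-amazon-apigateway-integration:
        type: "aws_proxy"
        httpMethod: "POST"          # Lambda functions are always invoked with POST
        uri: "arn:aws:apigateway:eu-west-1:lambda:path/2015-03-31/functions/arn:aws:lambda:eu-west-1:111111111111:function:example/invocations"
        payloadFormatVersion: "2.0"
```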

I’m not a big fan of mixing infrastructure code into the API specification. It comes with all kinds of compatibility issues. I prefer the way AWS AppSync separates the API specification (GraphQL) and the AWS resource configuration.

Overall, the experience for developers building simple APIs improved with HTTP APIs.

Missing Features

AWS itself does not offer any API without rate limiting, except for the ones where we pay per request. It is, therefore, somewhat surprising that HTTP APIs do not provide user/account-based throttling. Not being able to throttle requests from your customers will sooner or later result in an outage due to a bottleneck in your infrastructure or in an enormous AWS bill. There is always that one customer who sends you an extreme number of requests, whether knowingly or by accident. In my opinion, an API that can throttle neither per user/account nor per client is not production-ready.
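What HTTP APIs do support is throttling at the stage or route level. These limits are shared by all clients, so a single customer can still exhaust them for everyone else. A minimal sketch, assuming an existing API whose ID is passed in through a hypothetical `HttpApiId` parameter and purely illustrative limits:

```yaml
Parameters:
  HttpApiId:
    Type: String
Resources:
  ThrottledStage:
    Type: 'AWS::ApiGatewayV2::Stage'
    Properties:
      ApiId: !Ref HttpApiId
      StageName: 'prod'             # hypothetical stage name
      AutoDeploy: true
      DefaultRouteSettings:
        # Applies to all clients together: no per-user or per-account limits
        ThrottlingRateLimit: 100    # steady-state requests per second
        ThrottlingBurstLimit: 50    # maximum burst of concurrent requests
```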

Another option to throttle or deny requests would be AWS WAF (Web Application Firewall). However, HTTP APIs do not support AWS WAF yet.

Getting insights into the health and performance of your API is crucial. Besides analyzing logs, it is beneficial to use a monitoring tool for distributed applications. AWS X-Ray is an option here. X-Ray does integrate nicely with REST APIs but not with HTTP APIs, unfortunately.

HTTP APIs vs. REST APIs vs. ALB

AWS announced HTTP APIs in December 2019 and promised to close the feature gap between HTTP APIs and REST APIs in the future. However, I cannot say that there has been much progress in the last six months.

The following table compares the available features between HTTP APIs (API Gateway), REST APIs (API Gateway), and ALB (Application Load Balancer).

| Criteria | HTTP APIs | REST APIs | ALB |
|---|---|---|---|
| Optimized for Global Traffic | ❌ | ✅ | ❌ |
| Public Endpoint | ✅ | ✅ | ✅ |
| Private Endpoint | ❌ | ✅ | ✅ |
| Lambda Integration | ✅ | ✅ | ✅ |
| Public HTTP Integration | ✅ | ✅ | ❌ |
| Private HTTP Integration | ✅ | ✅ | ✅ |
| AWS Service Integration | ❌ | ✅ | ❌ |
| OpenID Connect Authentication | ✅ | ✅ | ✅ |
| OAuth 2.0 Authentication | ✅ | ❌ | ❌ |
| SAML Authentication | ❌ | ✅ | ✅ |
| Social Authentication (Google, ...) | ❌ | ✅ | ✅ |
| Custom Authentication | ❌ | ✅ | ❌ |
| User/Account-level Throttling | ❌ | ✅ | ❌ |
| WAF | ❌ | ✅ | ✅ |
| Request Validation | ❌ | ✅ | ❌ |

In summary, it is fair to say that REST APIs offer the broadest set of features and integrations.

Limitations

One of the most important pages within the documentation of an AWS service is Quotas. That’s where AWS lists the soft limits (which AWS might increase depending on your scenario) and the hard limits (which cannot be increased).

Some critical hard limits for HTTP APIs are:

- Integration timeout: 30 seconds

- Payload size: 10 MB

- Total combined size of request line and header values: 10,240 bytes

Similar limitations apply to REST APIs, although their integration timeout is 29 seconds by default.

Check out Amazon API Gateway quotas and important notes to learn more.

Service Maturity Table

The following table summarizes the maturity of the service:

| Criteria | Support | Score |
|---|---|---|
| Feature Completeness | ⚠️ | 2 |
| Documentation Detailedness | ⚠️ | 2 |
| Tags (Grouping + Billing) | ✅ | 10 |
| CloudFormation + Terraform support | ✅ | 10 |
| IAM granularity | ✅ | 10 |
| Integrated with AWS Config | ✅ | 10 |
| Auditing via AWS CloudTrail | ✅ | 10 |
| Available in all commercial regions | ✅ | 10 |
| SLA | ✅ | 10 |
| Total Maturity Score (0-10) | ✅ | 7.1 |

Our maturity score for API Gateway HTTP APIs is 7.1 on a scale from 0 to 10.

Summary

HTTP APIs are becoming the new standard for API Gateways on AWS. Lower costs and lower latency are essential arguments for trading in the feature-complete REST API for a shiny new HTTP API. However, you should keep in mind that HTTP APIs are missing some critical features. To me, user/account-level throttling is the most crucial feature that is still missing and a showstopper for most scenarios.

Did I miss something? Do you disagree with my review? Message me!

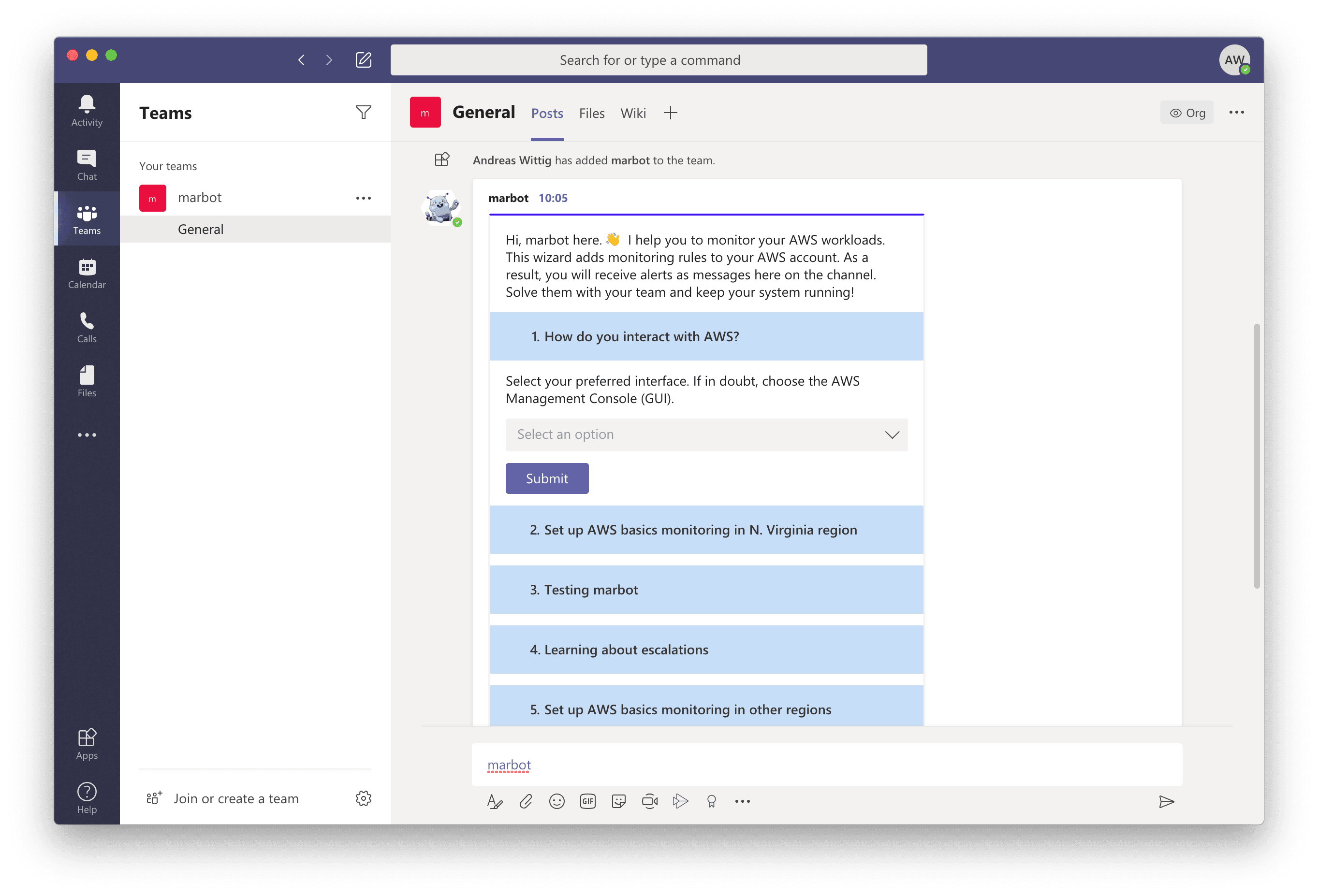

P.S. marbot available for Microsoft Teams

We are happy to announce that marbot, our chatbot monitoring your AWS infrastructure, is now available not only for Slack but for Microsoft Teams as well. marbot sets up CloudWatch Alarms and EventBridge Rules for all parts of your AWS infrastructure. CloudFormation and Terraform are supported. Your team receives alerts as direct messages or channel messages via Slack or Microsoft Teams. Each alert includes the relevant details to understand and solve the problem.