Show your SaaS architecture: Time Series Guru

Today I want to show you the architecture of my latest AWS project: a Software-as-a-Service time series database with a REST API. TimeSeries.Guru is a TSDB built to handle large volumes of time series data. The SaaS is powered by kdb+, the high-performance database from Kx Systems that sets the standard for time series analytics. kdb+ uses a proprietary array processing language called q, which is pretty hard to learn. At TimeSeries.Guru we give the power of kdb+ to customers who have no time to learn q but want to benefit from an outstanding piece of technology created for financial institutions.

High-level overview

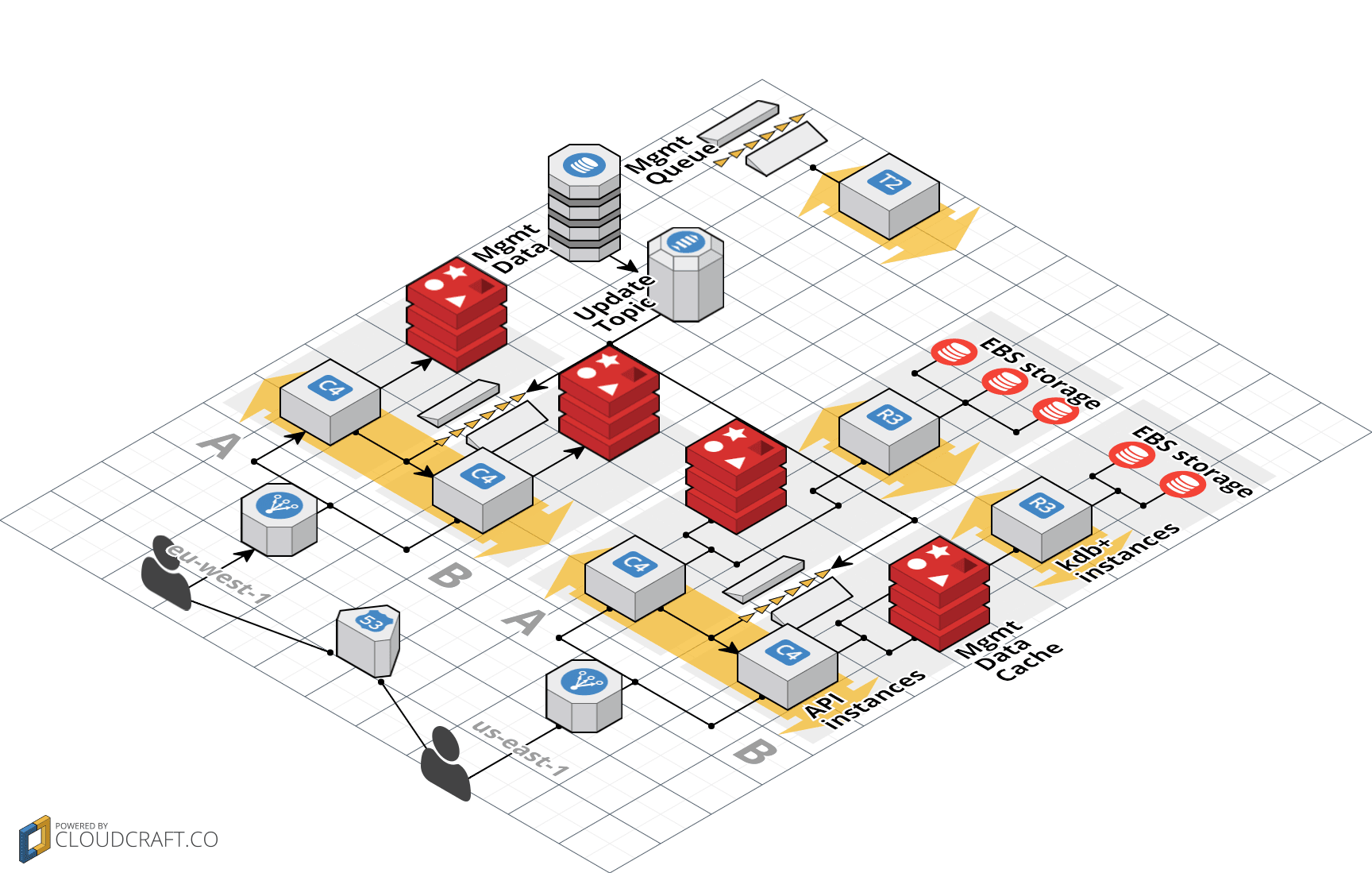

To insert data into a database, a customer calls our REST API. The API endpoint depends on the AWS region the customer selected for the database, for example eu-west-1-api.timeseries.guru or us-west-1-api.timeseries.guru. The DNS entry points to an Elastic Load Balancer (ELB) that forwards requests to one of the backend API machines hosted on Elastic Compute Cloud (EC2). The API backend is responsible for authentication, input validation, (de)serialization, and calling the kdb+ database instances. It therefore needs access to data like API tokens, databases, time series schema, … To reduce latency, this management data, which is primarily stored in DynamoDB, is also cached in ElastiCache Redis nodes in every Availability Zone of every region. Management data is replicated using an SNS topic that distributes messages to SQS queues in every region. The kdb+ database instances are hosted on EC2 and persist data on Elastic Block Store (EBS), network-attached block storage. To increase performance we stripe the data across multiple volumes so that kdb+ can read them in parallel and saturate the 10 Gbit network. Finally, a management SQS queue keeps track of tasks like provisioning a new database. In the background, all AWS resources are managed with CloudFormation. The diagram above gives you an overview.

The diagram was created with Cloudcraft.
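
The replication path for management data described in the overview (one topic, one queue per region, one cache per region) can be sketched in a few lines. This is only an in-memory illustration: `Topic`, `drain`, and the message layout with `op`, `key`, and `value` are hypothetical stand-ins, not the real SNS/SQS API and not the actual TimeSeries.Guru message format.

```python
from collections import deque


class Topic:
    """In-memory stand-in for an SNS topic: every subscribed queue gets a copy."""

    def __init__(self):
        self.queues = []

    def subscribe(self, queue):
        self.queues.append(queue)

    def publish(self, message):
        for queue in self.queues:
            # Shallow copy so each regional queue holds its own message object.
            queue.append(dict(message))


def drain(queue, cache):
    """Apply all pending updates from one regional queue to that region's cache."""
    applied = 0
    while queue:
        message = queue.popleft()
        if message["op"] == "put":
            cache[message["key"]] = message["value"]
        elif message["op"] == "delete":
            cache.pop(message["key"], None)
        applied += 1
    return applied


# Region names are taken from the endpoint examples; everything else is assumed.
REGIONS = ["eu-west-1", "us-west-1"]
topic = Topic()
queues = {region: deque() for region in REGIONS}   # stand-ins for SQS queues
caches = {region: {} for region in REGIONS}        # stand-ins for Redis nodes
for region in REGIONS:
    topic.subscribe(queues[region])

# One write to the topic ends up in every regional cache.
topic.publish({"op": "put", "key": "token:abc", "value": {"customer": "c1"}})
for region in REGIONS:
    drain(queues[region], caches[region])
```

The point of the fan-out is that the writer publishes once and does not need to know how many regions exist. Each region consumes its own queue at its own pace.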

Components

As outlined in the high-level overview, TimeSeries.Guru is divided into four components.

REST API backend

The REST API backend is implemented in Node.js, using the lightweight restify framework. To communicate with the kdb+ database instances we use our own open-source npm package node-q, which implements the q IPC protocol. We monitor the backend's performance with New Relic and also write metrics directly into a TimeSeries.Guru database with our own collectd plugin. Logs are shipped to Loggly. The REST API backend scales horizontally automatically to optimize resource utilization.

Management data

We use DynamoDB to store information about our customers, such as users, databases, time series, and other entities. To postpone the problem of replicating DynamoDB across AWS regions (which is now solved with DynamoDB Streams), we have only one source of truth, in Ireland. This is not a big problem because most database requests are reads, which we answer from a Redis caching layer that runs in every Availability Zone in every region. Running a cache per Availability Zone keeps network latency to a minimum because requests never cross Availability Zones.
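
To illustrate why a local cache takes most of the read load off the single source of truth, here is a minimal sketch of a cache-first lookup. Plain dictionaries stand in for the Redis node and the DynamoDB table, the `ReadThroughCache` class and the `db:42` key are hypothetical, and falling back to the source of truth on a cache miss is an assumption; the text only states that reads are answered from the cache.

```python
class ReadThroughCache:
    """Answer reads from a local cache; fall back to the source of truth on a miss."""

    def __init__(self, cache, source_of_truth):
        self.cache = cache              # stand-in for the Redis node in this AZ
        self.source = source_of_truth   # stand-in for the DynamoDB table in Ireland
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.cache:
            self.hits += 1
            return self.cache[key]
        # Cache miss: this is the only path that leaves the Availability Zone.
        self.misses += 1
        value = self.source.get(key)
        if value is not None:
            self.cache[key] = value
        return value


dynamo = {"db:42": {"region": "eu-west-1", "owner": "c1"}}
lookup = ReadThroughCache(cache={}, source_of_truth=dynamo)

first = lookup.get("db:42")    # miss, fetched from the source of truth
second = lookup.get("db:42")   # hit, served locally
```

After the first lookup, repeated reads for the same key never touch the remote table, which is what makes a single-region source of truth tolerable for a read-heavy workload.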

kdb+ database instances

The kdb+ database instances run a few q processes to insert and query data. All data is stored on EBS volumes. Depending on various factors we stripe your time series data across multiple volumes from which we can read in parallel to saturate the 10 Gbit network. Luckily, the operating system caches disk reads, so data that is requested repeatedly can be served from memory without going to the disks at all. How much data fits in that cache depends on the memory of your database instance (up to 244 GB at the moment).
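
The idea of striping data across volumes and reading it back in parallel can be sketched as follows. This is not how kdb+ or TimeSeries.Guru lays out data on disk; the round-robin placement, the chunk file names, and the use of temporary directories as "volumes" are all assumptions made for illustration.

```python
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor


def stripe(chunks, volumes):
    """Write chunk i to volume i % len(volumes); return the file paths in order."""
    paths = []
    for i, chunk in enumerate(chunks):
        path = os.path.join(volumes[i % len(volumes)], f"chunk-{i:04d}.bin")
        with open(path, "wb") as f:
            f.write(chunk)
        paths.append(path)
    return paths


def read_file(path):
    with open(path, "rb") as f:
        return f.read()


def read_parallel(paths, workers):
    """Read all chunks concurrently and reassemble them in their original order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in input order, so the data stays in sequence.
        return b"".join(pool.map(read_file, paths))


def demo():
    with tempfile.TemporaryDirectory() as root:
        volumes = []
        for n in range(3):  # three directories stand in for three EBS volumes
            volume = os.path.join(root, f"vol{n}")
            os.mkdir(volume)
            volumes.append(volume)
        chunks = [bytes([i]) * 4 for i in range(7)]
        paths = stripe(chunks, volumes)
        per_volume = [len(os.listdir(volume)) for volume in volumes]
        data = read_parallel(paths, workers=len(volumes))
        return per_volume, data == b"".join(chunks)
```

With independent volumes, each concurrent read can draw on a different volume's throughput, so the aggregate read rate is limited by the network link rather than by a single volume.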

Management queue

The management queue keeps track of tasks like creating a database, importing data, and backing up data. A dynamic fleet of EC2 instances picks up the management tasks as required. There is not much magic going on here. The workers are also implemented in Node.js.
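
A worker of this kind boils down to a loop that takes a task from the queue and dispatches it by type. The sketch below uses Python's in-process `queue.Queue` as a stand-in for SQS; the task types, field names, and handler functions are hypothetical, and the real workers are written in Node.js.

```python
import queue


def create_database(task):
    return f"created database {task['name']}"


def import_data(task):
    return f"imported {task['rows']} rows into {task['name']}"


def backup_data(task):
    return f"backed up {task['name']}"


# Dispatch table: one handler per (assumed) task type.
HANDLERS = {
    "create_database": create_database,
    "import_data": import_data,
    "backup_data": backup_data,
}


def run_worker(tasks):
    """Process tasks until the queue is empty and return what was done."""
    results = []
    while True:
        try:
            task = tasks.get_nowait()
        except queue.Empty:
            return results
        handler = HANDLERS.get(task["type"])
        if handler is None:
            results.append(f"skipped unknown task type {task['type']}")
        else:
            results.append(handler(task))
        tasks.task_done()


tasks = queue.Queue()
tasks.put({"type": "create_database", "name": "sensors"})
tasks.put({"type": "import_data", "name": "sensors", "rows": 1000})
tasks.put({"type": "resize", "name": "sensors"})
results = run_worker(tasks)
```

Because workers only pull from the queue and hold no state of their own, the fleet can grow or shrink with the backlog.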

Summary

The TimeSeries.Guru architecture was developed with simplicity in mind. We wanted to measure performance first so that we would not optimize the wrong things. We also wanted to create an elastic and automated system. With the help of Auto Scaling Groups and CloudFormation we achieved both of these goals.
