Containers on AWS: ECS, EKS, and Fargate

The container landscape in general, and on AWS in particular, is changing quickly. AWS constantly releases new services and features for deploying containers. Currently, the most interesting container management services are Elastic Container Service (ECS) and Elastic Kubernetes Service (EKS). On top of that, there are two options to provide the compute resources for your containers: virtual machines (EC2) or Fargate. When thinking about how to deploy your container workload on AWS, you should evaluate all four combinations. Read on to learn about these options and their differences.

This is a cross-post from the Cloudcraft blog.

What is ECS?

ECS (Elastic Container Service) is a fault-tolerant and scalable container management service. An ECS cluster manages compute resources and orchestrates the container lifecycle: allocate CPU and memory, fetch container image, start container, monitor container, stop container, clean up.

Another important aspect of ECS: automating deployments. ECS supports rolling updates and blue-green deployments. Besides that, ECS monitors the health of your applications and replaces failed containers automatically.

A big benefit of ECS is the tight integration into the AWS ecosystem. For example, the ECS API works very much like any other AWS API, which gives you frictionless authentication and authorization through AWS Identity and Access Management (IAM).

ECS is free of charge. However, it is a proprietary solution. That’s the most important difference from the other container management service: EKS.

What is EKS?

Kubernetes (K8s) is an open-source system for automating deployment, scaling, and management of containerized applications. At a high level, ECS and Kubernetes solve the same problems. My first contact with K8s was in 2017. Back then, I learned from a vivid example that making K8s ready for production is no child’s play.

Luckily, AWS came along with Elastic Kubernetes Service (EKS) in 2018. EKS offers fully-managed Kubernetes as a service. That’s a game changer, as you can focus on deploying your containers instead of investing a lot of energy in operating a distributed system.

Unlike ECS, Kubernetes is not limited to AWS. Every major cloud provider offers a managed Kubernetes service as well. Furthermore, you can deploy K8s on-premises and even on your local machine.

There’s a catch, though: an EKS cluster costs $72 per month. Quite a lot, compared to $0 per month for an ECS cluster. It is also disappointing that EKS does not support the latest K8s versions.

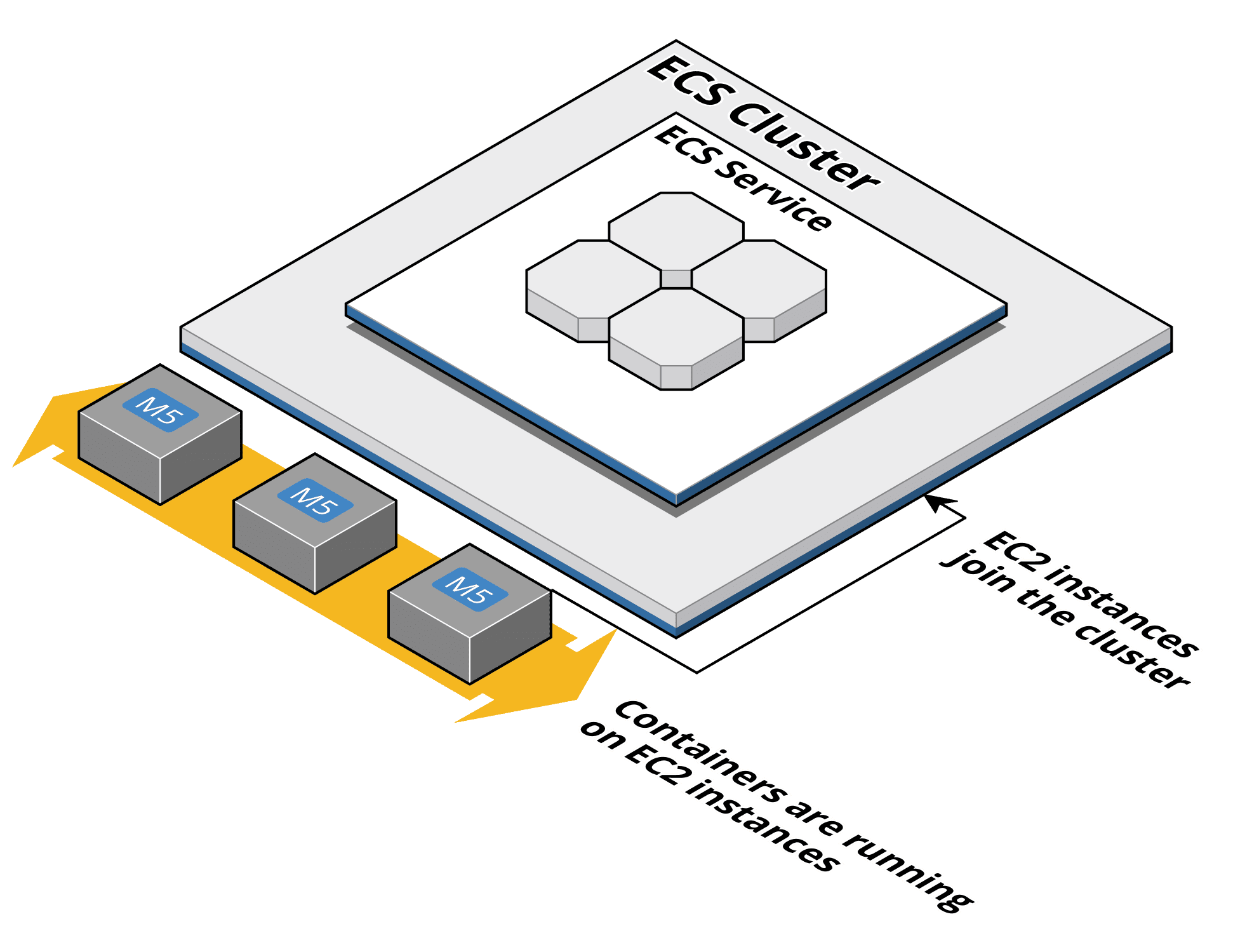

What is Cluster Auto Scaling/Managed Node Group?

The hard truth about containers: you are adding an additional layer to your infrastructure. Instead of managing virtual machines only, you have to manage virtual machines and containers. That adds extra complexity. For example, you need to scale both layers: the containers as well as the underlying virtual machines.

Fortunately, two features reduce the complexity of managing virtual machines for your container clusters:

- ECS Cluster Auto Scaling manages a pool of EC2 instances forming an ECS cluster. A capacity provider interacts with an Auto Scaling Group to increase or decrease the number of EC2 instances on demand.

- The EKS Managed Node Group launches and terminates EC2 instances on demand, based on an Amazon Machine Image (AMI) provided by AWS.

Keep in mind that the EC2 instances are still running in your AWS account, which means you are responsible for those virtual machines. AWS is just offering a tool to make that job a little bit easier.

What is Fargate?

AWS Fargate is a game changer for container workloads on AWS. Fargate provides a fully-managed compute engine for ECS and EKS. There is no longer any need to manage virtual machines as described above. Instead, AWS provides compute capacity for your containers on demand. That decreases complexity dramatically. I might even argue that operating both layers, containers and virtual machines, yourself is not economically viable in most cases.

Fargate allows you to allocate 0.25 to 4 vCPUs and 0.5 GB to 30.0 GB memory.
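To make those limits concrete, here is a minimal Python sketch that checks a requested size against the range quoted above and converts it into the units used in an ECS task definition. The conversion (1024 CPU units per vCPU, memory in MiB) follows the task definition format. The function name `to_task_size` and the constants are made up for illustration. Note that Fargate only accepts certain CPU/memory combinations within this range, which the sketch does not check.

```python
# Limits as quoted in the text above; they may change over time.
MIN_VCPU, MAX_VCPU = 0.25, 4.0
MIN_MEMORY_GB, MAX_MEMORY_GB = 0.5, 30.0


def to_task_size(vcpu: float, memory_gb: float) -> dict:
    """Validate a requested size and convert it to task-definition units.

    Only the overall range is checked, not the valid CPU/memory pairs.
    """
    if not MIN_VCPU <= vcpu <= MAX_VCPU:
        raise ValueError(f"vCPU must be between {MIN_VCPU} and {MAX_VCPU}")
    if not MIN_MEMORY_GB <= memory_gb <= MAX_MEMORY_GB:
        raise ValueError(
            f"memory must be between {MIN_MEMORY_GB} and {MAX_MEMORY_GB} GB"
        )
    return {
        "cpu": str(int(vcpu * 1024)),          # 1 vCPU = 1024 CPU units
        "memory": str(int(memory_gb * 1024)),  # memory is given in MiB
    }


print(to_task_size(0.25, 0.5))  # smallest size mentioned above
```

The returned values are strings because task-level `cpu` and `memory` are strings in a task definition.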

Keep in mind that Fargate comes with some limitations. A few examples:

- Running containers in privileged mode is not supported.

- It is not possible to configure custom DNS servers when launching a container.

- Making use of an IPC namespace to allow containers to communicate directly through shared-memory is not supported.

Most likely, those limitations will not hinder you from launching your containers on Fargate. But you might want to check the AWS documentation to see whether one of the limitations affects your scenario.

Cluster and Compute Costs

As mentioned above, there is a huge difference between the costs for cluster management. On the one hand, ECS is free of charge. On the other hand, each EKS cluster costs $72 per month. That matters all the more because AWS recommends using separate clusters to isolate workloads from each other.

“However, because Kubernetes is a single-tenant orchestrator, Fargate cannot guarantee pod-level security isolation. You should run sensitive workloads or untrusted workloads that need complete security isolation using separate Amazon EKS clusters.” 1

So you will end up with more than one cluster, for example, to isolate your test environment from your production environment.
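As a quick back-of-the-envelope calculation, the following Python sketch multiplies the monthly cluster fee from the text by the number of clusters. The function name and the two-cluster example are illustrative assumptions. Compute costs are not included.

```python
# Monthly cluster management fee in USD, as stated in the text above.
CLUSTER_FEE_PER_MONTH = {"ECS": 0.00, "EKS": 72.00}


def control_plane_cost(service: str, clusters: int, months: int = 1) -> float:
    """Cluster management fee only; compute is billed separately."""
    return CLUSTER_FEE_PER_MONTH[service] * clusters * months


# Example: one test cluster and one production cluster for a year.
for service in ("ECS", "EKS"):
    print(f"{service}: ${control_plane_cost(service, clusters=2, months=12):.2f}")
```

With two EKS clusters, the cluster fee alone adds up to $1,728 per year, while the same setup on ECS costs nothing for cluster management.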

Let’s have a look at compute costs next. First of all, there is no big difference between ECS and EKS when it comes to compute costs. The underlying EC2 instances are priced the same no matter if you are using them with ECS or EKS.

However, there is a significant difference when comparing EC2 with Fargate: Fargate is more expensive. Be aware that the surcharge varies considerably depending on the allocated CPU and memory resources.

| Category | Type | vCPU | Memory | Monthly Costs |
|---|---|---|---|---|
| XS | EC2 Instance t3.nano | 2 | 0.5 GiB | $3.80 |
| | Fargate | 0.25 | 0.5 GiB | $8.88 |
| L | EC2 Instance m5.large | 2 | 8.0 GiB | $70.08 |
| | Fargate | 2 | 8.0 GB | $83.89 |
| XXL | EC2 Instance r5.xlarge | 4 | 32.0 GiB | $183.96 |
| | Fargate | 4 | 30.0 GB | $212.59 |

I’d like to mention that comparing EC2 costs with Fargate costs is not really fair. This is simply because a container cluster based on EC2 instances will never be utilized to capacity. For example, placing containers of different sizes will cause fragmentation. Also, it is hard to calculate the cost savings by reducing the infrastructure complexity when switching from virtual machines to Fargate.
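To put numbers on both points, here is a small Python sketch based on the monthly prices from the table above. It calculates the Fargate surcharge per category and a rough break-even utilization: the share of an EC2 instance you would need to fill with containers before EC2 becomes cheaper than paying Fargate for exactly the resources used. This is a simplification. It assumes costs scale linearly with utilization and ignores that the rows are not perfectly like-for-like (for example, 2 vs. 0.25 vCPUs in the XS category).

```python
# Monthly prices in USD, copied from the table above.
PRICES = {
    "XS": {"ec2": 3.80, "fargate": 8.88},
    "L": {"ec2": 70.08, "fargate": 83.89},
    "XXL": {"ec2": 183.96, "fargate": 212.59},
}


def surcharge_percent(ec2: float, fargate: float) -> float:
    """How much more Fargate costs than EC2, in percent."""
    return (fargate / ec2 - 1) * 100


def break_even_utilization(ec2: float, fargate: float) -> float:
    """EC2 utilization (in percent) at which both options cost the same."""
    return ec2 / fargate * 100


for category, price in PRICES.items():
    print(
        f"{category}: +{surcharge_percent(**price):.1f}% surcharge, "
        f"break-even at {break_even_utilization(**price):.1f}% utilization"
    )
```

The surcharge is well over 100% for the smallest size but below 20% for the two larger ones. In this simplified model, a cluster of m5.large instances would need to run at more than about 84% utilization to beat Fargate on price.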

There is another aspect that you should take into consideration. Fargate for ECS comes with three different pricing models:

- On-demand is the default pricing model that we have discussed above. Pricing is per second with a 1-minute minimum.

- Savings Plans offer a simple deal: you commit to a certain usage, and AWS gives you a discount of up to 50% compared to the on-demand price.

- Spot allows you to benefit from spare capacity within AWS’s infrastructure. You get a discount of 68.87% compared to the on-demand price. In return, your containers might be terminated by AWS 2 minutes after it sends an interruption notification.

Unfortunately, Fargate for EKS does not support Spot.
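The following Python sketch applies the discounts mentioned above to the on-demand price of the 2 vCPU / 8 GB Fargate configuration from the earlier table. It also shows how the 1-minute minimum affects short-running containers. The helper names are illustrative. The Savings Plans figure uses the maximum discount, so treat it as a best case.

```python
import math

ON_DEMAND_MONTHLY = 83.89         # USD; Fargate with 2 vCPU and 8 GB (table above)
SAVINGS_PLAN_MAX_DISCOUNT = 0.50  # "up to 50%", so this is the best case
SPOT_DISCOUNT = 0.6887            # 68.87%, as quoted above


def discounted(price: float, discount: float) -> float:
    """Price after applying a relative discount (0.5 means 50% off)."""
    return price * (1 - discount)


def billable_seconds(runtime_seconds: float) -> int:
    """Per-second billing with a 1-minute minimum."""
    return max(60, math.ceil(runtime_seconds))


print(f"On-demand:    ${ON_DEMAND_MONTHLY:.2f}")
print(f"Savings Plan: ${discounted(ON_DEMAND_MONTHLY, SAVINGS_PLAN_MAX_DISCOUNT):.2f} (best case)")
print(f"Spot:         ${discounted(ON_DEMAND_MONTHLY, SPOT_DISCOUNT):.2f}")
print(f"A 10-second task is billed for {billable_seconds(10)} seconds")
```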

Infrastructure Scaling

As discussed above, ECS Cluster Auto Scaling and EKS Managed Node Group simplify the task of managing a fleet of EC2 instances. In general, both tools solve the same problem. However, there are two important differences:

- If necessary, the EKS Managed Node Group will move containers to another virtual machine so that it can reduce overcapacity within the cluster. ECS Cluster Auto Scaling does not consider moving a container to another virtual machine to reduce the cluster size at all.

- AWS maintains pre-configured AMIs for ECS and EKS. However, ECS Cluster Auto Scaling does not support rolling updates to switch to the latest AMI. The EKS Managed Node Group, on the other hand, comes with rolling updates for the virtual machines by default.

Therefore, EKS is the better choice when running your container workload on top of EC2. However, I highly recommend avoiding spinning up EC2 instances for your container cluster at all.
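To illustrate why the first difference matters, here is a toy Python model of a cluster. It compares how many virtual machines are needed when only empty machines can be removed with how many are needed when containers may be moved. This is not the algorithm either service uses. The repacking uses a simple first-fit-decreasing heuristic, all names are made up for illustration, and each container is assumed to fit on a single machine.

```python
def nodes_without_moving(placement: list[list[int]]) -> int:
    """Only machines that are already empty can be removed."""
    return sum(1 for node in placement if node)


def nodes_after_repacking(placement: list[list[int]], capacity: int) -> int:
    """Re-place all containers using first-fit decreasing."""
    containers = sorted((c for node in placement for c in node), reverse=True)
    free: list[int] = []  # remaining capacity of each machine in use
    for size in containers:
        for i, remaining in enumerate(free):
            if size <= remaining:
                free[i] -= size
                break
        else:
            # No existing machine has room, so start a new one.
            free.append(capacity - size)
    return len(free)


# Four machines with 4 vCPUs each; the numbers are vCPUs per container.
placement = [[2], [1, 1], [2], []]
print(nodes_without_moving(placement))               # 3 machines still needed
print(nodes_after_repacking(placement, capacity=4))  # 2 machines are enough
```

In this example, only the one empty machine can be removed without moving containers, whereas consolidating the containers frees up two machines.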

Scaling the underlying infrastructure is no longer your job when using Fargate. Instead, AWS is responsible for managing the underlying machines.

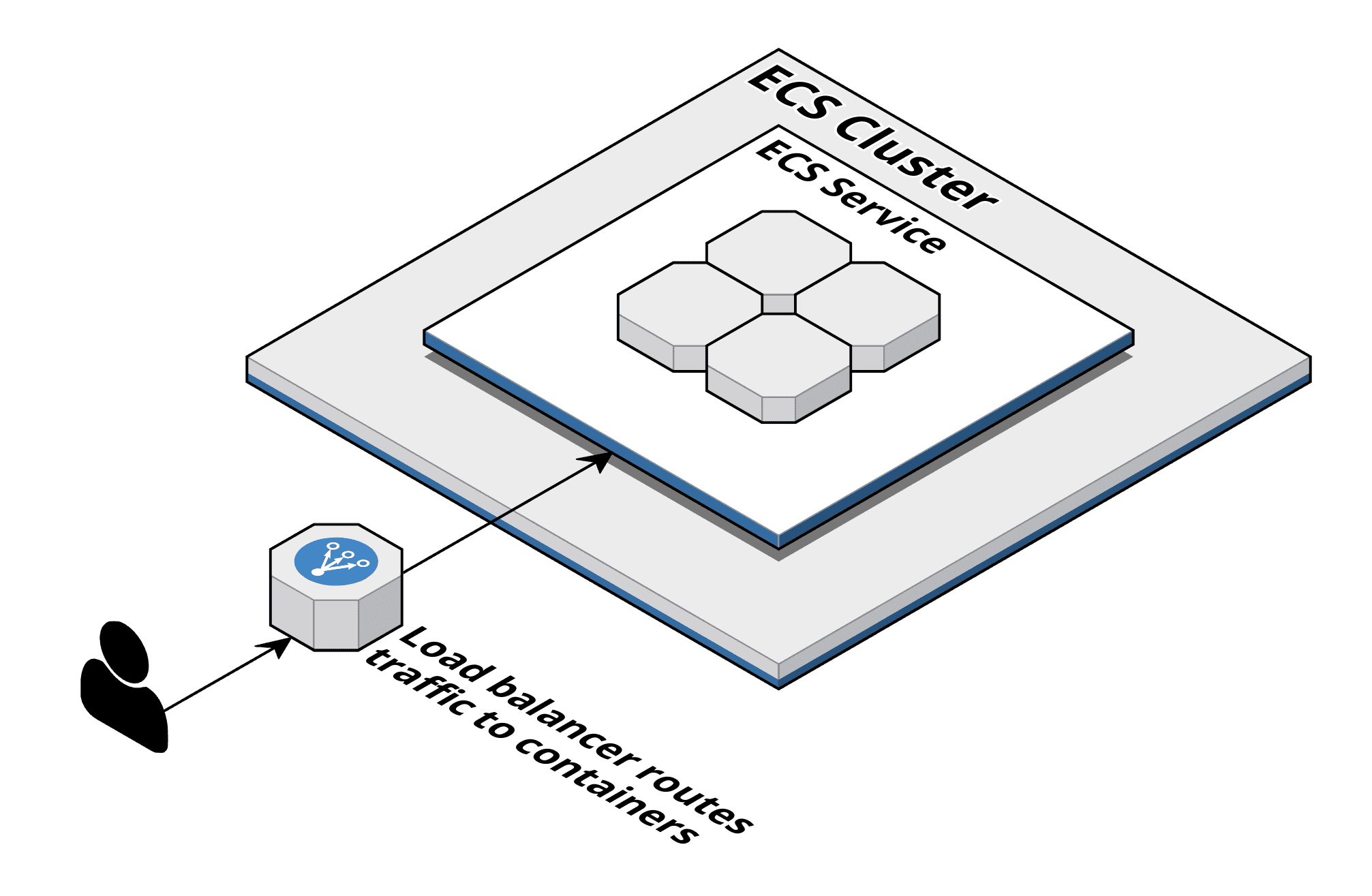

Load Balancing and Service Discovery

Whenever feasible, I go with a managed service instead of operating a service on my own. That’s true for load balancing as well.

AWS offers two kinds of managed load balancers:

- Application Load Balancer (ALB) operates on layer 7 and is capable of routing incoming HTTP/HTTPS requests to a target.

- Network Load Balancer (NLB) operates on layer 4 and routes TCP and UDP connections to a target.

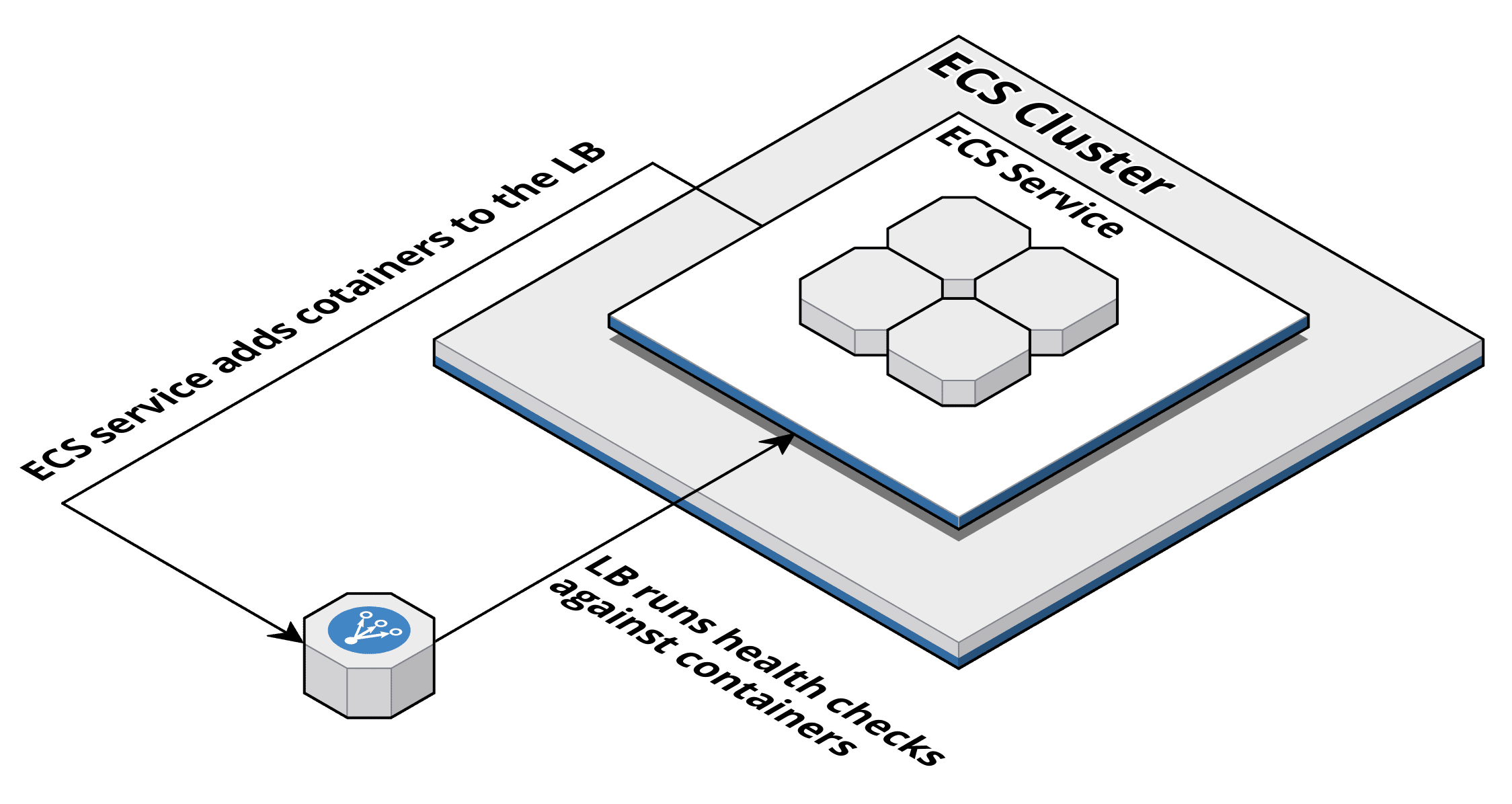

An ECS service will launch containers and register them with an ALB/NLB out of the box. With EKS you can achieve very similar behaviour by using the ALB Ingress Controller for Kubernetes.

Kubernetes is known for its built-in service discovery. By default, kube-proxy will route inter-service requests within the cluster. For example, a container of the frontend service can easily send a request to the backend service, without needing to know which containers serve requests for the backend service.

When discussing the differences between ECS and EKS, I’ve often heard that ECS does not provide service discovery at all. However, that is not correct.

By default, ECS integrates with a service called AWS Cloud Map, which offers service discovery over an API or DNS. An ECS service will register new containers at Cloud Map. Other services are then able to resolve the service’s IP addresses via DNS.
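From the client's point of view, DNS-based service discovery is just a DNS lookup. The following Python sketch resolves a service name to its IPv4 addresses using the standard library and picks one of them at random. The hostname in the usage comment is hypothetical and depends on the namespace and service name you configure. The random selection is a simplistic stand-in for client-side load balancing.

```python
import random
import socket


def resolve_service(hostname: str, port: int) -> list[str]:
    """Return the distinct IPv4 addresses registered for a DNS name."""
    infos = socket.getaddrinfo(
        hostname, port, family=socket.AF_INET, type=socket.SOCK_STREAM
    )
    # Each entry is (family, type, proto, canonname, (address, port)).
    return sorted({info[4][0] for info in infos})


def pick_backend(addresses: list[str]) -> str:
    """Pick one address at random."""
    if not addresses:
        raise LookupError("no addresses registered for this service")
    return random.choice(addresses)


# Usage inside the VPC (hypothetical service name):
#   addresses = resolve_service("backend.example.local", 8080)
#   target = pick_backend(addresses)
```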

Both ECS and EKS integrate with AWS App Mesh. Doing so allows you to build a service mesh based on the open source Envoy proxy.

Summary and Comparison

The following table summarizes the similarities and differences discussed above. Personally, I still prefer ECS for most scenarios.

| | ECS + Fargate | EKS + Fargate | ECS + Cluster Auto Scaling | EKS + Managed Node Group |
|---|---|---|---|---|
| Deployment Options | ⚠️ AWS only | ⚠️ AWS only | ⚠️ AWS only (except ECS Anywhere) | ✅ Major cloud providers, On-premises, … |
| Availability | ✅ All AWS regions | ⚠️ Available in 18 regions | ✅ All AWS regions | ✅ Available in most regions |
| Cluster Costs (per month) | $ 0.00 | $ 72.00 | $ 0.00 | $ 72.00 |
| Compute Costs | 💰💰 | 💰💰 | 💰 | 💰 |
| Compute Pricing Options | ✅ On-demand | ✅ On-demand | ✅ On-demand | ✅ On-demand |
| Virtual Machines | ✅ Does not involve managing EC2 instances. | ✅ Does not involve managing EC2 instances. | ⚠️ EC2 instances running in your AWS account. ECS Cluster Auto Scaling does not cover all aspects of operating virtual machines (e.g. rolling update to update AMI). | ⚠️ EC2 instances running in your AWS account, but Managed Node Group + Cluster Autoscaler automate most operations tasks. |
| Infrastructure Scaling | ✅ AWS takes care of the scaling of the infrastructure. | ✅ AWS takes care of the scaling of the infrastructure. | ⚠️ ECS Cluster Auto Scaling works fine for scaling up. But will not scale down automatically in most scenarios. | ✅ Managed Node Groups and Cluster Autoscaler scale the cluster capacity up and down automatically. |
| Networking | ✅ ENI and Security Group per task/container. | ✅ ENI and Security Group per pod/container. | ✅ ENI and Security Group per task/container. | ⚠️ Multiple containers/pods share the same ENI and Security Group. |
| Load Balancing | ✅ ALB + NLB | ✅ ALB | ✅ ALB + NLB + CLB | ✅ ALB + NLB + CLB |
| Service Discovery | ✅ Cloud Map | ✅ K8s | ✅ Cloud Map | ✅ K8s |