EKS vs. ECS: orchestrating containers on AWS

AWS announced Kubernetes-as-a-Service at re:Invent in November 2017: Elastic Container Service for Kubernetes (EKS). As of yesterday, EKS is generally available. I discussed ECS vs. Kubernetes before EKS was a thing, so I’d like to take a second attempt and compare EKS with ECS.

Before looking at the differences, let us start with what EKS and ECS have in common. Both solutions manage containers distributed among a fleet of virtual machines. Managing containers includes:

- Monitoring and replacing failed containers.

- Deploying new versions of your containers.

- Scaling the number of containers based on load.

What are the differences between EKS and ECS?

Load Balancing

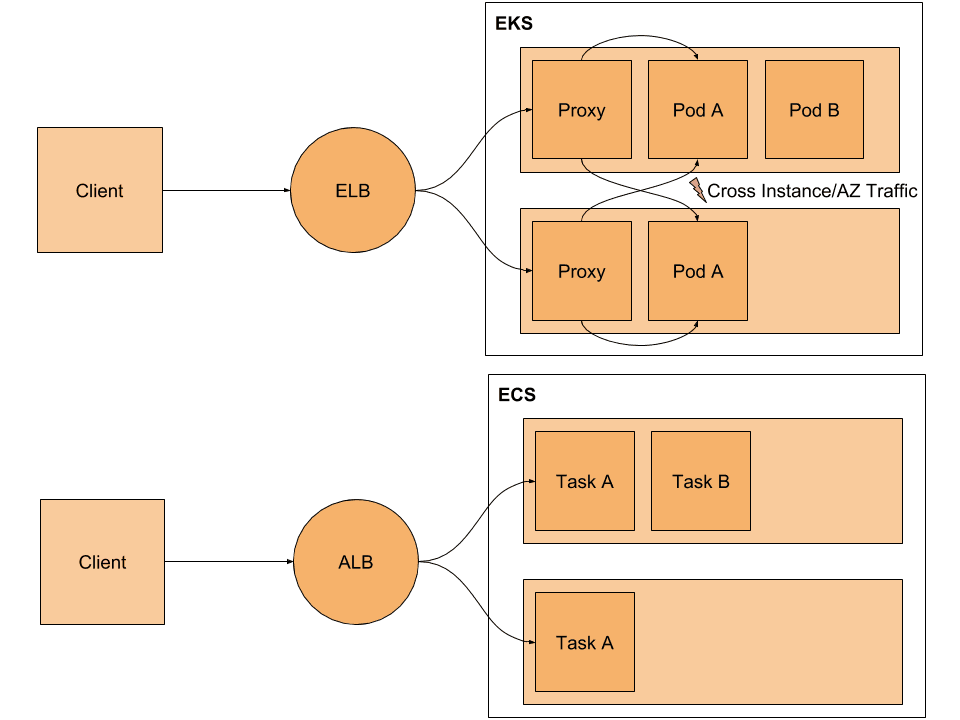

Usually, a load balancer is the entry point into your AWS infrastructure. Both EKS and ECS offer integrations with Elastic Load Balancing (ELB).

On the one hand, Kubernetes - and therefore EKS - offers an integration with the Classic Load Balancer. Support for the Application Load Balancer and Network Load Balancer is available as a beta release. When you create a service, Kubernetes also creates or configures a Classic Load Balancer for you.

- The client sends a request to ELB.

- ELB distributes the request to one of the nodes (the EC2 instances).

- A proxy running on the node forwards the request to one of the pods providing the service.
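To make the first step more concrete: in Kubernetes, it is a Service of type `LoadBalancer` that triggers the load balancer provisioning described above. The following is only a minimal sketch that builds such a manifest in Python and prints it as JSON. The service name `web`, the `app: web` selector, and the port numbers are placeholders chosen for illustration, not values from this article.

```python
import json

# Minimal Kubernetes Service manifest of type LoadBalancer.
# "web", the selector label, and the ports are hypothetical placeholders.
service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {
        "type": "LoadBalancer",             # asks the cloud provider for a load balancer
        "selector": {"app": "web"},         # pods carrying this label receive the traffic
        "ports": [{"port": 80, "targetPort": 8080}],
    },
}

manifest = json.dumps(service, indent=2)
print(manifest)
```

Saved to a file, a manifest like this could be applied with `kubectl apply -f`, which accepts JSON as well as YAML.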

On the other hand, ECS provides an integration with the Application Load Balancer (ALB), the Network Load Balancer (NLB) as well as the Classic Load Balancer (CLB). When using the ALB, the flow for each incoming request needs only two instead of three steps.

- The client sends a request to the ALB.

- The ALB forwards the request to one of the tasks providing the service.

The following figure illustrates the difference.

The proxy running on each node distributes requests randomly or round-robin among all pods running in the cluster, not just the pods on its own node. Doing so increases the network traffic between EC2 instances and between AZs, which consumes network capacity and adds latency.

In contrast, the tight integration between ECS and ALB does not require a third routing step and is, therefore, more efficient.
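To get a feeling for how often the extra hop leaves the node, here is a rough back-of-the-envelope model. It assumes that the load balancer picks a node uniformly at random and that the proxy then picks a pod uniformly among all pods of the service. Both are simplifications, and the function name `cross_node_fraction` is made up for this sketch.

```python
from fractions import Fraction


def cross_node_fraction(pods_per_node):
    """Fraction of requests that the proxy forwards to a pod on another node.

    Simplified model: the load balancer chooses a node uniformly, then the
    proxy chooses a pod uniformly among all pods of the service.
    """
    nodes = len(pods_per_node)
    total_pods = sum(pods_per_node)
    if nodes == 0 or total_pods == 0:
        raise ValueError("need at least one node and one pod")
    # Probability that the chosen pod happens to run on the node that was hit.
    local = sum(Fraction(1, nodes) * Fraction(p, total_pods) for p in pods_per_node)
    return 1 - local


# Three nodes with two pods each: two out of three requests cross nodes.
print(cross_node_fraction([2, 2, 2]))  # 2/3
```

With evenly spread pods the result is `1 - 1/N` for `N` nodes, so in this model the share of cross-node traffic grows with the size of the cluster.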

VPC and ENI

Being able to integrate containers running on EKS or ECS into your VPC is excellent. Both EKS and ECS allow attaching an Elastic Network Interface (ENI) to containers. However, there is a slight difference between VPC mode with EKS and ECS.

As shown in the following figure, EKS attaches multiple ENIs per instance. Multiple private IP addresses are assigned to each ENI. EKS assigns each pod - a group of containers - a private IP address, so several pods share a network interface. That is different with ECS, where each task - a group of containers - is assigned a separate ENI.

The number of ENIs per instance is limited to between 2 and 15, depending on the instance type. Because EKS shares ENIs between pods, you can place up to 750 pods per instance, far more than the maximum of 15 tasks you can place per instance with ECS.
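The 750 and 15 figures can be reproduced with simple arithmetic. The sketch below assumes an instance type with 15 ENIs and 50 private IP addresses per ENI. The 50 is my assumption to arrive at the 750 mentioned above, and both function names are made up for illustration. Treat the results as upper bounds: the numbers usable in practice can be lower, because the instance itself needs some addresses and interfaces.

```python
def max_pods_shared_enis(enis, ips_per_eni):
    """Upper bound when pods share ENIs: one private IP address per pod."""
    return enis * ips_per_eni


def max_tasks_dedicated_enis(enis):
    """Upper bound when every task gets its own ENI."""
    return enis


# Assumed instance type: 15 ENIs with 50 private IP addresses each.
print(max_pods_shared_enis(15, 50))   # 750
print(max_tasks_dedicated_enis(15))   # 15

# At the other end of the range, with only 2 ENIs per instance:
print(max_tasks_dedicated_enis(2))    # 2
```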

But sharing ENIs between pods comes with a limitation as well. You are not able to restrict traffic with a security group per pod, because the ENI, and therefore its security group, is shared by multiple pods.

IAM

ECS supports IAM Roles for Tasks, which is a great way to grant containers access to AWS resources, for example to allow containers to access S3, DynamoDB, SQS, or SES at runtime. Unfortunately, EKS does not support IAM roles for pods out of the box at the moment.
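On the ECS side, the role is attached through the `taskRoleArn` field of the task definition. The sketch below builds a minimal task definition in Python and prints it as JSON. The family name, account ID, role name, and container image are hypothetical placeholders. The IAM role itself, along with its policy for S3 or any other service, has to exist already.

```python
import json

# Minimal ECS task definition with an IAM role for the task.
# Account ID, role name, family, and image are hypothetical placeholders.
task_definition = {
    "family": "report-generator",
    "taskRoleArn": "arn:aws:iam::123456789012:role/report-generator-task",
    "containerDefinitions": [
        {
            "name": "app",
            "image": "example/report-generator:latest",
            "memory": 256,        # hard memory limit in MiB
            "essential": True,
        }
    ],
}

print(json.dumps(task_definition, indent=2))
```

Containers of a task started from such a definition receive temporary credentials for that role, so no access keys have to be baked into the image.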

Pricing

Each EKS cluster costs you 0.20 USD per hour, which is about 144 USD per month. ECS itself is free of charge. For both EKS and ECS, you have to pay for the underlying EC2 instances and related resources.
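The monthly figure is plain arithmetic on the hourly rate. As a small sketch, assuming a 30-day month (the function name is made up):

```python
from decimal import Decimal

HOURLY_RATE_USD = Decimal("0.20")  # per EKS cluster, as stated above
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30                # assumption: a 30-day month


def monthly_cluster_fee(clusters=1):
    """EKS control plane fee per month, excluding EC2 and other resources."""
    return clusters * HOURLY_RATE_USD * HOURS_PER_DAY * DAYS_PER_MONTH


print(monthly_cluster_fee())   # 144.00
print(monthly_cluster_fee(3))  # 432.00
```

The fee is charged per cluster, so running separate clusters for development, staging, and production triples it.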

Compatibility

EKS offers Kubernetes-as-a-Service for AWS. However, Kubernetes is also an option at other cloud providers, on-premises, or even on your developer machine. In other words: Kubernetes offers you a layer of abstraction that allows you to deploy your applications on top of any infrastructure.

ECS, on the other hand, is only available on AWS.

Summary

For now, ECS offers a much deeper integration into the AWS infrastructure than EKS. A strong argument for EKS is the possibility to use the same technology at other cloud providers or on-premises.