GitHub Actions vs. AWS CodePipeline

Deployment pipelines first! When launching a new project, start with building a deployment pipeline. Automating the process from changing the source code to shipping your application to the production environment is a success factor.

But how do you build a deployment pipeline? This post compares two different approaches: GitHub Actions and AWS CodePipeline.

GitHub has been hosting source code for more than ten years. On top of that, GitHub announced their CI/CD service called GitHub Actions to the public in November 2019.

AWS has offered its continuous delivery service CodePipeline since July 2015. About a year later, AWS announced an essential add-on: CodeBuild. When I write about CodePipeline in the following, I always refer to the combination of CodePipeline and CodeBuild. So to be more accurate, the title of this blog post should be: GitHub Actions vs. CodePipeline and CodeBuild.

Overview

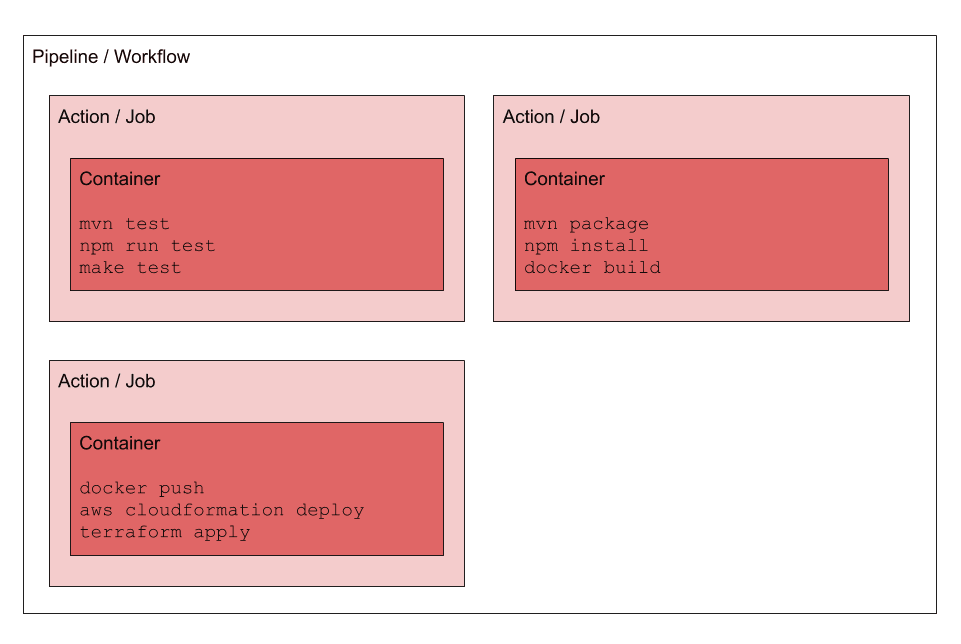

Both GitHub Actions and AWS CodePipeline use similar concepts to provide a deployment pipeline:

- Workflow Management: customize the deployment workflow to your needs by arranging actions in parallel or in sequence.

- Isolated Job Execution: use pre-built or custom container images to provide an isolated environment for executing actions (e.g., a build job).
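To make the workflow management concept more tangible, here is a minimal Python sketch that is not tied to either service. It models a pipeline as a dependency graph and works out which actions could run in parallel and which have to wait. The action names and the `execution_stages` helper are hypothetical; the ordering itself comes from the standard library module `graphlib` (Python 3.9 or later).

```python
from graphlib import TopologicalSorter


def execution_stages(dependencies):
    """Group actions into stages; actions within one stage can run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    stages = []
    while sorter.is_active():
        # Every action whose predecessors are all finished is ready to run.
        ready = sorted(sorter.get_ready())
        stages.append(ready)
        sorter.done(*ready)
    return stages


# Hypothetical pipeline: each action maps to the actions it depends on.
pipeline = {
    "checkout": set(),
    "lint": {"checkout"},
    "test": {"checkout"},
    "deploy": {"lint", "test"},
}

stages = execution_stages(pipeline)
```

In this sketch, `lint` and `test` end up in the same stage because both depend only on `checkout`, while `deploy` waits for both of them.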

In summary, the main concepts are similar, but what are the differences?

Comparison

The following table compares GitHub Actions and AWS CodePipeline. To achieve better comparability, I compare the GitHub-hosted runner with 2 CPUs and 7 GB memory with the CodeBuild compute type general1.medium, which comes with 4 CPUs and 7 GB memory.

| | GitHub Actions | AWS CodePipeline |
|---|---|---|
| Free Tier (Linux) | free for public repositories | 50 minutes per month |
| Costs for Pipeline | free | $1.00 per month |
| Costs for Build Job | $0.008 per minute | $0.010 per minute |
| Environments | Linux, Windows, macOS | Linux, (Windows) |
| Source Code Repository | GitHub | GitHub, Bitbucket, AWS CodeCommit, Amazon S3 |
| Deployment Target | Any environment, works best for public cloud providers. | Mainly AWS, in theory you could deploy to other cloud providers as well. |
| AWS Authentication | Store access keys of IAM user in GitHub Secrets | IAM role, no need to manage access keys |
| Developer Experience | 🏖 Easy to use | 🤷‍♀️ Difficult to get started |
| Integrations | Open marketplace providing all kinds of integrations of mixed quality. | Pre-built and high-quality integrations into the AWS ecosystem. |
| Self-hosted Workers? | ✅ | ⚠️ with custom actions and custom code |
| Serialize pipeline executions | ✅ | ✅ |
| Support for multiple branches? | ✅ | ❌ |
| Pipeline as Code | ✅ | ⚠️ with custom CloudFormation or Terraform and CodeBuild action |

GitHub Actions offers an outstanding developer experience. As long as you host your source code on GitHub, the solution is flexible, not only because of the integrations (aka actions) offered in the open marketplace. AWS CodePipeline integrates very well into the AWS ecosystem. Being able to use IAM roles for authentication instead of fiddling around with access keys for IAM users is a big plus.
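The pricing rows of the comparison table can be turned into a rough monthly estimate. The following Python sketch uses only the prices listed in the table; the function names are hypothetical, and it assumes that the 50 free minutes apply to the CodeBuild build job and that a private repository on GitHub pays for every minute (the table lists no other allowance). Treat it as back-of-the-envelope arithmetic, not as a pricing calculator.

```python
from decimal import Decimal

# Prices taken from the comparison table (USD).
GITHUB_PER_MINUTE = Decimal("0.008")
CODEBUILD_PER_MINUTE = Decimal("0.010")
CODEPIPELINE_PER_MONTH = Decimal("1.00")
CODEBUILD_FREE_MINUTES = 50  # assumption: free tier applies to build minutes


def github_actions_cost(build_minutes, public_repo=False):
    """Monthly cost of build jobs on GitHub Actions."""
    if public_repo:
        return Decimal("0")
    return GITHUB_PER_MINUTE * build_minutes


def codepipeline_cost(build_minutes, pipelines=1):
    """Monthly cost of CodePipeline plus CodeBuild."""
    billable_minutes = max(0, build_minutes - CODEBUILD_FREE_MINUTES)
    return CODEPIPELINE_PER_MONTH * pipelines + CODEBUILD_PER_MINUTE * billable_minutes


# Example: 500 build minutes per month with a single pipeline.
github_total = github_actions_cost(500)
aws_total = codepipeline_cost(500)
```

With 500 build minutes per month, the sketch arrives at $4.00 for GitHub Actions and $5.50 for CodePipeline with CodeBuild, so at this scale the price difference is unlikely to be the deciding factor.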

Integrations

AWS CodePipeline comes with the following pre-built integrations:

- AWS CloudFormation: deploy your Infrastructure as Code templates

- AWS CodeBuild: run any kind of build job inside a container

- AWS CodeDeploy: deploy to a fleet of EC2 instances

- Amazon Elastic Container Service (ECS): deploy your containers with ECS

- AWS Elastic Beanstalk: deploy your app on Amazon’s PaaS platform

- AWS OpsWorks Stacks: deploy your app based on Chef cookbooks and recipes

- AWS Service Catalog: deploy a product

- Alexa Skills Kit: deploy your skill

- AWS Lambda: invoke customized source code

- AWS Device Farm: run UI tests on mobile devices

It is worth mentioning that the integration with AWS CodeBuild allows you to run any script inside a container based on a pre-built or customized image. This provides maximum flexibility. On top of that, a few 3rd party services are integrated as well.

GitHub has taken a different approach: its open marketplace lists more than 2,000 integrations (aka actions). Well-known and trusted organizations (e.g., GitHub itself and AWS) as well as individuals publish their actions. A few examples:

- cfn-lint-action by Scott Brenner enables arbitrary actions for interacting with CloudFormation Linter.

- amazon-ecr-login by AWS logs in the local Docker client to one or more ECR registries.

- cache by GitHub allows caching dependencies and build outputs to improve workflow execution time.

Be careful when adding 3rd party actions to your deployment pipeline. First of all, you need to fully trust the code that you are adding to your deployment pipeline. In theory, someone could insert malicious code or steal your AWS credentials by publishing a trojan horse to the GitHub Marketplace. Also, a 3rd party action could be removed from the GitHub Marketplace, which would break your deployment pipeline without warning.

Luckily, it is not very hard to implement your own GitHub Actions. There are two ways to do so:

- Node.js: run some JavaScript code

- Docker: run anything that you have packaged into a Docker image before

The following example shows how to build a GitHub Action to deploy a CloudFormation stack. I’ve decided to use a Docker-based action, as this approach is very flexible.

First of all, you need to define a Dockerfile. The Dockerfile defines the base image, installs build dependencies, and adds the entrypoint.sh script, which will be executed on each action invocation.

# Base image to start from |

Use the entrypoint.sh script to customize your deployment. In this simple example, all we need to do is to execute the aws cloudformation deploy command.

|
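As an illustration of the deployment step only, here is a Python sketch that assembles and runs the `aws cloudformation deploy` command. It is not the entrypoint.sh script from this post, which is a shell script; the function names, the default capability, and the example stack and template names are assumptions. The flags used (`--stack-name`, `--template-file`, `--capabilities`, `--no-fail-on-empty-changeset`) are regular options of the AWS CLI.

```python
import subprocess


def build_deploy_command(stack_name, template_file, capabilities=("CAPABILITY_IAM",)):
    """Assemble the argument list for `aws cloudformation deploy`."""
    command = [
        "aws", "cloudformation", "deploy",
        "--stack-name", stack_name,
        "--template-file", template_file,
        # Do not treat "nothing changed" as a failed deployment.
        "--no-fail-on-empty-changeset",
    ]
    if capabilities:
        command += ["--capabilities", *capabilities]
    return command


def deploy(stack_name, template_file):
    """Run the deployment; raises CalledProcessError if the AWS CLI fails."""
    subprocess.run(build_deploy_command(stack_name, template_file), check=True)


# Hypothetical stack and template names, for illustration only.
command = build_deploy_command("website", "template.yml")
```

Keeping the command assembly separate from the `subprocess.run` call makes it possible to check the arguments without touching an AWS account.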

One tiny bit is missing. GitHub Actions requires you to describe your action in an action.yml file. The configuration file includes a name and description for your action. On top of that, the action.yml file tells GitHub to run the action inside a Docker container. GitHub builds the container on-the-fly with the help of your Dockerfile.

name: 'Deploy CloudFormation Stack' |

That is a simple way to define custom actions for your deployment pipeline.

Configuration

How do you configure a deployment pipeline? Define your pipeline as code. My blog post Delivery Pipeline as Code: AWS CloudFormation and AWS CodePipeline is still relevant. A lot of know-how and lessons learned will be baked into your deployment pipeline. Make sure that knowledge is written down in code so that you can reproduce it whenever necessary.

GitHub requires you to define your workflow within your source code repository. The following code snippet shows the definition of the deployment pipeline used to deploy this website.

- Check out the source code.

- Read the access keys for AWS from the repository secrets.

- Execute the custom deploy action described in the previous section.

on: |

Use AWS CloudFormation or Terraform to define your AWS CodePipeline. The following example shows a CodePipeline written down as a CloudFormation template.

- Check out the source code.

- Deploy the CloudFormation stack.

# [...] |

For me, the most important thing is to define your deployment pipeline as code. From my point of view, both approaches are similar in that respect.

AWS Credentials

Your deployment pipelines need administrator access to be able to deploy your application and infrastructure to AWS. In general, you should avoid IAM users with access keys wherever possible. However, using access keys to authenticate an IAM user with far-reaching permissions is the only option to execute a deployment with GitHub Actions. Of course, GitHub offers a way to store the AWS access keys in a secure and encrypted manner. Nevertheless, a residual risk remains. On top of that, you need to rotate the access keys regularly to follow security best practices and compliance rules.

AWS CodePipeline has an advantage here. Instead of using static access keys, make use of IAM roles, which provide short-lived credentials out of the box. You can even define different IAM roles for various actions in your pipeline, which allows you to implement the principle of least privilege.
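To show what credentials from an IAM role look like compared to static access keys, here is a small Python sketch. CodePipeline and CodeBuild assume their roles for you, so you would not normally write this yourself; the helper name, the session name, and the 15-minute duration are assumptions for illustration. The `sts_client` argument is expected to behave like `boto3.client("sts")`, whose `assume_role` call returns temporary credentials including a session token and an expiration time.

```python
def temporary_credentials(sts_client, role_arn, session_name="deployment"):
    """Assume an IAM role and return short-lived credentials as environment variables."""
    response = sts_client.assume_role(
        RoleArn=role_arn,
        RoleSessionName=session_name,
        DurationSeconds=900,  # 15 minutes, the shortest duration STS accepts
    )
    credentials = response["Credentials"]
    return {
        "AWS_ACCESS_KEY_ID": credentials["AccessKeyId"],
        "AWS_SECRET_ACCESS_KEY": credentials["SecretAccessKey"],
        # The session token is what marks these credentials as temporary.
        "AWS_SESSION_TOKEN": credentials["SessionToken"],
    }
```

Because these credentials expire on their own, there is nothing to rotate, and a separate role per pipeline action keeps each set of permissions small.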

Vendor Lock-in

In my view, there is no significant vendor lock-in for deployment pipelines. Make sure to put the real magic into custom actions running inside Docker containers. Doing so allows you to switch between CI/CD solutions very quickly. For example, I’ve migrated the deployment pipeline for this website from Jenkins to GitHub Actions within 2 hours.

It is worth mentioning that GitHub Actions works only with source code hosted on GitHub. AWS CodePipeline works with source code hosted on GitHub, Bitbucket, AWS CodeCommit, or Amazon S3 (the latter can be used as a workaround to integrate with any source code repository).

Summary

I like the experience that GitHub Actions provides for building deployment pipelines. The deep integration into my development workflow (e.g., pull requests) is excellent. Also, GitHub Actions is easy to use. On the other hand, I’m missing the integrations into the AWS ecosystem that I’m used to from AWS CodePipeline. However, the main concepts behind GitHub Actions and AWS CodePipeline are similar. Therefore, the choice comes down to the details.
