Improve Security (Groups) using VPC Flow Logs & AWS Config

As mentioned in the previous post Your AWS Account is a mess? Learn how to fix it!, most AWS accounts are a mess. This can be a serious risk, especially for security-related resources like Security Groups. In this post, we will describe a technique to make the existing Security Group rules as strict as possible using data from VPC Flow Logs and AWS Config. We will focus on inbound rules but the concept works similarly for outbound rules.

Concept

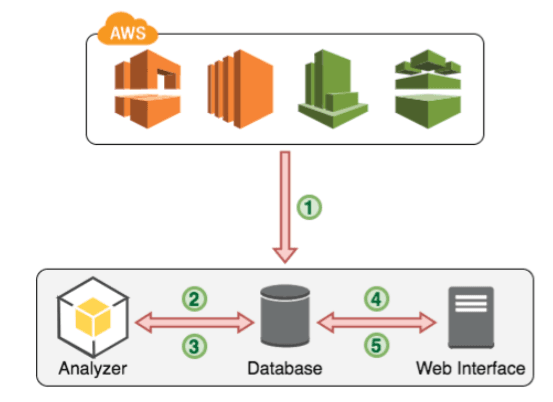

Security Group rules often allow more than they should, whether through inexperience, ignorance, or simply obsolete or forgotten rules. Our main idea is to compare the possible traffic (i.e. the traffic that can occur according to the defined rules) with the real traffic that occurred in an account. The figure above illustrates this idea.

First, all needed data is fetched from the AWS APIs (VPC, EC2, CloudWatch, Config) and imported into a database (1). For every flow in the database, we try to assign the corresponding Security Group(s). An analysis tool then processes this data (2). By comparing the flows belonging to a Security Group with the rules of that group, we can check whether a rule is too permissive or not needed at all. The algorithm performing this task is shown in figure 4.2. With this information, we can generate rule sets following the principle of least privilege, which are written to the database (3). These rules are then presented in a web interface (4) for review and application (5). If a suggested rule is applied, the change is made in the AWS account.

VPC Flow Logs

To get information about the traffic in an account, we use VPC Flow Logs. Flow Logs record the IP traffic entering or leaving every network interface within a VPC that has Flow Logs enabled. Each record summarizes one flow (packets sharing the same source, destination, ports and protocol) within a capture window, not a single packet. As Flow Logs are disabled by default, we first need to enable them. Then we have to wait a few days or weeks until enough logs have been generated to work with. The more logs we collect and the longer the timespan they cover, the more accurate our analysis becomes.

This is an example Flow Log record:

2 123456789010 eni-abc123de 172.168.1.12 19.22.5.77 20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK

The record shows traffic in the account 123456789010, on the interface eni-abc123de, sending 4249 bytes in 20 packets, from 172.168.1.12 port 20641 to 19.22.5.77 port 22. This is the information we work with.
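To make the field layout concrete, here is a minimal Python sketch that splits such a record into named fields. It assumes the default version 2 record format with its 14 space-separated fields; the names `FlowRecord` and `parse_flow_record` are ours and purely illustrative. Records with log status NODATA or SKIPDATA carry `-` in place of numeric values and are not handled here.

```python
from typing import NamedTuple


class FlowRecord(NamedTuple):
    """One version 2 VPC Flow Log record in the default field order."""
    version: int
    account_id: str
    interface_id: str
    srcaddr: str
    dstaddr: str
    srcport: int
    dstport: int
    protocol: int
    packets: int
    num_bytes: int
    start: int
    end: int
    action: str
    log_status: str


def parse_flow_record(line: str) -> FlowRecord:
    """Split a space-separated record and convert the numeric fields."""
    fields = line.split()
    if len(fields) != 14:
        raise ValueError(f"expected 14 fields, got {len(fields)}")
    (version, account_id, interface_id, srcaddr, dstaddr, srcport, dstport,
     protocol, packets, num_bytes, start, end, action, log_status) = fields
    return FlowRecord(int(version), account_id, interface_id, srcaddr, dstaddr,
                      int(srcport), int(dstport), int(protocol), int(packets),
                      int(num_bytes), int(start), int(end), action, log_status)


record = parse_flow_record(
    "2 123456789010 eni-abc123de 172.168.1.12 19.22.5.77 "
    "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK"
)
print(record.interface_id, record.srcaddr, record.dstport)
```

Protocol 6 in the example record is the IANA protocol number for TCP.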

An important limitation here is that we have no TCP flags or payload data. With this additional data, we could analyze the observed traffic in much more depth.

For later analysis, all records are imported into a database. A simplified example entry in our database looks like this:

eni-abc123de | 172.168.1.12 | 20641 | 19.22.5.77 | 22

To compare this data with the Security Group rules, we need to know which Flow Log record belongs to which Security Group. This association can be made using the interface-id available in every Flow Log record. For running instances, we can simply retrieve the interface-ids and the Security Groups an instance belongs to via the AWS APIs, and so connect each Flow Log record to its Security Groups.
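For interfaces that still exist, this lookup could be sketched as follows. The helper `groups_by_interface` works on a dictionary shaped like the response of the EC2 DescribeNetworkInterfaces API, so it can be tried without an AWS account. `fetch_groups_by_interface` shows how it might be fed from boto3; it assumes boto3 is installed and credentials are configured. Both function names are illustrative and not part of our tool.

```python
from typing import Dict, List


def groups_by_interface(response: dict) -> Dict[str, List[str]]:
    """Map each network interface ID to the IDs of its Security Groups.

    `response` is expected to look like one page of the EC2
    DescribeNetworkInterfaces response.
    """
    mapping = {}
    for eni in response.get("NetworkInterfaces", []):
        group_ids = [group["GroupId"] for group in eni.get("Groups", [])]
        mapping[eni["NetworkInterfaceId"]] = group_ids
    return mapping


def fetch_groups_by_interface() -> Dict[str, List[str]]:
    """Collect the mapping for all interfaces in the current region."""
    import boto3  # imported lazily so the helper above works without boto3

    ec2 = boto3.client("ec2")
    paginator = ec2.get_paginator("describe_network_interfaces")
    mapping = {}
    for page in paginator.paginate():
        mapping.update(groups_by_interface(page))
    return mapping


# Hand-written sample in the shape of the API response (not real data).
sample = {
    "NetworkInterfaces": [
        {
            "NetworkInterfaceId": "eni-abc123de",
            "Groups": [
                {"GroupId": "sg-321", "GroupName": "web"},
                {"GroupId": "sg-654", "GroupName": "ssh"},
            ],
        }
    ]
}
print(groups_by_interface(sample))
```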

AWS Config

In highly dynamic environments, however, we may find interface-ids belonging to instances that have already been terminated. In that case the AWS APIs no longer tell us which Security Groups were assigned to the instance, so we cannot enrich that Flow Log record with this information. This is where the AWS Config service comes in. After a brief setup, this service periodically takes snapshots of your whole infrastructure configuration and saves them to an S3 bucket. These JSON files contain all the information we need, even for terminated instances.
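Extracting the Security Groups of an interface from such a snapshot might look roughly like the sketch below. The key names (`configurationItems`, `resourceType`, `resourceId`, `configuration`, `groups`, `groupId`) are assumptions about the snapshot layout and should be checked against the files in your own bucket; the function name is illustrative. Snapshot files are typically stored gzip-compressed, so they would need to be decompressed first.

```python
import json
from typing import Dict, List


def groups_from_config_snapshot(snapshot_json: str) -> Dict[str, List[str]]:
    """Map interface IDs to Security Group IDs found in a Config snapshot.

    All key names used here are assumed, not taken from a specification.
    """
    snapshot = json.loads(snapshot_json)
    mapping = {}
    for item in snapshot.get("configurationItems", []):
        if item.get("resourceType") != "AWS::EC2::NetworkInterface":
            continue
        # "configuration" may be missing or null for some items.
        configuration = item.get("configuration") or {}
        groups = configuration.get("groups", [])
        mapping[item["resourceId"]] = [group["groupId"] for group in groups]
    return mapping


# Hand-written sample using the assumed layout (not real data).
sample_snapshot = json.dumps({
    "configurationItems": [
        {
            "resourceType": "AWS::EC2::NetworkInterface",
            "resourceId": "eni-abc123de",
            "configuration": {
                "groups": [{"groupId": "sg-321"}, {"groupId": "sg-654"}]
            },
        },
        {"resourceType": "AWS::EC2::Volume", "resourceId": "vol-1"},
    ]
})
print(groups_from_config_snapshot(sample_snapshot))
```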

After assigning the corresponding Security Groups, our simplified example entry looks like this:

eni-abc123de | 172.168.1.12 | 20641 | 19.22.5.77 | 22 | [sg-321, sg-654]

Analysis

With all needed data in our database, we can start analyzing. For every rule in every Security Group, we make a query against our database. Here are some examples.

No-Traffic Example

Imagine the following rule: sg-123: allow port 25, TCP, from 192.168.22.19/32

Our query for this looks like: select * from flowlogs where secgroup='sg-123' and port=25 and protocol=6 and from='192.168.22.19/32'

If this query returns 0 results/rows, we know that there was no traffic belonging to this rule. A review by a human should be considered to evaluate if this rule is really needed or should be removed.
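The same check can be sketched in Python. This is a simplified stand-in for the database query, not our actual implementation: `FLOWS` is a hypothetical in-memory list, its field names are illustrative, and we assume it already contains only accepted inbound flows. The source comparison uses the standard `ipaddress` module so that a rule's CIDR range matches individual source addresses.

```python
import ipaddress
from typing import Dict, List

# Hypothetical in-memory stand-in for the flow log table; the field names
# are illustrative and not the schema of the real database.
FLOWS = [
    {"secgroups": ["sg-123"], "src": "10.0.0.17", "port": 80, "protocol": 6},
    {"secgroups": ["sg-123"], "src": "10.0.0.200", "port": 80, "protocol": 6},
]


def flows_matching_rule(flows: List[Dict], secgroup: str, port: int,
                        protocol: int, cidr: str) -> List[Dict]:
    """Return the flows that an inbound rule of `secgroup` would have allowed."""
    network = ipaddress.ip_network(cidr)
    return [
        flow for flow in flows
        if secgroup in flow["secgroups"]
        and flow["port"] == port
        and flow["protocol"] == protocol
        and ipaddress.ip_address(flow["src"]) in network
    ]


# sg-123: allow port 25, TCP, from 192.168.22.19/32
matches = flows_matching_rule(FLOWS, "sg-123", 25, 6, "192.168.22.19/32")
if not matches:
    print("no traffic seen for this rule, flag it for human review")
```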

Wildcard Example

Another example for the following wildcard rule: sg-123: allow port 80, TCP, from 0.0.0.0/0

Our query for this looks like: select * from flowlogs where secgroup='sg-123' and port=80 and protocol=6

Now suppose we get some results. To check if this rule should really be a wildcard rule, we analyze the from field in the results. If there are more distinct sources than a configured threshold, the wildcard is considered acceptable. But if there are just a few sources, our system tries to suggest something stricter than a wildcard source, such as networks or even single addresses.
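A minimal sketch of this decision, assuming IPv4 sources only: the threshold value of 50 is an arbitrary placeholder, the function name is illustrative, and the fallback of suggesting one /32 entry per source is just the simplest possible strategy, not necessarily what a real system would propose.

```python
import ipaddress
from typing import Iterable, List


def suggest_sources(sources: Iterable[str], threshold: int = 50) -> List[str]:
    """Keep the wildcard for many distinct sources, else list each one.

    `threshold` is a placeholder value chosen for illustration.
    """
    distinct = sorted(set(sources), key=ipaddress.ip_address)
    if len(distinct) > threshold:
        return ["0.0.0.0/0"]
    return [f"{address}/32" for address in distinct]


# Three flows from only two distinct sources, well below the threshold.
seen = ["10.0.0.5", "10.0.0.5", "10.0.0.1"]
print(suggest_sources(seen))
```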

Smaller Source Example

Now we work with this rule: sg-123: allow port 80, TCP, from 10.0.0.0/8

Our query for this looks like: select * from flowlogs where secgroup='sg-123' and port=80 and protocol=6 and from='10.0.0.0/8'

If we get results, we analyze the from field as in the wildcard example. This time, we calculate the smallest possible network (in CIDR notation) that contains all these IP addresses. For example, if we only see addresses between 10.0.0.0 and 10.0.0.255 in the from field, we would suggest changing the rule to allow traffic only from 10.0.0.0/24 (256 addresses) instead of 10.0.0.0/8 (about 16.8 million addresses).
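One way to compute such a network is sketched below for IPv4, using only the standard `ipaddress` module. Since a CIDR block is a contiguous range, it is enough to look at the lowest and highest observed address: every bit from the highest bit in which they differ downwards has to become a host bit. The function name is illustrative.

```python
import ipaddress
from typing import Iterable


def smallest_common_network(addresses: Iterable[str]) -> ipaddress.IPv4Network:
    """Return the smallest IPv4 network containing all given addresses."""
    values = [int(ipaddress.IPv4Address(address)) for address in addresses]
    if not values:
        raise ValueError("need at least one address")
    lowest, highest = min(values), max(values)
    # Number of low-order bits that must be free to cover both extremes.
    host_bits = (lowest ^ highest).bit_length()
    prefix_length = 32 - host_bits
    # Clear the host bits to get the network address.
    base = (lowest >> host_bits) << host_bits
    return ipaddress.IPv4Network((base, prefix_length))


observed = ["10.0.0.3", "10.0.0.250", "10.0.0.77"]
print(smallest_common_network(observed))
```

Note that a single outlier address can widen the result considerably, so a real system would probably want to consider suggesting several smaller networks instead of one large one.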

Real-World Results

We tested this system on three different real-world datasets and got convincing results in each of them. After the operators of the respective AWS accounts reviewed the suggested rules, many of them were applied to the production environments.

Get early access

We are looking for AWS users willing to test our tool. Now is your chance to get a free data-driven review of your firewall configuration on AWS. Get in touch with us.

Further reading

- Article Your AWS Account is a mess? Learn how to fix it!

- Article DIY AWS Security Review

- Article 5 AWS mistakes you should avoid

- Article DevOps and Security #c9d9

- Article Event Driven Security Automation on AWS
