Prevent outages with redundant EC2 instances

Unfortunately, EC2 instances aren’t fault-tolerant. Under your virtual server is a host system. These are a few reasons your virtual server might suffer from a crash caused by the host system:

- If the host hardware fails, it can no longer host the virtual server on top of it.

- If the network connection to/from the host is interrupted, the virtual server loses the ability to communicate via network as well.

- If the host system is disconnected from a power supply, the virtual server also goes down.

But the software running on top of the virtual server may also cause a crash:

- If your software has a memory leak, you’ll run out of memory. It may take a day, a month, a year, or more, but eventually it will happen.

- If your software writes to disk and never deletes its data, you’ll run out of disk space sooner or later.

- Your application may not handle edge cases properly and instead just crashes.

Regardless of whether the host system or your software is the cause of a crash, a single EC2 instance is a single point of failure. If you rely on a single EC2 instance, your system will blow up: the only question is when.

Redundancy can remove a single point of failure

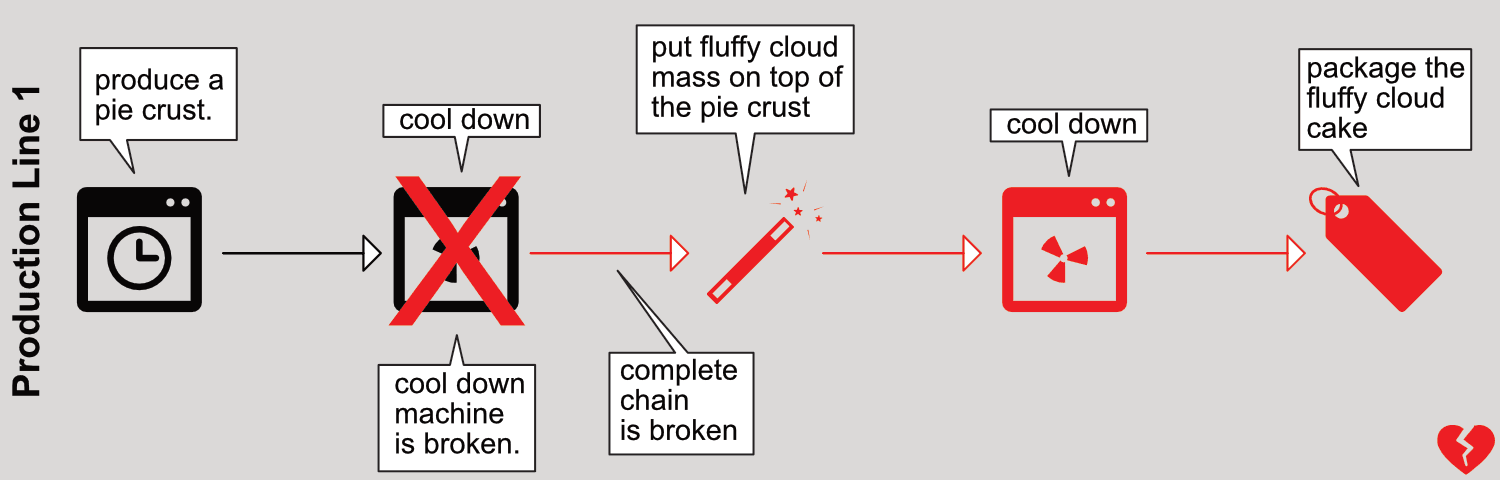

Imagine a production line that makes fluffy cloud pies. Producing a fluffy cloud pie requires several production steps (simplified!):

- Produce a pie crust.

- Cool down the pie crust.

- Put the fluffy cloud mass on top of the pie crust.

- Cool the fluffy cloud pie.

- Package the fluffy cloud pie.

The current setup is a single production line. The big problem with this setup is that whenever one of the steps crashes, the entire production line must be stopped. Figure 1 illustrates the problem when the second step (cooling the pie crust) crashes. The following steps stop working too, because they no longer receive cool pie crusts.

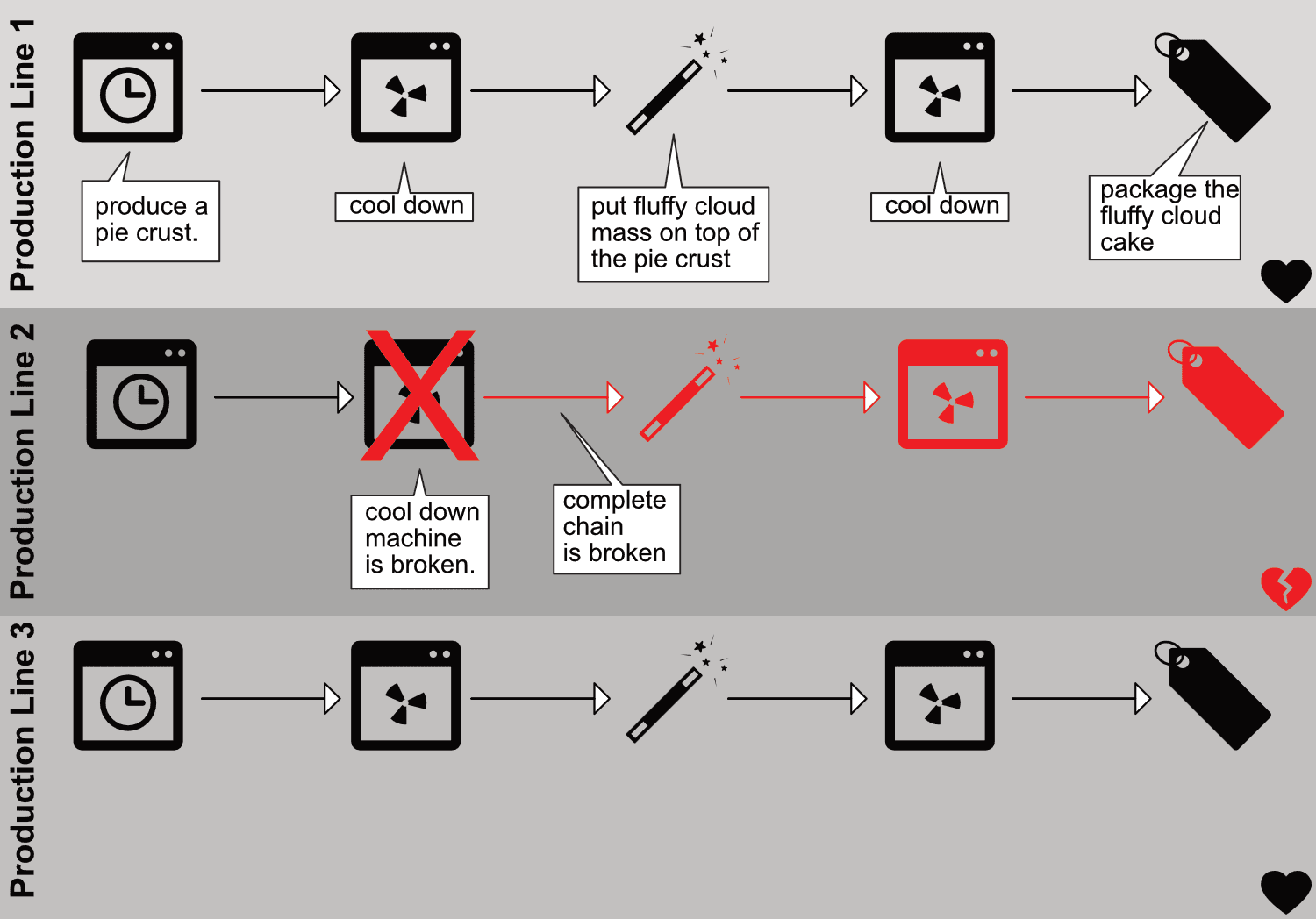

Why not have multiple production lines? Instead of one line, suppose we have three. If one of the lines fails, the other two can still produce fluffy cloud pies for all the hungry customers in the world. Figure 2 shows the improvements; the only downside is that we need three times as many machines.

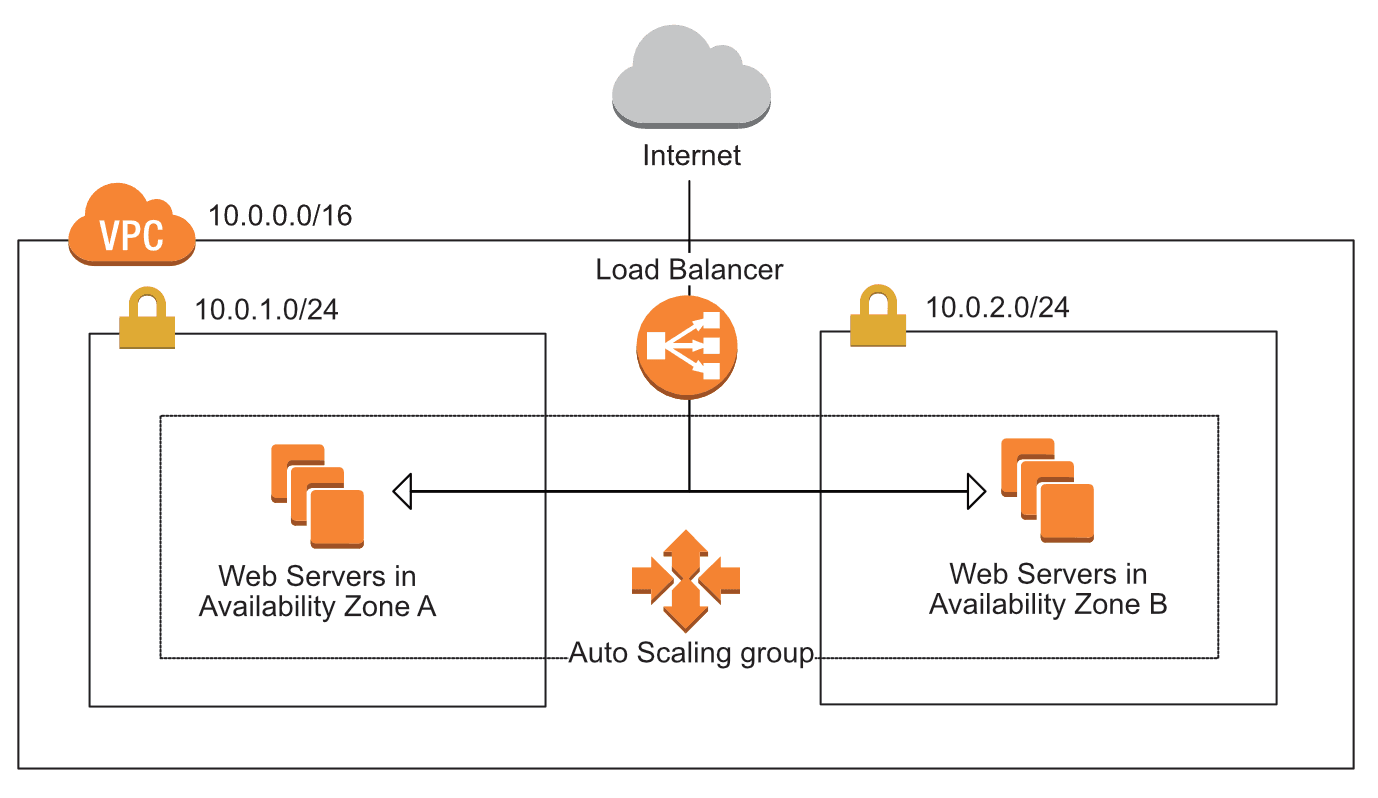

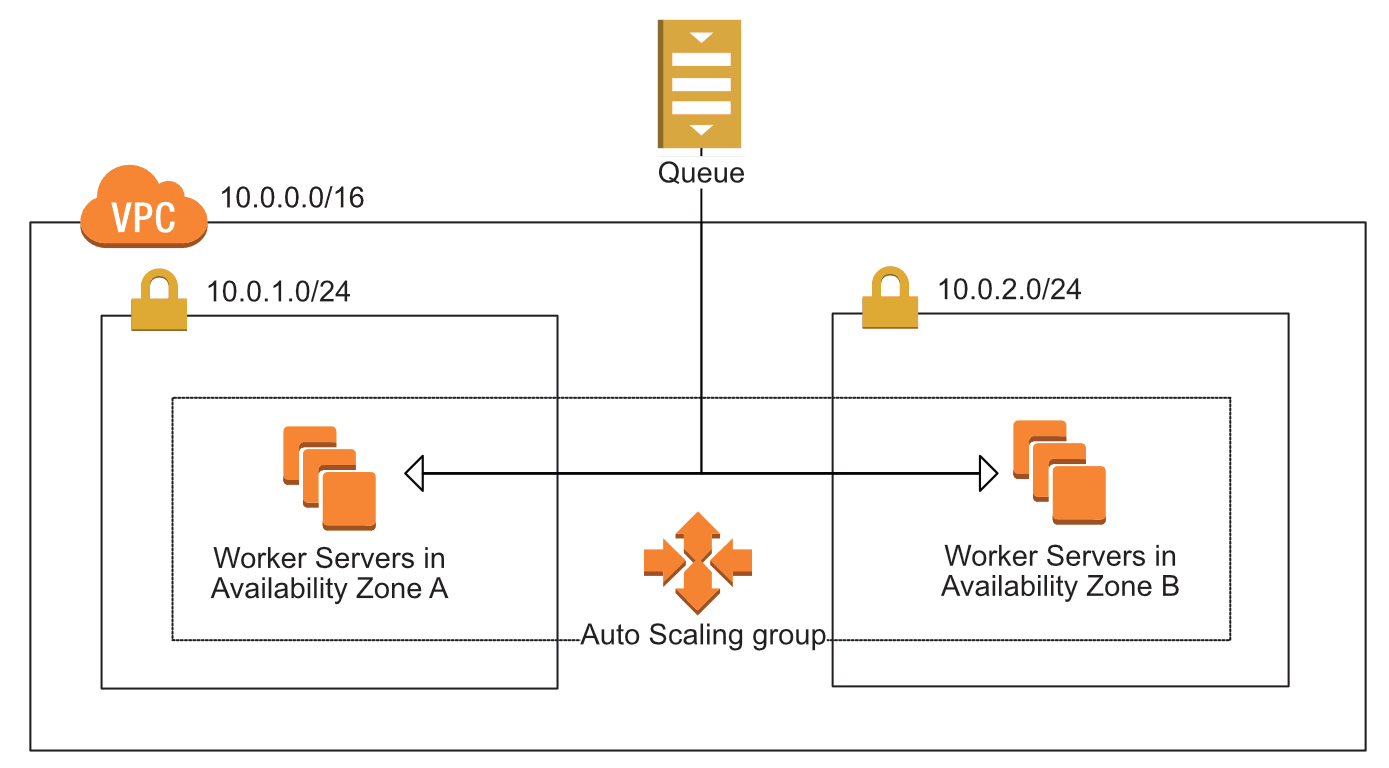

The example can be transferred to EC2 instances as well. Instead of having only one EC2 instance, you can have three of them running your software. If one of those instances crashes, the other two are still able to serve incoming requests. You can also minimize the cost impact of one versus three instances: instead of one large EC2 instance, you can choose three small ones. The problem that arises with a dynamic server pool is this: how can you communicate with the instances? The answer is decoupling: put a load balancer or a message queue between your EC2 instances and the requestor. Read on to learn how this works.

Redundancy requires decoupling

Figure 3 shows how EC2 instances can be made fault-tolerant by using redundancy and synchronous decoupling. If one of the EC2 instances crashes, ELB stops routing requests to the crashed instance. The auto-scaling group replaces the crashed EC2 instance within minutes, and ELB begins to route requests to the new instance.

Take a second look at figure 3 and see what parts are redundant:

- Availability zones—Two are used. If one AZ goes down, we still have EC2 instances running in the other AZ.

- Subnets—A subnet is tightly coupled to an AZ. Therefore we need one subnet in each AZ, and subnets are also redundant.

- EC2 instances—We have multi-redundancy for EC2 instances. We have multiple instances in a single subnet (AZ), and we have instances in two subnets (AZs).
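The failover behavior described for figure 3 can be sketched as a small simulation. This is a toy model under stated assumptions, not how ELB or an auto-scaling group is actually implemented: the class names `Instance`, `ToyLoadBalancer`, and `ToyAutoScalingGroup`, the instance names, and the round-robin routing are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Instance:
    name: str
    az: str               # availability zone the instance runs in
    healthy: bool = True


class ToyLoadBalancer:
    """Round-robin over healthy instances only (a toy stand-in for ELB)."""

    def __init__(self, instances):
        self.instances = list(instances)
        self._next = 0

    def route(self):
        # Crashed instances are skipped, so requests keep being served.
        healthy = [i for i in self.instances if i.healthy]
        if not healthy:
            raise RuntimeError("no healthy instance available")
        target = healthy[self._next % len(healthy)]
        self._next += 1
        return target.name


class ToyAutoScalingGroup:
    """Replaces crashed instances so the pool keeps its size (hypothetical)."""

    def __init__(self, balancer):
        self.balancer = balancer
        self._ids = count(1)

    def replace_unhealthy(self):
        for idx, inst in enumerate(self.balancer.instances):
            if not inst.healthy:
                # The replacement is launched in the same AZ as the crashed one.
                new_name = f"replacement-{next(self._ids)}"
                self.balancer.instances[idx] = Instance(new_name, inst.az)


if __name__ == "__main__":
    pool = [Instance("a-1", "az-a"), Instance("a-2", "az-a"),
            Instance("b-1", "az-b"), Instance("b-2", "az-b")]
    lb = ToyLoadBalancer(pool)
    asg = ToyAutoScalingGroup(lb)

    pool[1].healthy = False                 # simulate a crash of a-2
    print([lb.route() for _ in range(6)])   # a-2 receives no requests
    asg.replace_unhealthy()
    print([lb.route() for _ in range(8)])   # the replacement joins the rotation
```

The point of the sketch is that the requestor only ever talks to the load balancer, so instances can crash and be replaced without the requestor noticing.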

Figure 4 shows a fault-tolerant system built with EC2 that uses the power of redundancy and asynchronous decoupling to process messages from an SQS queue.
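Asynchronous decoupling can be sketched in the same spirit. The following is a minimal in-memory model, not the SQS API: `ToyQueue`, `run_worker`, and `expire_in_flight` are hypothetical names. It assumes one behavior that the text does not spell out, namely that a received message becomes visible again if the consumer never deletes it (SQS calls this the visibility timeout).

```python
from collections import deque


class ToyQueue:
    """A received message stays in flight until the consumer deletes it."""

    def __init__(self, messages):
        self._visible = deque(messages)
        self._in_flight = {}
        self._next_handle = 0

    def receive(self):
        if not self._visible:
            return None
        body = self._visible.popleft()
        handle = self._next_handle
        self._next_handle += 1
        self._in_flight[handle] = body
        return handle, body

    def delete(self, handle):
        # Called by a worker only after it finished processing the message.
        self._in_flight.pop(handle, None)

    def expire_in_flight(self):
        """Simulate the timeout: undeleted messages become visible again."""
        self._visible.extend(self._in_flight.values())
        self._in_flight.clear()


def run_worker(queue, crash_on=None):
    """Process messages until the queue is empty or the worker crashes."""
    done = []
    while (item := queue.receive()) is not None:
        handle, body = item
        if body == crash_on:
            return done  # simulated crash: the message is never deleted
        done.append(body)
        queue.delete(handle)
    return done


if __name__ == "__main__":
    queue = ToyQueue(["m1", "m2", "m3"])
    first = run_worker(queue, crash_on="m2")   # this worker crashes on m2
    queue.expire_in_flight()                   # m2 becomes visible again
    second = run_worker(queue)                 # another worker takes over
    print(first, second)
```

Because the producer only writes to the queue, it does not need to know which worker is alive; a message that a crashed worker left unfinished is picked up by another worker later.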

In both figures, the load balancer / SQS queue appears only once. This doesn’t mean ELB or SQS is a single point of failure; on the contrary, ELB and SQS are fault-tolerant by default.

Introducing redundancy doesn’t necessarily increase your costs. An m3.large instance with 2 CPUs and 7.5 GiB of RAM costs exactly the same as two m3.medium instances with 1 CPU and 3.75 GiB of RAM each. The only additional costs are created by the decoupling layer: ELB or SQS.
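The arithmetic behind this comparison can be checked with a short sketch. The CPU and RAM figures come from the text; the `relative_price` values are an assumption that simply encodes the text's claim (one m3.large costs as much as two m3.medium) and are not real AWS prices. The helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    cpus: int
    ram_gib: float
    relative_price: float  # assumption: in units of one m3.medium


M3_MEDIUM = InstanceType("m3.medium", 1, 3.75, 1.0)
M3_LARGE = InstanceType("m3.large", 2, 7.5, 2.0)


def fleet_totals(instance_type, count):
    """Total (CPUs, RAM in GiB, relative price) for `count` instances."""
    return (instance_type.cpus * count,
            instance_type.ram_gib * count,
            instance_type.relative_price * count)


def capacity_after_one_crash(count):
    """Fraction of the fleet's capacity left when one instance crashes."""
    return (count - 1) / count


if __name__ == "__main__":
    print(fleet_totals(M3_LARGE, 1))
    print(fleet_totals(M3_MEDIUM, 2))
    print(capacity_after_one_crash(1), capacity_after_one_crash(2))
```

Both fleets offer the same total capacity at the same relative price, but a single crash takes the one-instance fleet down completely, while the two-instance fleet keeps half of its capacity.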