Review: AWS App Mesh – A service mesh for EC2, ECS, and EKS

It seems like everyone is talking about service meshes these days - definitely a hot topic in the world of containers and microservices. A service mesh promises to reduce latency, increase observability, and simplify security within microservice architectures. AWS announced a preview of App Mesh in November 2018 and general availability in March 2019. So it is about time to take a closer look at App Mesh. As always, my review focuses on the technical details and points out pitfalls. There is a lot more to know about the service than what is written on the official marketing page or demonstrated by technical evangelists.


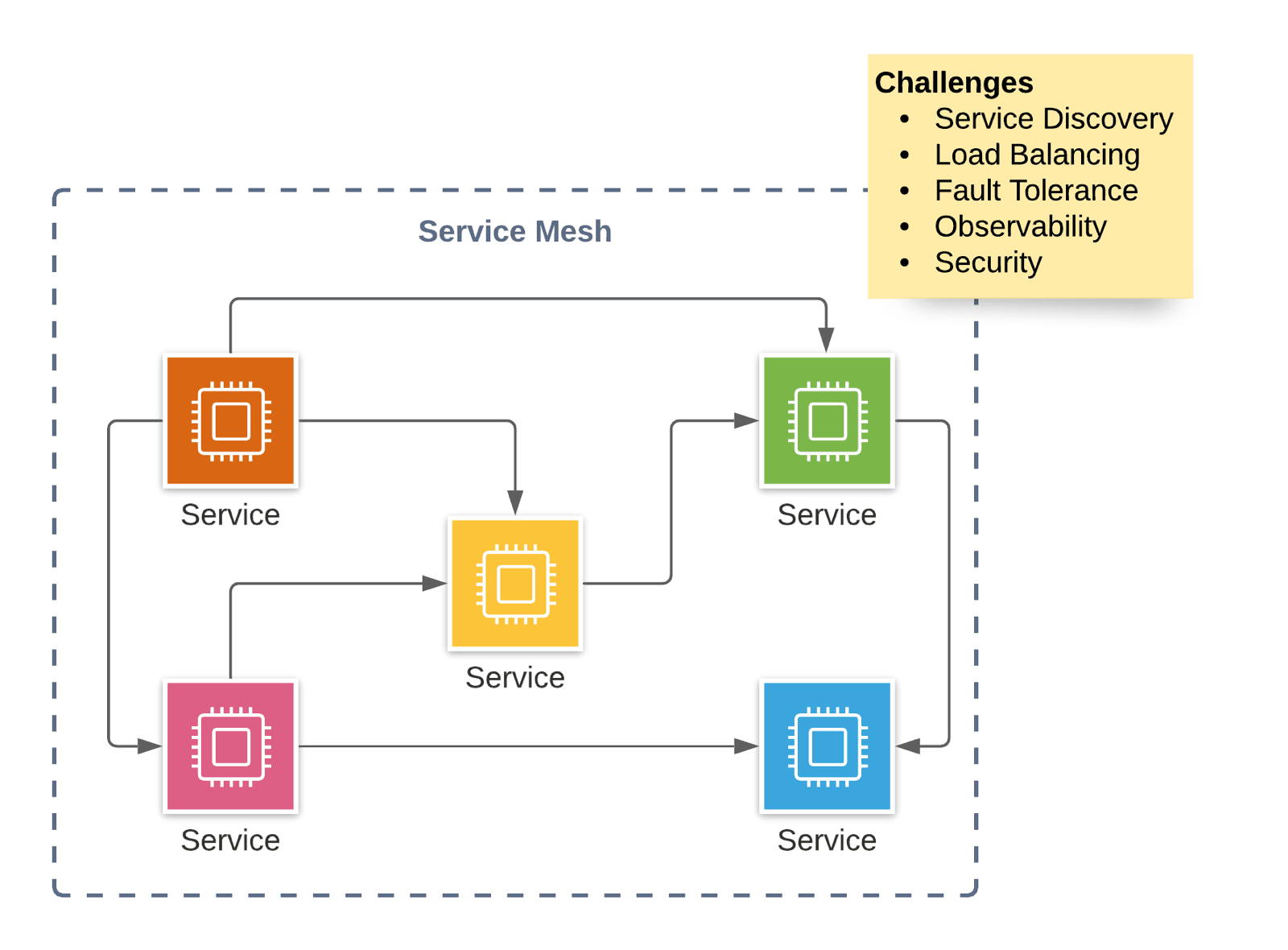

What is a service mesh?

The idea behind a microservice architecture is to split a big problem into many small chunks by building a distributed system. Typical challenges of a microservice architecture are:

- Service Discovery: service A needs to find out how to talk to service B

- Load Balancing: distribute requests among multiple instances of a service (e.g., various containers in a cluster)

- Fault Tolerance: detect failures (health checks, …) and mitigate problems (circuit breaker, …) automatically

- Zero-Downtime Deployments: avoid failures during a deployment of a service by using canary or blue-green deployment strategies

- Observability: track a request while traveling through the distributed system, monitor the health of each service, detect anomalies within metrics, analyze log messages

- Security: service-to-service authentication and authorization, encrypt data in transit

What is the origin of the term service mesh?

A mesh network […] is a local network topology in which the infrastructure nodes […] connect directly, dynamically, and non-hierarchically to as many other nodes as possible and cooperate with one another to efficiently route data from/to clients. […] Mesh networks dynamically self-organize and self-configure, which can reduce installation overhead. The ability to self-configure enables dynamic distribution of workloads, particularly in the event a few nodes should fail. This in turn contributes to fault-tolerance and reduced maintenance costs. (Source: Wikipedia)

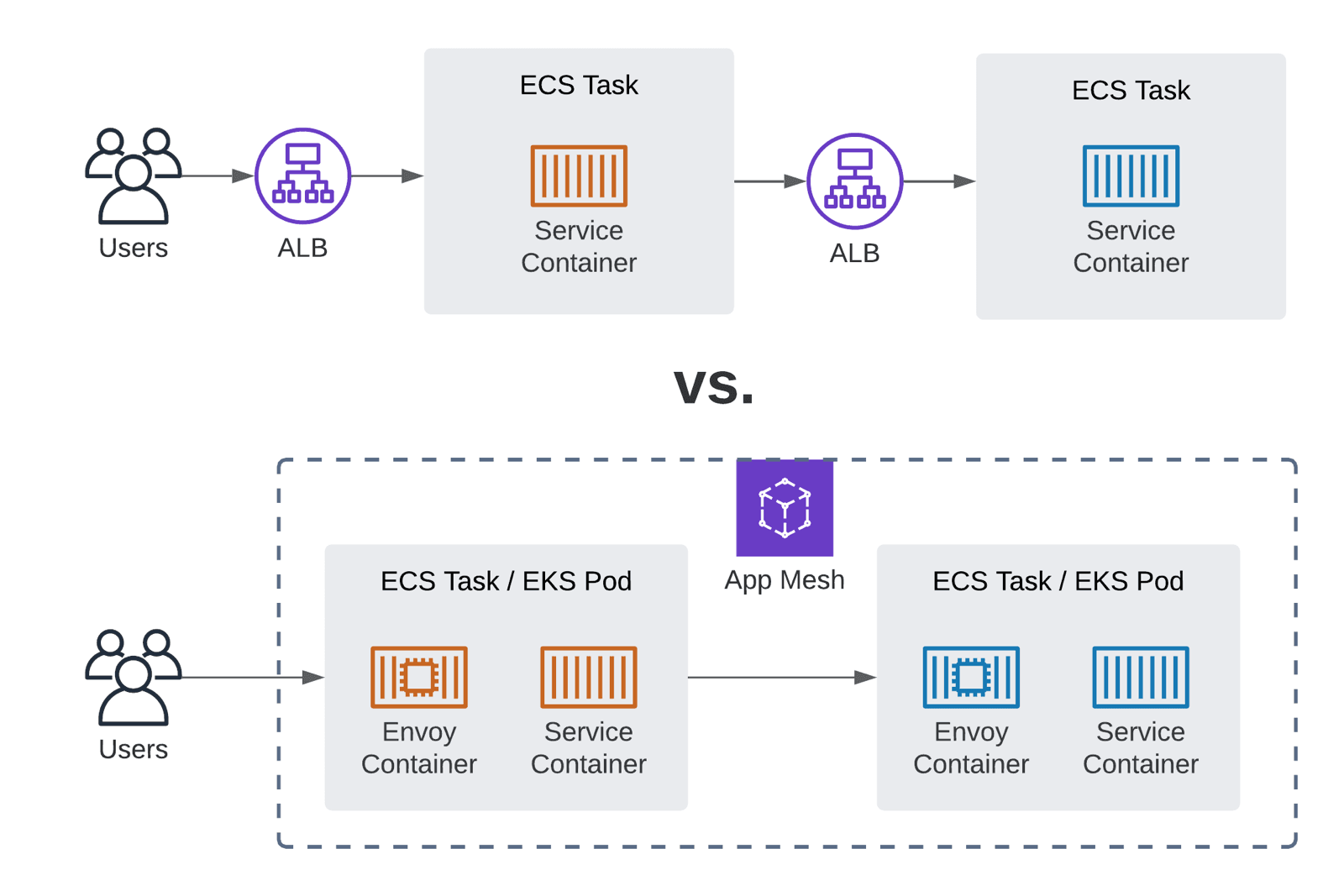

A service mesh translates the idea of a mesh network to a microservice architecture. Services connect directly and route requests dynamically to balance the load and mitigate failure. There is no need for a central authority to orchestrate the service-to-service communication. For example, there is no need for a load balancer (ALB or NLB).
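To make the idea concrete, here is a minimal sketch of what a mesh proxy does on behalf of a service: it rotates through the known instances and skips the ones that fail. This is a toy model under my own assumptions, not how Envoy is implemented. `MeshClient`, `send`, and the instance addresses are hypothetical names used for illustration only.

```python
from typing import Callable, Sequence


class NoHealthyInstance(Exception):
    """Raised when every known instance of a service failed."""


class MeshClient:
    """Toy client-side load balancer: round-robin with failover."""

    def __init__(self, instances: Sequence[str], send: Callable[[str, str], str]):
        self._instances = list(instances)
        self._send = send  # hypothetical transport: send(address, payload)
        self._next = 0     # index of the instance to try next

    def request(self, payload: str) -> str:
        count = len(self._instances)
        # Try each instance at most once per request.
        for _ in range(count):
            target = self._instances[self._next % count]
            self._next += 1
            try:
                return self._send(target, payload)
            except ConnectionError:
                continue  # instance is down, move on to the next one
        raise NoHealthyInstance("all instances failed")


def fake_send(address: str, payload: str) -> str:
    """Stand-in transport in which instance 'b' is always down."""
    if address == "b":
        raise ConnectionError(address)
    return f"{address} handled {payload}"


if __name__ == "__main__":
    client = MeshClient(["a", "b", "c"], fake_send)
    for i in range(4):
        print(client.request(f"req-{i}"))
```

No load balancer sits between caller and callee here: the routing decision is made next to the calling service, which is the part a sidecar proxy takes over.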

What is AWS App Mesh?

Use App Mesh to orchestrate a service mesh with compute nodes on ECS, EKS, and EC2. AWS promises to solve all the challenges of a microservice architecture listed above with App Mesh.

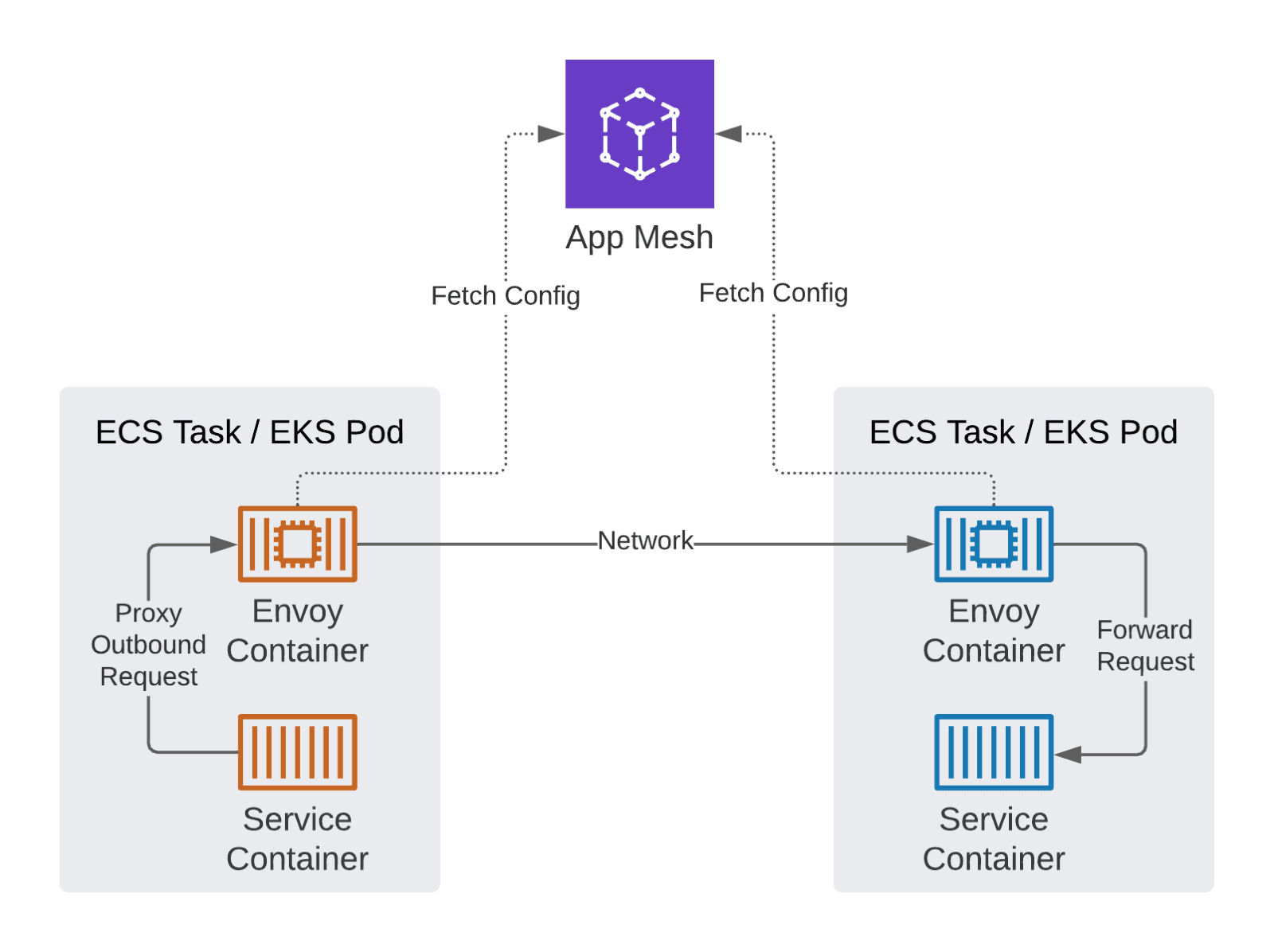

But how does App Mesh work? First of all, App Mesh utilizes Envoy, an L7 proxy and communication bus designed for large modern service-oriented architectures [1]. The idea behind Envoy is that all the logic for service-to-service communication is deployed as a sidecar container, so the services do not need to implement that functionality themselves. All App Mesh does is provide the Envoy configuration. On top of that, App Mesh integrates with many AWS services like Cloud Map, Certificate Manager, CloudWatch, and X-Ray.

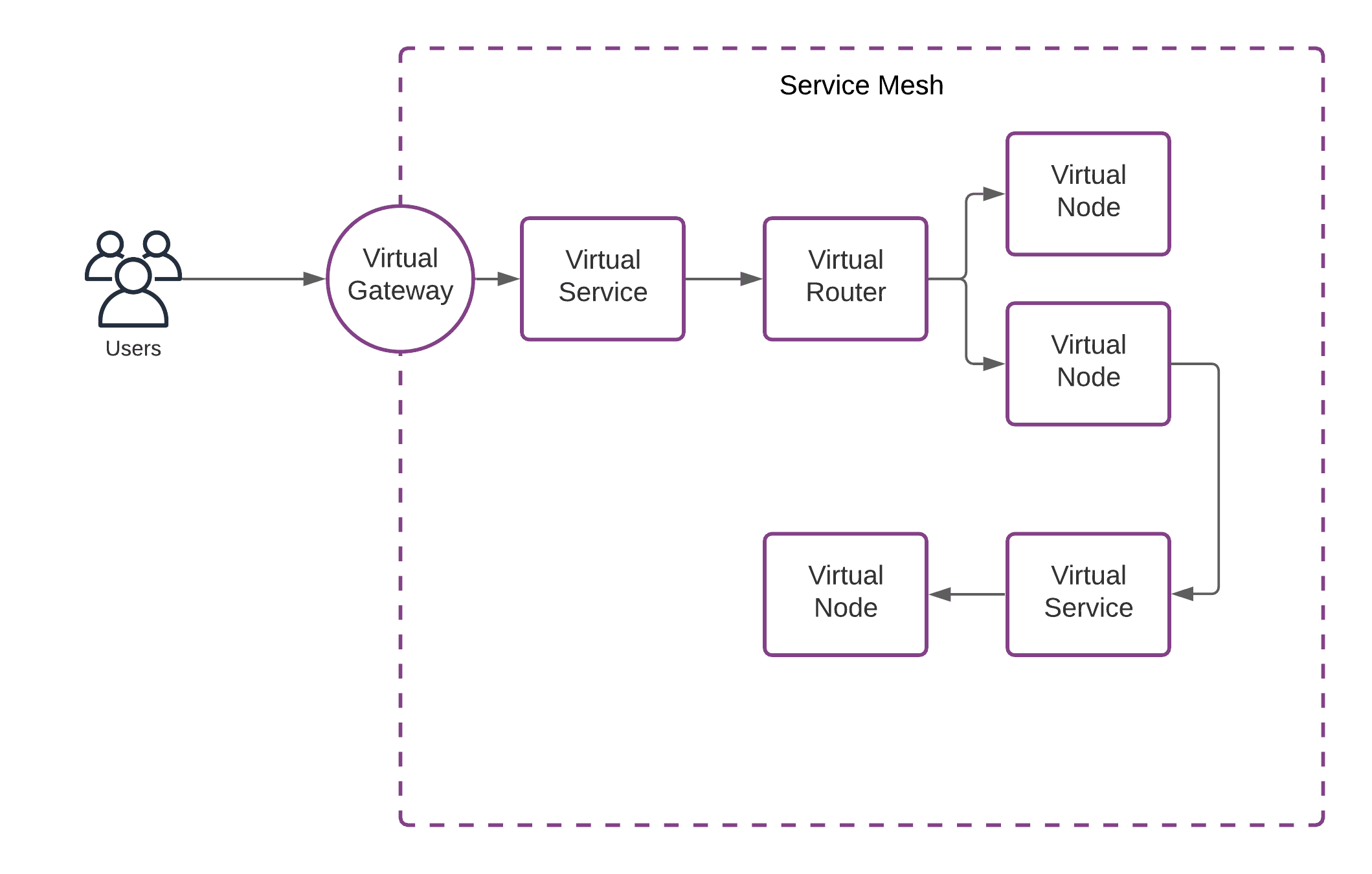

Let me introduce the basic concepts of App Mesh to you.

- The Service Mesh is the logical boundary of your microservice environment.

- A Virtual Service is the abstract representation of a service.

- A Virtual Router optionally routes requests of a service to different nodes depending on the request details (e.g., path).

- A Virtual Node is the target for requests/connections of a service and a pointer to an ECS or EKS service, for example.

- A Virtual Gateway - an ingress gateway - allows resources from outside the mesh to connect to a service.
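How these concepts relate to each other can be sketched with a small in-memory model. It is a simplification for illustration only: the class names mirror the App Mesh concepts, but the fields, the first-match prefix routing, and the example names such as `backend.local` are my own assumptions and not the App Mesh API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple, Union


@dataclass
class VirtualNode:
    name: str
    target: str  # hypothetical pointer to an ECS or EKS service


@dataclass
class VirtualRouter:
    # Ordered (path prefix, node) pairs; the first matching prefix wins
    # in this toy model.
    routes: List[Tuple[str, VirtualNode]]

    def resolve(self, path: str) -> Optional[VirtualNode]:
        for prefix, node in self.routes:
            if path.startswith(prefix):
                return node
        return None


@dataclass
class VirtualService:
    name: str
    # A service is backed either by a single node or by a router.
    provider: Union[VirtualNode, VirtualRouter]

    def resolve(self, path: str) -> Optional[VirtualNode]:
        if isinstance(self.provider, VirtualRouter):
            return self.provider.resolve(path)
        return self.provider


@dataclass
class Mesh:
    name: str
    services: Dict[str, VirtualService] = field(default_factory=dict)

    def add(self, service: VirtualService) -> None:
        self.services[service.name] = service

    def route(self, service_name: str, path: str) -> Optional[VirtualNode]:
        return self.services[service_name].resolve(path)


if __name__ == "__main__":
    v1 = VirtualNode("backend-v1", "ecs-service/backend-v1")
    v2 = VirtualNode("backend-v2", "ecs-service/backend-v2")
    mesh = Mesh("demo")
    mesh.add(VirtualService("backend.local", VirtualRouter([("/v2", v2), ("/", v1)])))
    print(mesh.route("backend.local", "/v2/items"))
    print(mesh.route("backend.local", "/items"))
```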

By the way, popular alternatives to AWS App Mesh for Kubernetes are Consul, Istio, and Linkerd.

App Mesh for ECS: Walkthrough

I want to walk you through what is needed to get App Mesh up and running on ECS.

- VPC: Although App Mesh is about application-level networking, you still need to configure subnets, route tables, and security groups under the hood.

- ECS: Obviously, you need to create an ECS cluster and services.

- Cloud Map: App Mesh requires Cloud Map for service discovery. Therefore, you need to configure a namespace and a service.

- Certificate Manager: Optionally, you create a private certificate authority to issue certificates for encrypting data in transit.

- App Mesh: On top of that, you need to configure App Mesh itself. The required resources are mesh, virtual service, and virtual node.

In addition, you need to add three sidecar containers to the task definition of every ECS service:

- Envoy Proxy

- CloudWatch Agent

- X-Ray Daemon
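The following sketch shows roughly what adding the three sidecars to a task definition amounts to, expressed as a Python dictionary in the shape ECS expects. Treat it as an outline under assumptions, not a tested deployment: the helper `with_mesh_sidecars` is hypothetical, the Envoy image must be supplied by you, and the environment variables, ports, and proxy properties are the values I remember from the AWS documentation, so please verify them against the current docs.

```python
from typing import Any, Dict


def with_mesh_sidecars(
    app_container: Dict[str, Any],
    app_port: int,
    mesh: str,
    virtual_node: str,
    envoy_image: str,
) -> Dict[str, Any]:
    """Return a task-definition-like dict: the app plus three sidecars."""
    envoy = {
        "name": "envoy",
        "image": envoy_image,  # placeholder, look up the current image URI
        "essential": True,
        "user": "1337",  # must match IgnoredUID below
        "environment": [
            # Assumption: older Envoy images read this variable,
            # newer ones expect APPMESH_RESOURCE_ARN instead.
            {"name": "APPMESH_VIRTUAL_NODE_NAME",
             "value": f"mesh/{mesh}/virtualNode/{virtual_node}"},
            {"name": "ENABLE_ENVOY_XRAY_TRACING", "value": "1"},
        ],
    }
    xray = {
        "name": "xray-daemon",
        "image": "amazon/aws-xray-daemon",
        "essential": True,
        "portMappings": [{"containerPort": 2000, "protocol": "udp"}],
    }
    cloudwatch = {
        "name": "cloudwatch-agent",
        "image": "amazon/cloudwatch-agent",
        "essential": True,
    }
    return {
        "networkMode": "awsvpc",
        "containerDefinitions": [app_container, envoy, xray, cloudwatch],
        # Tells ECS to redirect the app's traffic through the Envoy container.
        "proxyConfiguration": {
            "type": "APPMESH",
            "containerName": "envoy",
            "properties": [
                {"name": "AppPorts", "value": str(app_port)},
                {"name": "IgnoredUID", "value": "1337"},
                {"name": "ProxyIngressPort", "value": "15000"},
                {"name": "ProxyEgressPort", "value": "15001"},
                {"name": "EgressIgnoredIPs",
                 "value": "169.254.170.2,169.254.169.254"},
            ],
        },
    }


if __name__ == "__main__":
    app = {"name": "backend", "image": "example/backend:latest", "essential": True}
    task = with_mesh_sidecars(app, 8080, "demo", "backend", "<envoy-image-uri>")
    print([c["name"] for c in task["containerDefinitions"]])
```

Even in this condensed form, a one-container service turns into a four-container task, which is the operational overhead discussed in the next section.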

Over the years, I have familiarized myself with many new AWS services. Still, building my App Mesh example took me a lot of time. In my opinion, App Mesh is complicated to use: many building blocks must be put together correctly. Unfortunately, the official documentation is not very helpful either.

Fully managed?

A fundamental problem with App Mesh, in my opinion, is that it is not a service fully managed by AWS. For example, you need to deploy one to three sidecar containers for each service to your infrastructure (ECS, EKS, or EC2). You are responsible for detecting and fixing problems with these sidecar containers on your own. For example, a sidecar container could run out of memory.

The line between the part of App Mesh that is fully managed by AWS and the part that we, as customers, have to run ourselves is not 100% clear. This makes troubleshooting difficult. Cloud services that are not fully encapsulated behind an API are problematic, in my opinion.

In theory, App Mesh could evolve into a fully managed service in the future. For example, I can imagine AWS deploying the sidecar containers transparently when launching a task or pod on Fargate.

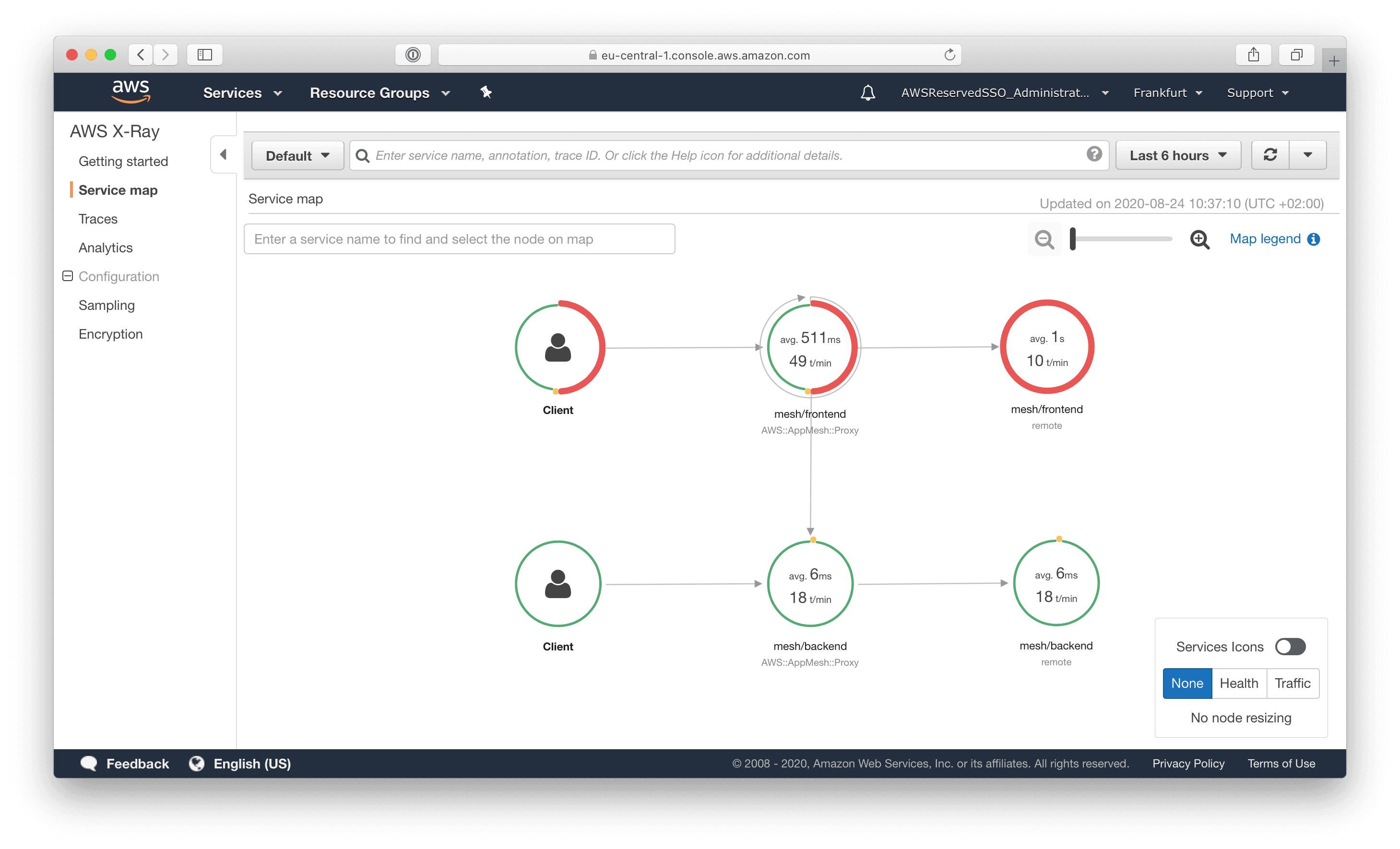

Observability

App Mesh promises end-to-end visibility [2] for microservices. To that end, App Mesh provides metrics, logs, and traces, and integrates with CloudWatch and X-Ray:

- CloudWatch Logs to collect and analyze incoming and outgoing requests or connections.

- CloudWatch Metrics to gain insights into incoming and outgoing requests or connections.

- X-Ray to record and analyze traces.

It is worth mentioning that App Mesh does not provide any observability insights out of the box. You have to deploy additional sidecar containers: a CloudWatch Agent to send metrics to CloudWatch and the X-Ray daemon to record traces.

The integration with X-Ray looks fine. After deploying the sidecar container and enabling tracing, data was showing up quickly.

After enabling logging for the App Mesh nodes, the request logs were shipped to CloudWatch Logs. Nothing noteworthy there.

After activating CloudWatch metrics, I experienced an unpleasant surprise. My App Mesh example consists of two services (frontend and backend). However, enabling CloudWatch metrics for Envoy resulted in more than 500 CloudWatch metrics. Because App Mesh uses so-called custom metrics, that's quite expensive. A single custom metric is $0.30 per month, so I have to pay more than $150 per month for the CloudWatch metrics. On top of that, I could not find any way to reduce the number of metrics sent to CloudWatch. So the whole feature is useless, in my opinion.
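The arithmetic behind that number is simple enough to check in a few lines. The price and the metric count are the figures quoted above; the function name is just for illustration.

```python
from decimal import Decimal

# Price per custom metric per month, as quoted in this review.
PRICE_PER_CUSTOM_METRIC = Decimal("0.30")


def monthly_metric_cost(metric_count: int,
                        price: Decimal = PRICE_PER_CUSTOM_METRIC) -> Decimal:
    """Monthly CloudWatch cost in USD for a number of custom metrics."""
    return metric_count * price


if __name__ == "__main__":
    total = monthly_metric_cost(500)
    print(f"500 metrics: ${total} per month")
    print(f"per service (2 services): ${total / 2} per month")
```

Since I observed more than 500 metrics, $150 is a lower bound for a mesh with only two services.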

Using Prometheus is a possible workaround. However, I'm not a big fan of operating infrastructure myself when it does not provide business value.

Encrypt Data-In-Transit

App Mesh integrates with the AWS Certificate Manager (ACM) to provide certificates that establish trust for the encrypted connections within the mesh. App Mesh automatically rotates and updates certificates issued by ACM. Great stuff!

But there is also a catch. We are all used to the fact that certificates are free when used with an ALB or NLB. However, App Mesh uses a Private CA provided by the AWS Certificate Manager. A Private CA is $400 per month, which is probably out of budget for most scenarios.

The only workaround is to provide and deploy a certificate on your own. Not an option, in my opinion.

Deployment Orchestration

Within a service mesh, it is possible to control traffic between services in a granular way. That enables exciting opportunities during the deployment of a service. For example, you could send only a small portion of traffic to the latest version of a service to test if everything works fine in production. After a while, you could then switch all traffic to the newest version. This process is known as a canary deployment.
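The traffic shifting described above boils down to weighted routing with weights that change over time. App Mesh routes express this with weighted targets; the sketch below only simulates the principle in plain Python. The names `canary_steps` and `pick_version`, the version labels, and the step size are assumptions made for illustration.

```python
import random
from typing import Dict, List


def canary_steps(step: int = 10) -> List[Dict[str, int]]:
    """Weight pairs that shift traffic from 'stable' to 'canary'."""
    if step <= 0 or 100 % step != 0:
        raise ValueError("step must be a positive divisor of 100")
    return [
        {"stable": 100 - canary, "canary": canary}
        for canary in range(step, 101, step)
    ]


def pick_version(weights: Dict[str, int], rng: random.Random) -> str:
    """Choose the version for one request according to the weights."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


if __name__ == "__main__":
    rng = random.Random(42)
    for weights in canary_steps(step=25):
        sample = [pick_version(weights, rng) for _ in range(1000)]
        print(weights, "-> canary requests:", sample.count("canary"))
```

A deployment tool would apply one step, watch error rates and latency for a while, and then either move on to the next step or roll back to the stable version.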

App Mesh enables canary deployments and all other kinds of advanced deployment techniques. However, you need another tool for that. None of the AWS services are capable of orchestrating a deployment that leverages the features provided by App Mesh. We are missing integrations between App Mesh and CodePipeline, CodeDeploy, and CloudFormation.

You are lucky when using EKS because Flagger - a progressive delivery tool implementing canary releases, A/B testing, blue/green mirroring, and more - supports App Mesh.

Missing Features

Some essential features are missing.

- Service-to-Service authentication and authorization is not supported yet.

- Rate limiting to protect services from traffic spikes is not available yet.

- Currently, there is no way to configure the options for the circuit breaker.

Pricing

Using App Mesh is free of charge. That's great! You are paying only for the underlying infrastructure: EC2, Fargate, EKS, and so on. On the one hand, a free service is good news. On the other hand, I'm always skeptical about whether free services find enough support within Amazon to drive further development.

App Mesh vs. ELB

The concept of a service mesh is en vogue. Without a doubt, a service mesh is a promising approach. However, there is also a reliable alternative: good old load balancers. Therefore, I want to compare App Mesh with Elastic Load Balancing (ELB). Probably the most crucial difference is that App Mesh reduces the number of network hops.

Let’s have a look at the differences in detail. I’m comparing App Mesh, ALB (Application Load Balancer), and NLB (Network Load Balancer) here.

| | App Mesh | ALB | NLB |
|---|---|---|---|
| Observability | ✅ inbound + outbound requests, requires additional sidecar containers | ⚠️ inbound requests only | ❌ very little insight |
| High Availability | ✅ health checks, cross-zone routing, ... | ✅ health checks, cross-zone routing, ... | ✅ health checks, cross-zone routing, ... |
| Fault Tolerance | ✅ retries, circuit breaker, ... | ⚠️ client-side code needed | ⚠️ client-side code needed |
| Compatibility | ✅ EC2, ECS, EKS, Fargate | ✅ EC2, ECS, EKS, Fargate, On-Premises | ✅ EC2, ECS, EKS, On-Premises |
| Latency | ✅ 0 additional network hops, 2 additional request processing steps | ⚠️ 1 additional network hop, 1 additional request processing step | ✅ 0 additional network hops, 0 additional request processing steps |
| Resource Efficiency | ⚠️ 1-3 sidecar containers per task/pod | ✅ | ✅ |
| Fully Managed | ⚠️ only parts of the service | ✅ | ✅ |
| Costs | ⚠️ free of charge, but costs for CPU and memory of sidecar containers | ⚠️ hourly fee, traffic, ... | ⚠️ hourly fee, traffic, ... |
| Complexity | ⚠️ additional layer of abstraction | ✅ simple | ✅ simple |

In summary, App Mesh comes with a few advantages compared to an ALB or NLB. However, adding X-Ray or any other application monitoring solution to each service also provides deep insights into the microservice architecture, without a mesh. Also, there are libraries for every programming language to retrofit retry strategies and circuit breakers.
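To give an impression of how little code such a retrofit needs, here is a minimal circuit breaker. It is a sketch under my own assumptions (consecutive-failure threshold, fixed cool-down, a single trial call after the cool-down) and not the behavior of Envoy or of any particular library; `CircuitBreaker` and `CircuitOpenError` are hypothetical names.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying it."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, func: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open, failing fast")
            # Cool-down elapsed: allow one trial call. A single failure
            # re-opens the circuit immediately.
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # a success closes the circuit again
        return result
```

Wrapping an outgoing call then looks like `breaker.call(lambda: fetch_backend())`, where `fetch_backend` stands for whatever client call the service already makes.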

In my opinion, the additional layer of abstraction and complexity introduced by App Mesh is not worth the advantages in most scenarios.

Service Maturity Table

The following table summarizes the maturity of the service:

| Criteria | Support | Score |
|---|---|---|
| Feature Completeness | ✅ | 4 |
| Documentation Detailedness | ✅ | 4 |
| Tags (Grouping + Billing) | ✅ | 10 |
| CloudFormation + Terraform support | ✅ | 9 |
| Emits CloudWatch Events | ❌ [3] | 0 |
| IAM granularity | ✅ | 9 |
| Integrated with AWS Config | ❌ [4] | 0 |
| Auditing via AWS CloudTrail | ✅ [5] | 8 |
| Available in all commercial regions | ⚠️ | 8 |
| SLA | ❌ | 0 |
| Compliance (ISO, SOC, HIPAA) | ❌ [6] [7] | 2 |
| Total Maturity Score (0-10) | ⚠️ | 4.9 |

Our maturity score for AWS App Mesh is 4.9 on a scale from 0 to 10.
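The total row of the table can be reproduced by averaging the eleven individual scores. That the total is a plain unweighted mean is my assumption; it matches the 4.9 shown in the table.

```python
from typing import Dict

# Individual scores copied from the maturity table above.
SCORES: Dict[str, int] = {
    "Feature Completeness": 4,
    "Documentation Detailedness": 4,
    "Tags (Grouping + Billing)": 10,
    "CloudFormation + Terraform support": 9,
    "Emits CloudWatch Events": 0,
    "IAM granularity": 9,
    "Integrated with AWS Config": 0,
    "Auditing via AWS CloudTrail": 8,
    "Available in all commercial regions": 8,
    "SLA": 0,
    "Compliance (ISO, SOC, HIPAA)": 2,
}


def maturity_score(scores: Dict[str, int]) -> float:
    """Unweighted mean of all criteria, rounded to one decimal place."""
    return round(sum(scores.values()) / len(scores), 1)


if __name__ == "__main__":
    print(maturity_score(SCORES))
```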

Summary

Building a service mesh is trending these days. App Mesh provides service mesh capabilities for EC2, ECS, and EKS. For free! On top of that, App Mesh integrates with a bunch of AWS services like Cloud Map, Certificate Manager, CloudWatch, and X-Ray. App Mesh is a new service, still at the very beginning. Our service maturity score of 4.9 indicates that it is too early to use App Mesh right now. Let's wait for AWS to improve the service step by step based on other AWS customers' feedback.

The fundamental problem is that App Mesh is not a fully managed service. As an App Mesh customer, you need to deploy and operate 1-3 sidecar containers per task (a.k.a. pod). This contradicts the goal of having the cloud provider take over as many tasks as possible.

It is frustrating that activating CloudWatch metrics incurs costs of more than $150 per month for a mesh consisting of two services. Also, $400 per month for a private CA provided by ACM will probably be a show stopper for most scenarios.

Overall, App Mesh is only for service mesh enthusiasts.

1. https://www.envoyproxy.io/docs/envoy/latest/intro/what_is_envoy

2. https://aws.amazon.com/app-mesh/

3. https://docs.aws.amazon.com/AmazonCloudWatch/latest/events/EventTypes.html

4. https://docs.aws.amazon.com/config/latest/developerguide/resource-config-reference.html

5. https://docs.aws.amazon.com/app-mesh/latest/userguide/logging-using-cloudtrail.html

6. https://aws.amazon.com/compliance/services-in-scope/

7. https://aws.amazon.com/about-aws/whats-new/2020/07/aws-app-mesh-achieves-hipaa-eligibility/