Messaging on AWS

Previously, I compared all database options offered by AWS for you. In this post, I compare the available messaging options. The goal of messaging on AWS is to decouple the producers of messages from consumers.

The messaging pattern allows us to process the messages asynchronously. This has several advantages. You can roll out a new version of consumers of messages while the producers can continue to send new messages at full speed. You can also scale the consumers independently from the producers. You get a buffer in your system that can absorb spikes without overloading it.


In this blog post, I introduce all the messaging options that AWS offers and end with a table comparing them.

This is a cross-post from the Cloudcraft blog.

Amazon SQS Standard

Amazon Simple Queue Service (SQS) is a fully managed service. There is zero operational overhead, and you pay per message.

SQS comes in two flavors: Standard queues and FIFO queues. I’ll focus on standard queues in this section. The next section covers FIFO queues.

SQS standard queues are the most convenient option you can dream of. You can send nearly unlimited messages and read them at any rate you wish. A message is stored in the queue for up to 14 days. Once you read and delete the message from the queue, it will disappear. Usually, one message is received by one consumer only.

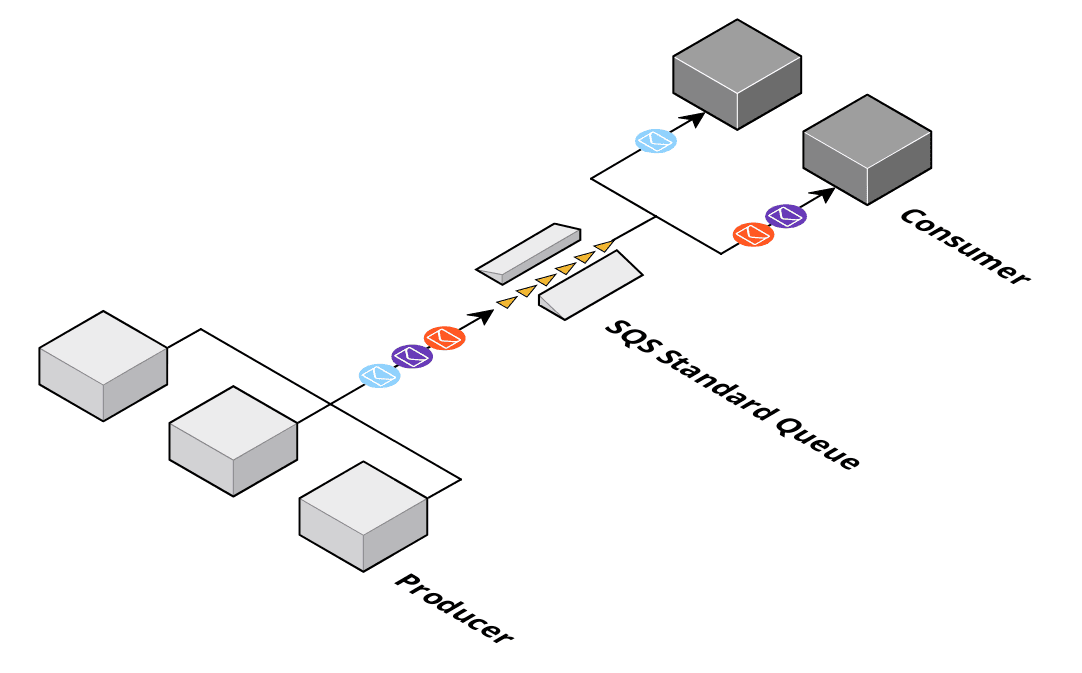

The following figure shows a typical SQS scenario. I’ve created the cloud diagram with Cloudcraft.

Two limitations might surprise you. First, SQS does not preserve the order of messages. It tries to, but there are no guarantees about message ordering. There is nothing you can do about it, so if you rely on order, SQS standard queues may not be for you.

Limitation number two: a message may be delivered more than once (at-least-once delivery). There are multiple reasons for that. A producer may send a message and receive an error (e.g., a network timeout). The producer has no way to know whether the message was stored or not. If it retries, the message could end up in the queue twice.

Consumers can also fail. When a consumer receives a message from SQS, it has a limited amount of time (the visibility timeout) to delete the message from the queue to acknowledge that it was processed successfully. If that acknowledgment comes too late, or not at all (e.g., because of a network error), the message becomes visible to consumers again.

Last but not least, the SQS service itself can also cause a message to be delivered more than once. The best way to deal with at-least-once semantics is an idempotent consumer: processing the same message twice has the same effect as processing it once.
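A minimal sketch of an idempotent consumer, assuming every message carries a unique ID set by the producer (the hypothetical `message_id` field) and that the set of processed IDs fits in memory. In a real system you would keep that record in durable storage, ideally updated in the same transaction as the work itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    message_id: str  # hypothetical: a unique ID assigned by the producer
    body: str


class IdempotentConsumer:
    """Skips messages whose ID has already been processed."""

    def __init__(self) -> None:
        self.processed_ids: set[str] = set()  # would be durable storage in production
        self.results: list[str] = []

    def handle(self, message: Message) -> bool:
        """Return True if the message was processed, False if it was a duplicate."""
        if message.message_id in self.processed_ids:
            return False  # redelivery: delete it from the queue, but do no work
        self.results.append(message.body.upper())  # stand-in for the real work
        self.processed_ids.add(message.message_id)
        return True


consumer = IdempotentConsumer()
deliveries = [
    Message("m-1", "order created"),
    Message("m-1", "order created"),  # the same message delivered a second time
    Message("m-2", "order paid"),
]
for msg in deliveries:
    consumer.handle(msg)
print(consumer.results)  # ['ORDER CREATED', 'ORDER PAID']
```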

Amazon SQS FIFO

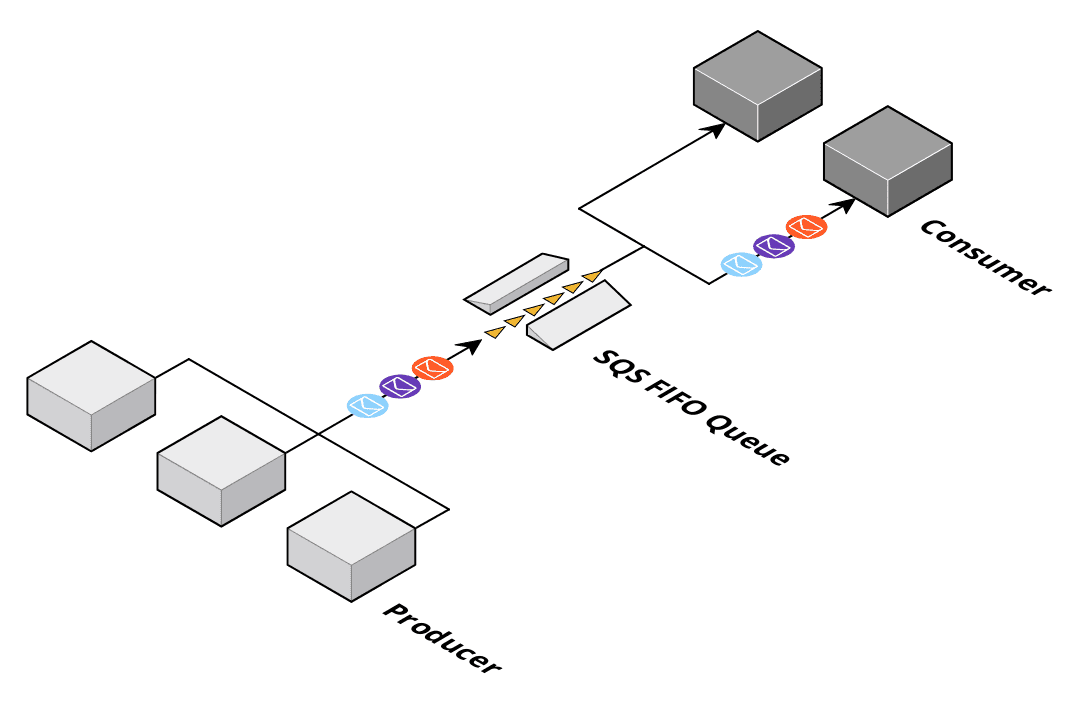

Unlike standard queues, FIFO queues guarantee order. They also provide a way (a message deduplication ID) to ensure that a message is not created twice if a producer retries sending it. The downside: FIFO queues can only handle 300 messages per second, or 3,000 messages per second if you send messages in batches of ten.
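To illustrate how deduplication protects a retrying producer, here is a hypothetical in-memory model (not the SQS API). With the real service, you pass a `MessageDeduplicationId` on `SendMessage` (or enable content-based deduplication), and SQS ignores repeated IDs within a five-minute deduplication interval. The class, method, and clock parameter below are assumptions made for the sketch.

```python
import time

DEDUP_INTERVAL_SECONDS = 5 * 60  # SQS FIFO deduplicates within a five-minute interval


class FifoQueueSketch:
    """Hypothetical in-memory model of a FIFO queue with producer-side deduplication."""

    def __init__(self, clock=time.monotonic):
        self.clock = clock
        self.messages: list[str] = []
        self.first_accepted: dict[str, float] = {}  # deduplication ID -> time first accepted

    def send(self, body: str, dedup_id: str) -> bool:
        """Return True if the message was enqueued, False if dropped as a duplicate.

        The real service reports success in both cases, so a retrying
        producer does not need to tell the difference.
        """
        now = self.clock()
        accepted_at = self.first_accepted.get(dedup_id)
        if accepted_at is not None and now - accepted_at < DEDUP_INTERVAL_SECONDS:
            return False
        self.first_accepted[dedup_id] = now
        self.messages.append(body)
        return True


fake_now = [0.0]
queue = FifoQueueSketch(clock=lambda: fake_now[0])
first = queue.send("order 42 created", dedup_id="order-42")  # original attempt
retry = queue.send("order 42 created", dedup_id="order-42")  # retry after a timeout
fake_now[0] = 301.0  # more than five minutes later
late_retry = queue.send("order 42 created", dedup_id="order-42")
print(first, retry, late_retry, len(queue.messages))  # True False True 2
```

The late retry shows the limit of this mechanism: deduplication only covers retries that happen within the interval.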

To consume messages in order, you have to use a single consumer. If you only need order within a subset of messages, you can define so-called message groups; order is then only guaranteed within a group (e.g., use the customer ID as the message group ID).
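The effect of message groups can be sketched with a toy model. It assumes one simplification: at most one message per group is in flight at a time (the real API sets the group via the `MessageGroupId` parameter and may hand out several messages of a group in one batch). While a group's message is being processed, the next message of that group is held back, but other groups keep flowing, so different consumers can work on different groups in parallel. All names are hypothetical.

```python
from collections import deque


class MessageGroupQueue:
    """Hypothetical toy model of SQS FIFO message groups.

    Order is kept inside a group; different groups progress independently.
    """

    def __init__(self) -> None:
        self.pending: dict[str, deque] = {}
        self.in_flight: dict[str, str] = {}  # group ID -> message being processed

    def send(self, group_id: str, body: str) -> None:
        self.pending.setdefault(group_id, deque()).append(body)

    def receive(self) -> list[tuple[str, str]]:
        """Return the next message of every group that has nothing in flight."""
        batch = []
        for group_id, messages in self.pending.items():
            if messages and group_id not in self.in_flight:
                body = messages.popleft()
                self.in_flight[group_id] = body
                batch.append((group_id, body))
        return batch

    def delete(self, group_id: str) -> None:
        """Acknowledge the in-flight message of a group, unblocking its next message."""
        del self.in_flight[group_id]


queue = MessageGroupQueue()
queue.send("customer-1", "cart created")
queue.send("customer-2", "cart created")
queue.send("customer-1", "checkout")
first = queue.receive()      # [('customer-1', 'cart created'), ('customer-2', 'cart created')]
blocked = queue.receive()    # []: each group waits until its in-flight message is deleted
queue.delete("customer-1")
after_ack = queue.receive()  # [('customer-1', 'checkout')]
```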

Pro tip: Only use FIFO queues if you can ensure that no more than 300 messages are produced per second, even during rare traffic spikes.

Amazon SNS Standard

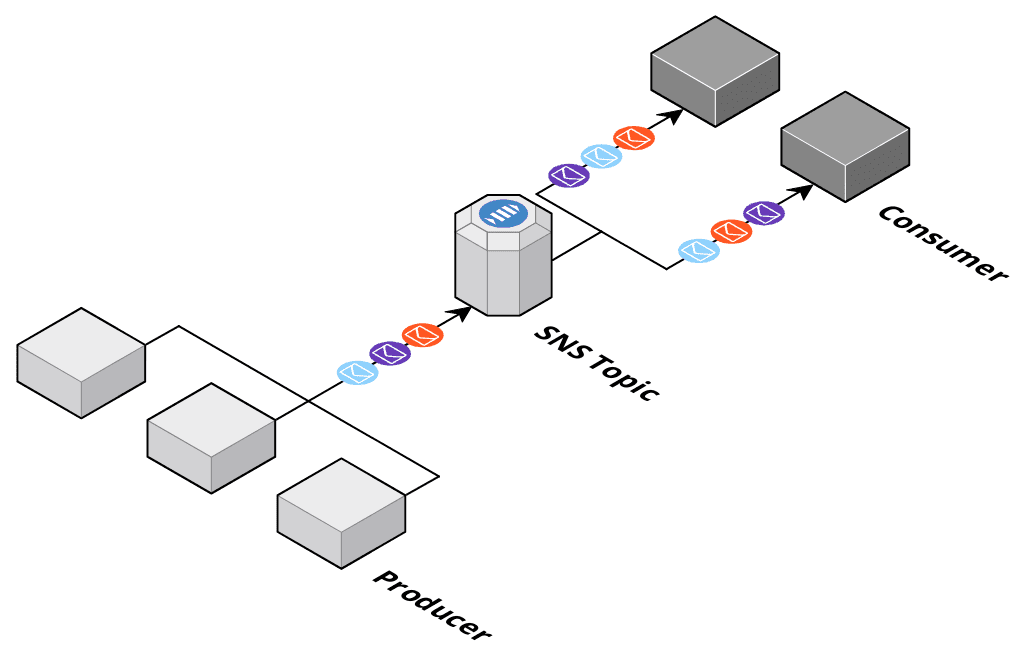

Amazon SNS is a fully managed publish/subscribe system. You send a message to a topic, and all subscribers to that topic receive a copy of the message. SNS is similar to SQS: zero operational effort, but no order guarantee and at-least-once delivery of messages.

SNS can be used to implement message fanout. Keep in mind that if a topic has zero subscribers, the message is sent into a black hole and disappears.
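A toy model of fanout, to make both points concrete: each subscriber gets its own copy, and a message published while nobody is subscribed is simply gone. The `TopicSketch` class is hypothetical; plain Python lists stand in for subscribed SQS queues.

```python
from typing import Callable


class TopicSketch:
    """Hypothetical pub/sub topic: every subscriber receives its own copy."""

    def __init__(self) -> None:
        self.subscribers: list[Callable[[str], None]] = []

    def subscribe(self, handler: Callable[[str], None]) -> None:
        self.subscribers.append(handler)

    def publish(self, message: str) -> int:
        """Deliver to all current subscribers; return the number of copies sent."""
        for handler in self.subscribers:
            handler(message)
        return len(self.subscribers)  # 0 means the message went nowhere


topic = TopicSketch()
lost = topic.publish("order 1 created")  # no subscribers yet: the message disappears
billing_queue: list[str] = []
shipping_queue: list[str] = []
topic.subscribe(billing_queue.append)
topic.subscribe(shipping_queue.append)
copies = topic.publish("order 2 created")
print(lost, copies, billing_queue, shipping_queue)
# 0 2 ['order 2 created'] ['order 2 created']
```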

SNS uses soft limits to throttle message producers. The default limits depend on the region. For example, in eu-west-1, the default limit is 9000 msg/sec. The hard limit is not disclosed.

Amazon SNS FIFO

SNS FIFO is an SNS topic with guaranteed ordering. SNS FIFO also provides a way to ensure that a message is not created twice if a producer retries after a failure. Therefore, exactly-once delivery is possible.

The downsides are:

- FIFO topics can only handle 300 messages per second, or 3,000 messages per second if you send messages in batches of ten, but no more than 10 MB per second.

- The only possible subscriber type for a FIFO topic is an SQS FIFO queue.
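Because the message-count limit and the bandwidth limit apply at the same time, the message size decides which one you hit first. A back-of-the-envelope sketch, assuming the limits listed above, batched publishes, and binary megabytes (1 MB = 1,048,576 bytes); the function name is hypothetical:

```python
MAX_MESSAGES_PER_SECOND = 3000           # batched: 300 calls/s x 10 messages per batch
MAX_BYTES_PER_SECOND = 10 * 1024 * 1024  # 10 MB/s, assuming binary megabytes


def max_fifo_topic_rate(message_size_bytes: int) -> int:
    """Upper bound on messages per second for a FIFO topic with batched publishes."""
    by_bandwidth = MAX_BYTES_PER_SECOND // message_size_bytes
    return min(MAX_MESSAGES_PER_SECOND, by_bandwidth)


print(max_fifo_topic_rate(1024))        # 1 KB messages: 3000
print(max_fifo_topic_rate(256 * 1024))  # 256 KB messages: 40
```

Under these assumptions, messages larger than roughly 3.5 KB make the 10 MB/s limit the bottleneck.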

Amazon EventBridge

Amazon EventBridge (formerly CloudWatch Events) is a fully managed publish/subscribe system. The publisher sends an event to an event bus. To subscribe to events, you create a rule on an event bus. If a published event matches a rule, the event is routed to up to five targets. More than 15 target types are supported (including SQS, SNS, and Lambda). EventBridge's guarantees are similar to those of SNS and SQS: zero operational effort, but no order guarantee and at-least-once delivery of messages.
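Rules select events with an event pattern. The following is a simplified sketch of how the basic form of matching works, not EventBridge's implementation: every field in the pattern must exist in the event, a list in the pattern means "the event value must equal one of these", and a nested object recurses. Content filters (prefix, numeric, etc.) and array-valued event fields are deliberately left out, and the `shop.orders` source is a made-up example.

```python
def matches(pattern: dict, event: dict) -> bool:
    """Simplified EventBridge-style matching with exact values only."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        actual = event[key]
        if isinstance(expected, dict):
            # Nested pattern: the event must contain a matching nested object.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            # List of allowed values: the event value must be one of them.
            return False
    return True


rule_pattern = {"source": ["shop.orders"], "detail": {"status": ["PAID", "SHIPPED"]}}
event_paid = {"source": "shop.orders", "detail-type": "OrderUpdated", "detail": {"status": "PAID"}}
event_created = {"source": "shop.orders", "detail-type": "OrderUpdated", "detail": {"status": "CREATED"}}
print(matches(rule_pattern, event_paid))     # True: routed to the rule's targets
print(matches(rule_pattern, event_created))  # False: without another rule, it disappears
```

Fields the pattern does not mention (here `detail-type`) are ignored, which is why patterns tend to stay short.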

EventBridge can be used to implement message fanout. Keep in mind that if an event does not match any rule, it disappears unnoticed. Optionally, you can archive all events delivered to an event bus and replay archived events at any time.

EventBridge uses soft limits to throttle message producers. The default limits depend on the region. For example, in eu-west-1, the default limit is 10,000 msg/sec. The hard limit is not disclosed.

Amazon Kinesis Data Streams

Amazon Kinesis provides capabilities related to real-time data. I’ll focus primarily on data streams in this section.

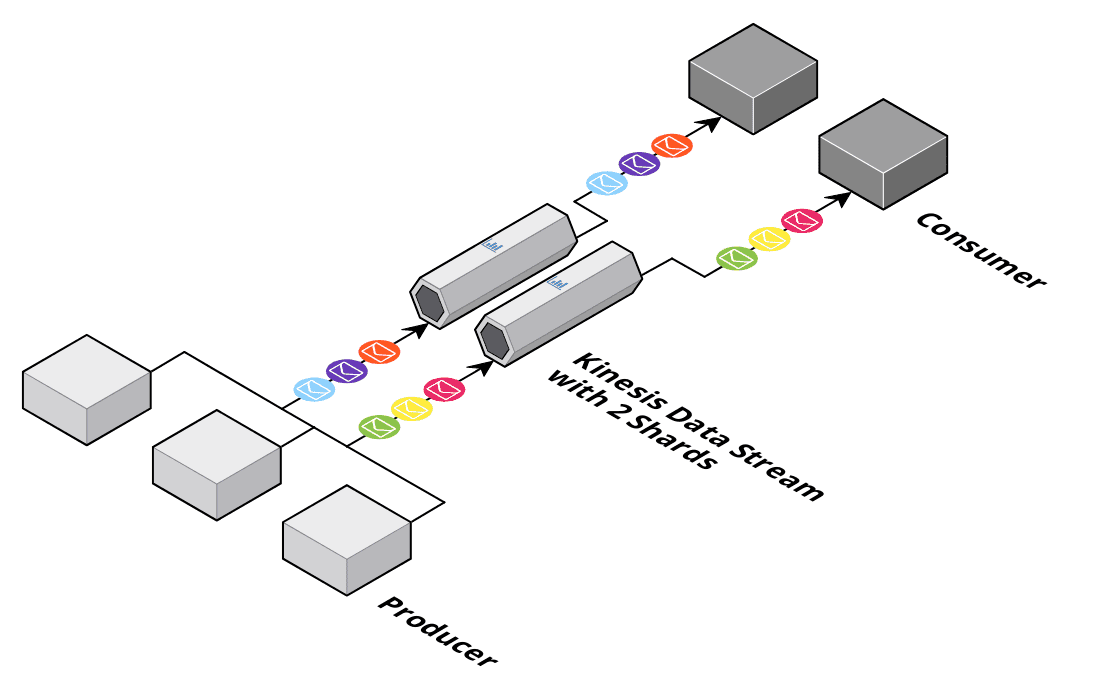

If you send a message to a stream, it is appended to the end of the stream (similar to a queue). The difference comes when you read the messages. A message does not disappear from the stream when you read it. Instead, the consumer reads through the stream and keeps track of its position. You can start reading from the beginning of the stream at any time. The only limitation: Kinesis drops data once it is older than the stream's retention period (24 hours by default, configurable up to 365 days).

Kinesis guarantees to keep messages in order (FIFO), but only within a shard. A shard can ingest up to 1 MB/s or 1,000 messages/s. You have to add and remove shards as needed, and you pay for shards per hour.
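Which shard a message lands in depends on its partition key: Kinesis hashes the key with MD5 into a 128-bit integer, and each shard owns a range of that hash key space. A minimal sketch, assuming the ranges are split evenly across shards (real streams can have uneven ranges after resharding); `shard_for` is a hypothetical helper:

```python
import hashlib

HASH_KEY_SPACE = 2 ** 128  # partition keys are mapped onto a 128-bit hash key space


def shard_for(partition_key: str, shard_count: int) -> int:
    """Index of the shard owning this key, assuming evenly split hash key ranges."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_key = int.from_bytes(digest, "big")  # 0 <= hash_key < 2**128
    return hash_key * shard_count // HASH_KEY_SPACE


# The same key always maps to the same shard, so per-key order is preserved.
assignments = {key: shard_for(key, 4) for key in ["customer-1", "customer-2", "customer-42"]}
print(assignments)
```

Using the customer ID as the partition key is what gives you ordering per customer while still spreading load across shards.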

There is no way for a producer to avoid the resend problem mentioned in the SQS standard queue section: if a producer has to retry sending a message, it could end up in the stream twice. Keep in mind that the consumer has to keep track of its position in the stream while reading, which can also lead to reading a message twice. I use Kinesis data streams in scenarios where SQS standard queues are not an option because I have to rely on some order within a subset of my data (e.g., customer IDs mapped to shards).
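Why position tracking causes duplicates can be shown with a toy consumer that checkpoints every few records. The positions here are plain list indexes rather than Kinesis sequence numbers, and `consume` is a hypothetical function; the Kinesis Client Library handles checkpointing for you, but the trade-off is the same. Anything processed after the last checkpoint is processed again after a crash.

```python
def consume(stream, start, checkpoint_every, crash_after=None):
    """Read records from `start`, checkpointing every `checkpoint_every` records.

    Returns (processed records, position to resume from). If `crash_after` is
    set, the consumer stops after that many records, before its next checkpoint.
    """
    processed = []
    checkpoint = start
    for position in range(start, len(stream)):
        processed.append(stream[position])
        if crash_after is not None and len(processed) == crash_after:
            return processed, checkpoint  # crash: progress since the checkpoint is lost
        if (position + 1 - start) % checkpoint_every == 0:
            checkpoint = position + 1  # next position to read after a restart
    return processed, len(stream)


stream = [f"record-{i}" for i in range(6)]
first_run, resume_at = consume(stream, start=0, checkpoint_every=2, crash_after=3)
second_run, _ = consume(stream, start=resume_at, checkpoint_every=2)
print(first_run + second_run)
# ['record-0', 'record-1', 'record-2', 'record-2', 'record-3', 'record-4', 'record-5']
```

Checkpointing after every record shrinks the window but does not close it, so consumers of a stream should be idempotent as well.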

Amazon MSK

Amazon Managed Streaming for Apache Kafka (MSK) offers Apache Kafka as a Service. You get a managed cluster and can start working with Kafka without the operational complexity. Kafka works in a similar way to Kinesis data streams.

The main benefits:

- Kafka is open source, and you can use it outside of AWS.

- Kafka topics can store your data forever if you wish.

- Kafka can scale horizontally by adding brokers. Topics are divided into partitions, and you will find order only within a partition (the same as with Kinesis shards).

Amazon MQ

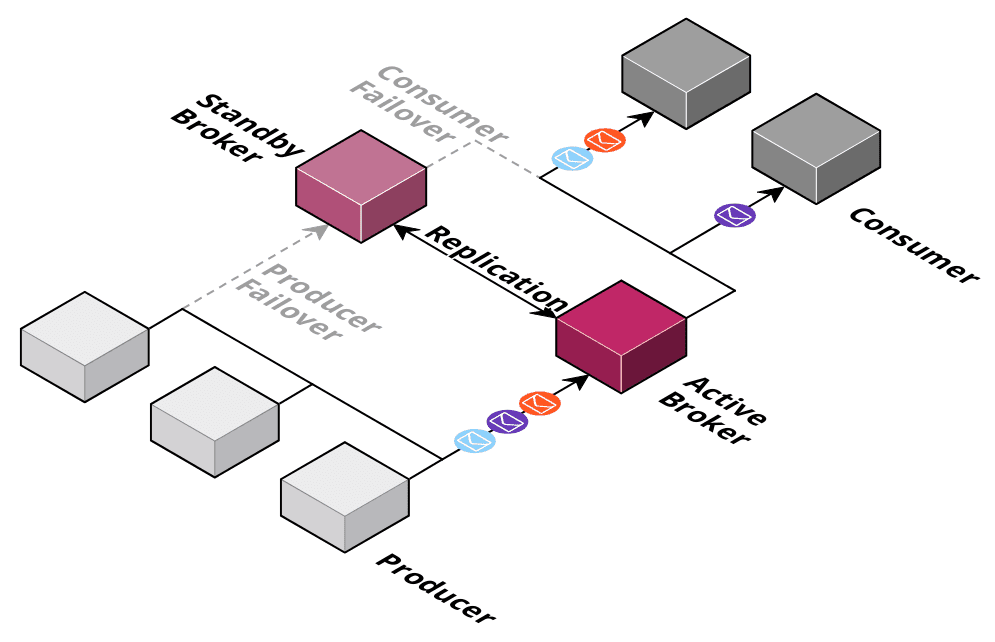

Amazon MQ offers Apache ActiveMQ as a Service. MQ deploys brokers much like RDS deploys databases: two instances run in two availability zones. One of them is active and used by producers and consumers, while all data is replicated to a standby broker. If the active broker fails, the producers and consumers reconnect to the standby broker.

The problem with this architecture is obvious: the throughput of the system is limited to what a single broker (and its storage) can provide. In this case, the storage layer is backed by Amazon EFS, which limits the throughput to 80 messages per second. You can choose other storage layers, but you risk losing messages. Luckily, you can operate ActiveMQ as a network of brokers to increase the throughput. The most significant benefit of Amazon MQ is that ActiveMQ supports a wide range of protocols, such as JMS, AMQP, MQTT, STOMP, and OpenWire.

AWS IoT Core

AWS IoT Core is mostly used for IoT workloads, where a scalable MQTT broker is the foundation of the architecture. The order of messages is not guaranteed. The MQTT QoS levels 0 (at most once) and 1 (at least once) are supported. A nice benefit of IoT Core is that besides the MQTT broker, you can also define rules inside the system to react to messages. For example, you can trigger a Lambda function when a message is published on a topic.
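Subscriptions, and the `FROM` clause of an IoT rule's SQL statement, select messages with MQTT topic filters. A minimal sketch of the standard MQTT wildcard rules: `+` matches exactly one topic level, and `#` (only allowed as the last level) matches any remaining levels, including the parent level itself. The special handling of topics starting with `$` is left out, and the `sensors/...` topics are made-up examples.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT topic filter matching with '+' (one level) and '#' (remaining levels)."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True  # matches everything below, and the parent level itself
        if i >= len(topic_levels):
            return False  # the filter is longer than the topic
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)


print(topic_matches("sensors/+/temperature", "sensors/device-1/temperature"))  # True
print(topic_matches("sensors/+/temperature", "sensors/device-1/humidity"))     # False
print(topic_matches("sensors/#", "sensors/device-1/temperature"))              # True
```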

Summary

There are many options with a broad range of capabilities. I compiled the following table to help you make your selection.

| | Amazon SQS Standard | Amazon SQS FIFO | Amazon SNS Standard | Amazon SNS FIFO | Amazon EventBridge (formerly CloudWatch Events) | Amazon Kinesis Data Streams | Amazon MSK | Amazon MQ | AWS IoT Core |
|---|---|---|---|---|---|---|---|---|---|
| Scaling | nearly unlimited | 3000 msg/sec (batch write) | not disclosed (default soft limit depends on region; e.g., 9000 msg/sec in eu-west-1) | 3000 msg/sec (batch write), but no more than 10 MB/sec | not disclosed (default soft limit depends on region; e.g., 10,000 msg/sec in eu-west-1) | 1 MB/sec or 1000 msg/sec per shard; up to 500 shards; you need to add/remove shards manually | 30 brokers per cluster; you need to add/remove brokers and reassign partitions manually | 80 msg/sec; can be increased with a network of brokers | not disclosed |
| Max. message size | 256 KB | 256 KB | 256 KB | 256 KB | 256 KB | 1 MB | configurable (default 1 MB) | limited by disk space | 128 KB |
| Persistence | up to 14 days | up to 14 days | no | no | archiving is possible | up to 365 days | forever (up to 16384 GiB per broker) | forever (up to 200 GB) | up to 1 hour |
| Replication | Multi-AZ | Multi-AZ | Multi-AZ | Multi-AZ | Multi-AZ | Multi-AZ | Multi-AZ (optional) | Multi-AZ (optional) | Multi-AZ |
| Order guarantee | no | yes | no | yes | no | within a shard | within a partition | yes | no |
| Delivery guarantee | at least once | exactly once | at least once | exactly once | at least once | at least once | | exactly once; supports distributed (XA) transactions | at least once / at most once |
| Pricing | per message | per message | per message | per message | per message | per shard hour | per broker hour + provisioned storage | per broker hour + used storage | per message + connection duration |
| Protocols | AWS REST API | AWS REST API | AWS REST API | AWS REST API | AWS REST API | AWS REST API | Kafka protocol | JMS, AMQP, MQTT, STOMP, OpenWire | MQTT, AWS REST API |
| AWS Integrations | Lambda | Lambda | Lambda, SQS, webhook | SQS FIFO | Lambda, SQS, SNS, and many more | Lambda | Lambda | Lambda | Lambda, SQS, SNS, and many more |
| License | AWS only | AWS only | AWS only | AWS only | AWS only | AWS only | open source (Apache Kafka) | open source (Apache ActiveMQ) | AWS only |
| Encryption at rest | yes | yes | yes | yes | yes | yes | yes | yes | no |
| Encryption in transit | yes | yes | yes | yes | yes | yes | yes | yes | yes |
