Sharing data volumes between machines: EFS

Many legacy applications store state in files on disk, so they can’t use Amazon S3, an object store, without changes to the application. Block storage might be an option, but a block volume can’t be accessed from multiple machines in parallel. You therefore need a way to share files between virtual machines. With Elastic File System (EFS), you can share data between multiple EC2 instances, and your data is replicated across multiple Availability Zones (AZs). EFS is based on the NFSv4.1 protocol, so you can mount it like any other file system. In this article, you’ll learn the basics of EFS.

Note: EFS only works with Linux. At this time, EFS isn’t supported by Windows EC2 instances.

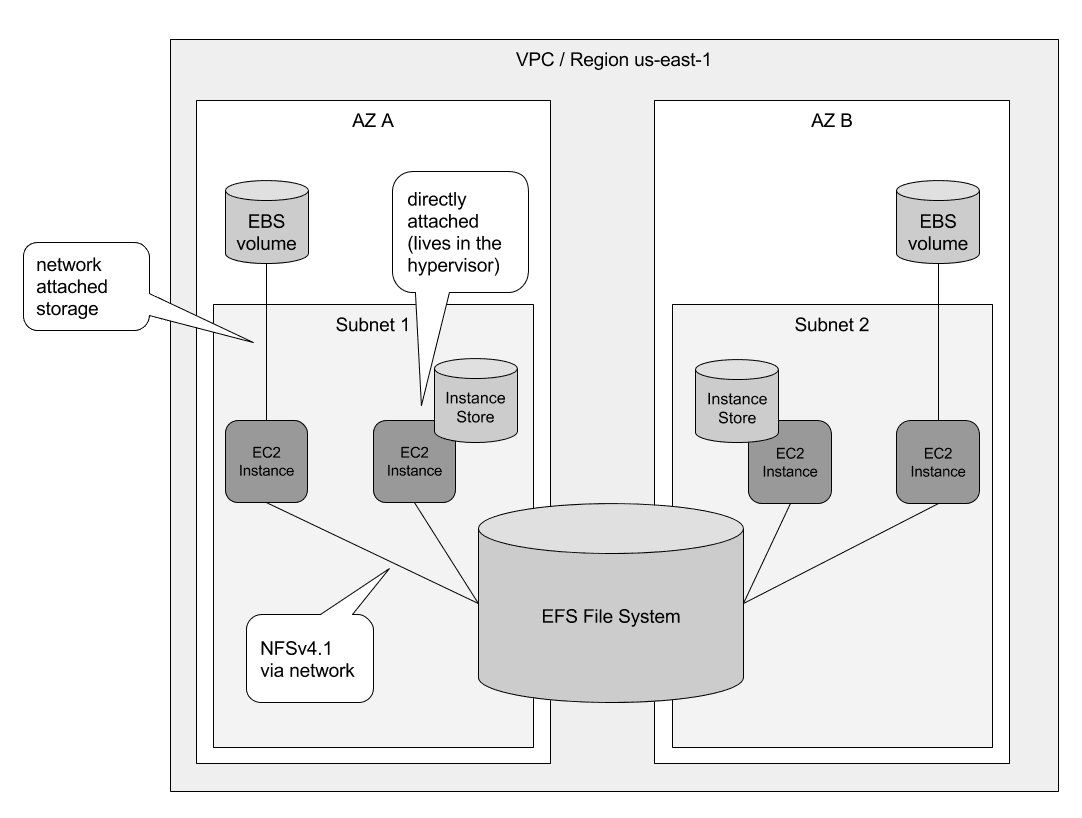

Let’s have a closer look at how EFS works compared to Elastic Block Store (EBS) and Instance Store. An EBS volume is tied to a data center, also called an Availability Zone (AZ), and can only be attached over the network to a single EC2 instance from the same data center. EBS volumes are usually used as root volumes, which contain the operating system, or to store the state of relational database systems. An Instance Store consists of a hard drive directly attached to the hardware that the virtual machine is running on. Instance Store should be regarded as ephemeral storage and is only used for caching or for NoSQL databases with embedded data replication. In contrast, an EFS file system can be used by multiple EC2 instances from different data centers in parallel. Additionally, the data of the EFS file system is replicated among multiple data centers and remains available even if a whole data center suffers from an outage, which isn’t true for EBS and Instance Store. The following figure shows the differences.

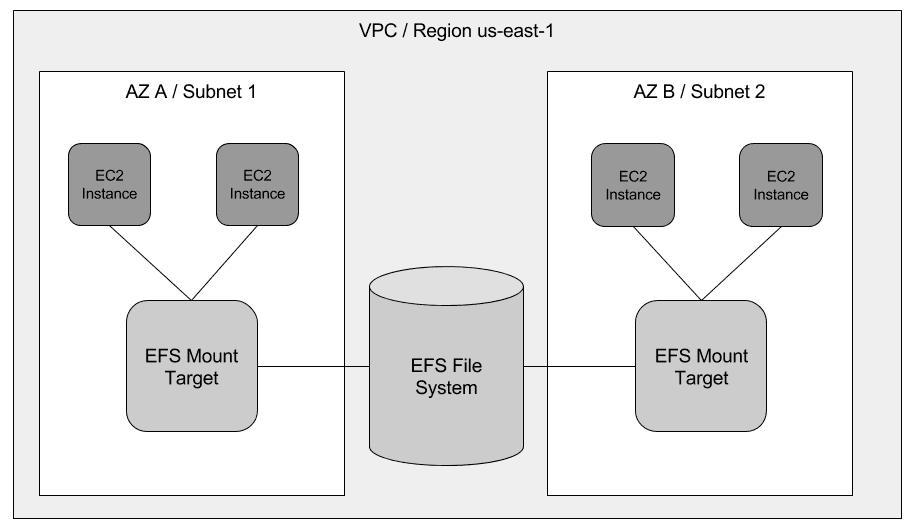

Now that you know about the differences, it’s time to have a closer look at EFS. Two main components require your attention:

- EFS File System: Stores your data

- EFS Mount Target: Makes your data accessible

This article is excerpted from chapter 10 of Amazon Web Services in Action, Second Edition.

Save 37% off [Amazon Web Services in Action, Second Edition](http://bit.ly/amazon-web-services-in-action-2nd-edition) with code `fccwittig` at [manning.com](http://bit.ly/amazon-web-services-in-action-2nd-edition).

The EFS File System is the resource that stores your data in an AWS region, but you can’t access the file system directly. To access it, you must create an EFS Mount Target in a subnet. The mount target provides a network endpoint that you can use to mount the file system on an EC2 instance via NFSv4.1. The EC2 instance should run in the same Availability Zone as the mount target it connects to, so you typically create one mount target in a subnet of each Availability Zone you use. The following figure demonstrates how to access an EFS File System from EC2 instances running in multiple subnets.
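To make the mount step more concrete, here is a minimal sketch that assembles the NFSv4.1 mount command for a file system. It assumes the mount target is reachable through the file system’s DNS name, which follows the pattern `<file-system-id>.efs.<region>.amazonaws.com`. The file system ID `fs-12345678`, the region `us-east-1`, the mount point `/mnt/efs`, and both helper function names are made up for illustration. The NFS options are commonly recommended values, not something prescribed by this article.

```shell
# Build the DNS name of an EFS file system.
# $1 = file system ID (placeholder), $2 = AWS region (placeholder)
efs_dns_name() {
  printf '%s.efs.%s.amazonaws.com\n' "$1" "$2"
}

# Print (but do not run) the NFSv4.1 mount command.
# $1 = file system ID, $2 = AWS region, $3 = local mount point
efs_mount_command() {
  # Assumed options: NFS version 4.1, 1 MiB read/write sizes, hard mount.
  opts='nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2'
  printf 'sudo mount -t nfs4 -o %s %s:/ %s\n' \
    "$opts" "$(efs_dns_name "$1" "$2")" "$3"
}

# Example with a made-up file system ID:
efs_mount_command fs-12345678 us-east-1 /mnt/efs
```

The sketch only prints the command so that you can review it first. Running the printed command requires an NFS client on the EC2 instance and a security group that allows NFS traffic (TCP port 2049) to the mount target.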

Equipped with the EFS theory about file systems and mount targets, you can now apply your knowledge to solve a real problem. The Linux operating system is a multiuser system: many users can store data and run programs isolated from each other. Each Linux user can have a home directory, which is usually stored under `/home/$username`. If the user name is michael, the home directory is `/home/michael`, and only the michael user is allowed to read and write in `/home/michael`. The `ls -d -l /home/*` command lists all home directories.

    $ ls -d -l /home/*

If you’re using multiple EC2 instances, your users will have a separate home folder on each EC2 instance. If a Linux user uploads a file on one EC2 instance, she can’t access the file on another EC2 instance. To solve this problem, you’ll create an EFS File System and mount it on each EC2 instance under `/home`. The home directories are then shared across all your EC2 instances, and users feel at home no matter which virtual machine they log in to.
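One way to mount the file system under `/home` on every instance, and to have it remounted after a reboot, is an `/etc/fstab` entry. The following is a minimal sketch under the same assumptions as before: the DNS name pattern `<file-system-id>.efs.<region>.amazonaws.com`, a made-up file system ID `fs-12345678`, the region `us-east-1`, and a hypothetical helper name. The `_netdev` option tells the system to wait for the network before mounting.

```shell
# Print an /etc/fstab entry that mounts an EFS file system via NFSv4.1.
# $1 = file system ID (placeholder), $2 = AWS region (placeholder),
# $3 = mount point
efs_fstab_entry() {
  # Assumed options; _netdev delays the mount until the network is up.
  opts='nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2,_netdev'
  printf '%s.efs.%s.amazonaws.com:/ %s nfs4 %s 0 0\n' "$1" "$2" "$3" "$opts"
}

# Example with a made-up file system ID:
efs_fstab_entry fs-12345678 us-east-1 /home

# To apply it as root, append the line to /etc/fstab and mount everything:
#   efs_fstab_entry fs-12345678 us-east-1 /home >> /etc/fstab && mount -a
```

Keep in mind that mounting a file system over `/home` hides whatever is already stored there on the instance, so existing home directories would need to be copied to the EFS file system first.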

That’s all for this article. If you want to know more, check out the 10th chapter of Amazon Web Services in Action, Second Edition.