AWS Security Primer

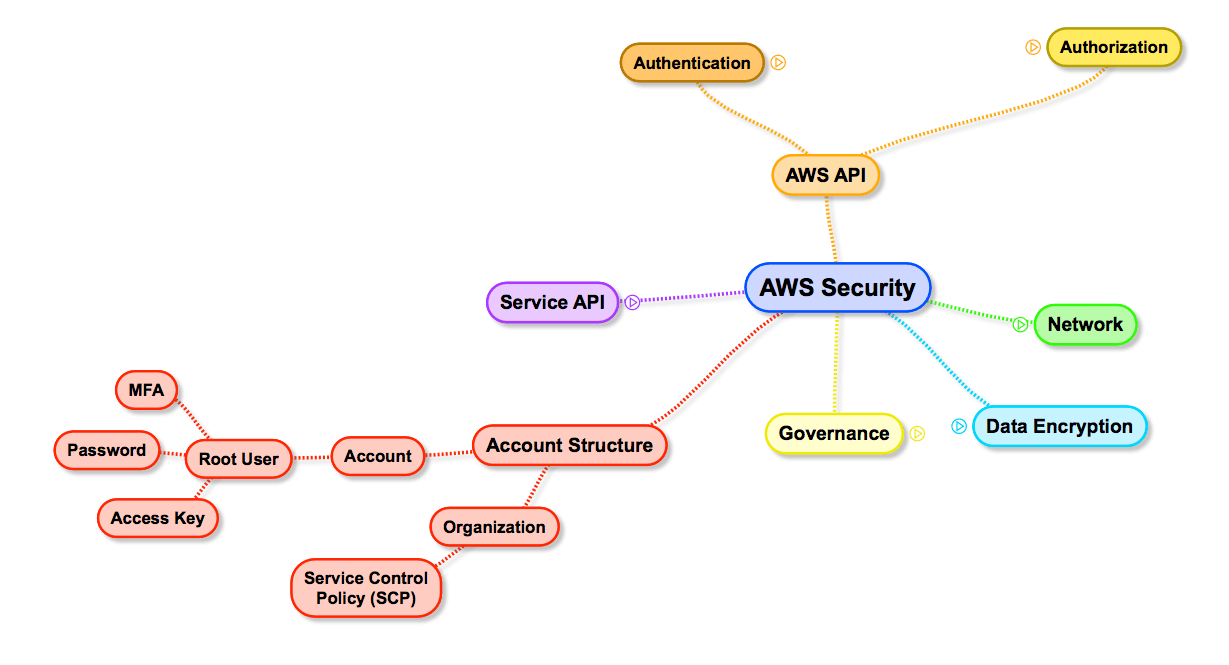

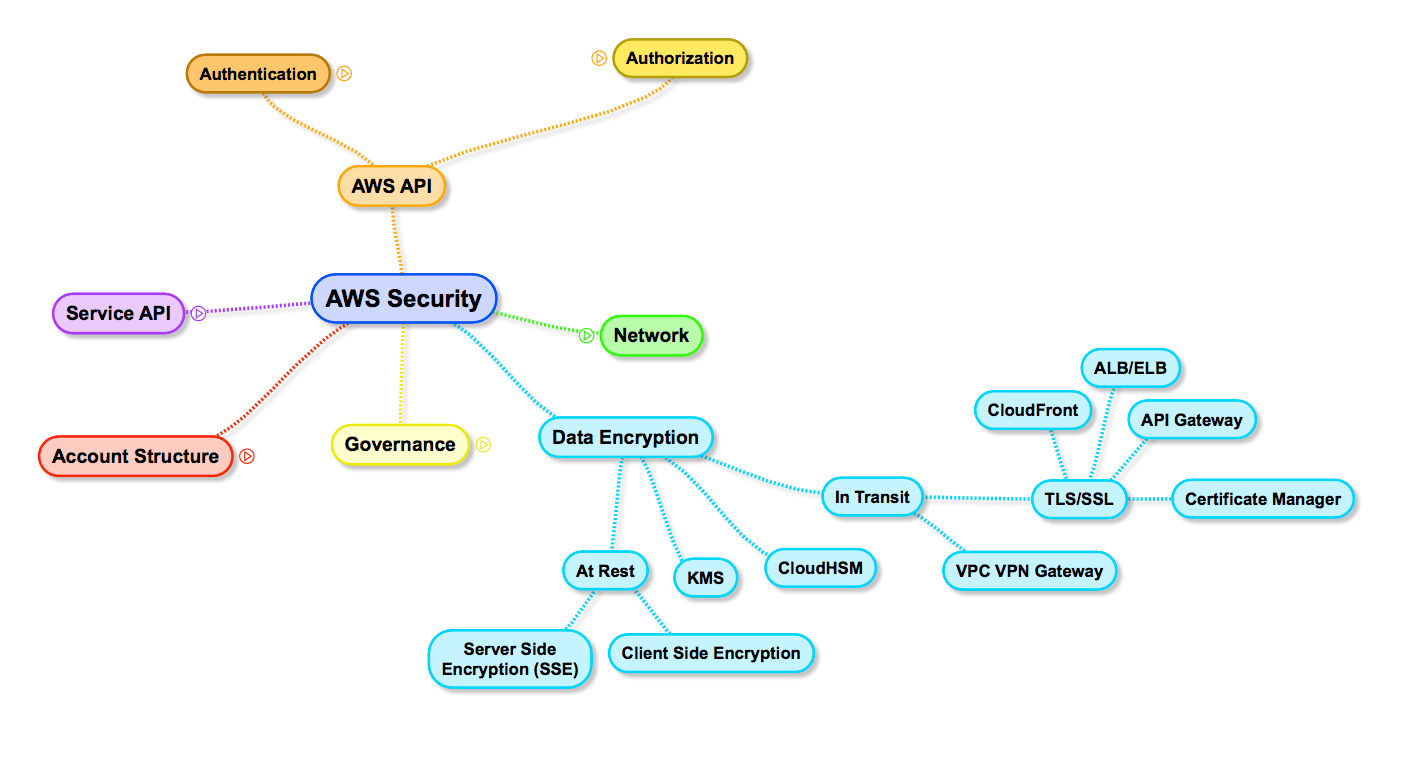

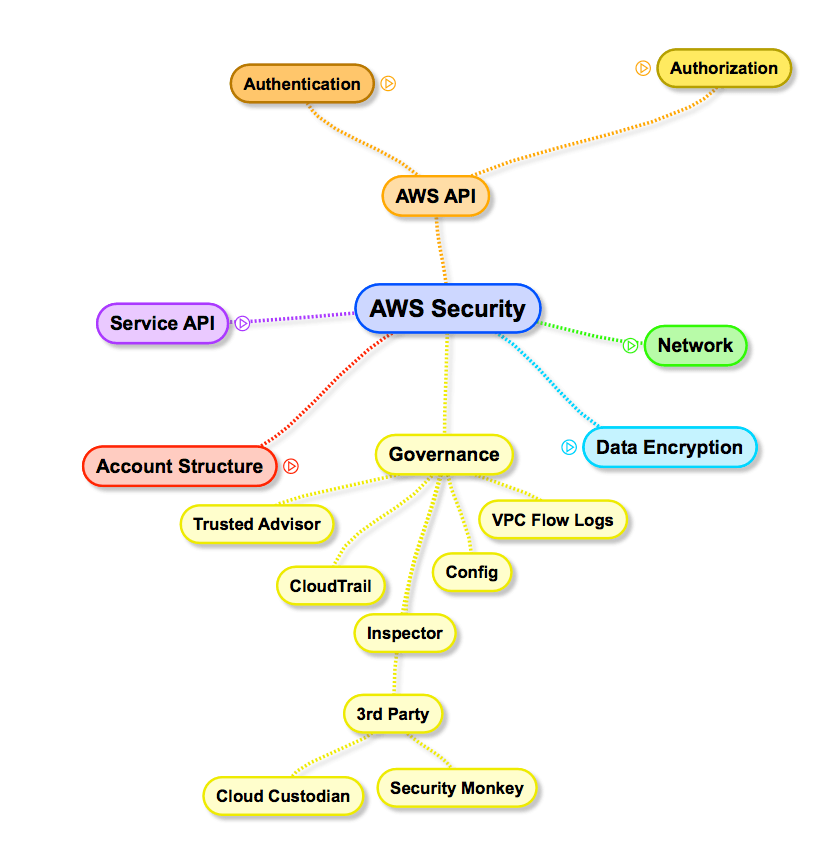

I was preparing some AWS security-related training. Soon, I realized that this topic is too huge to fit into my brain. So I structured my thoughts in a mind map [1]. Within a couple of minutes [2] I came up with this:

What is your first reaction? Mine was pretty much surprise:

Let me summarize how AWS Security works to make sure you are not surprised one day.

This post received over 200 points on Hacker News.

Account Structure

In 2015, I wrote a blog post about why Your single AWS Account is a serious risk. Since then, AWS released Organizations. An Organization is a bucket for multiple AWS Accounts. The key thing to consider is Service Control Policies (SCPs). SCPs are evaluated before IAM policies. So you can restrict usage of services within an AWS account and no IAM policy in the world can change that.
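To make the "SCP first, IAM second" idea concrete, here is a minimal sketch. It assumes a heavily simplified model in which each policy is just a list of allowed action patterns, and the function names are hypothetical (this is not an AWS API). A request only succeeds if both layers allow it.

```python
from fnmatch import fnmatchcase


def matches(action: str, patterns: list[str]) -> bool:
    """Return True if the action matches any pattern (e.g. "s3:*")."""
    return any(fnmatchcase(action.lower(), p.lower()) for p in patterns)


def is_allowed(action: str, scp_allow: list[str], iam_allow: list[str]) -> bool:
    """Simplified model: the SCP acts as a filter in front of the IAM policy."""
    return matches(action, scp_allow) and matches(action, iam_allow)


# Assumed example: the Organization only permits EC2 and S3 in this account,
# while the IAM policy inside the account is administrator-like.
scp_allow = ["ec2:*", "s3:*"]
iam_allow = ["*"]

print(is_allowed("s3:GetObject", scp_allow, iam_allow))    # True
print(is_allowed("iam:CreateUser", scp_allow, iam_allow))  # False
```

Even the wildcard IAM policy cannot get past the SCP, which is the whole point.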

The security best practices for the root user still apply:

- Don’t use your root user

- Activate MFA

- Don’t use the Access Key

AWS API

I needed to separate this topic into two: Authentication (who you are) and Authorization (what you are allowed to do).

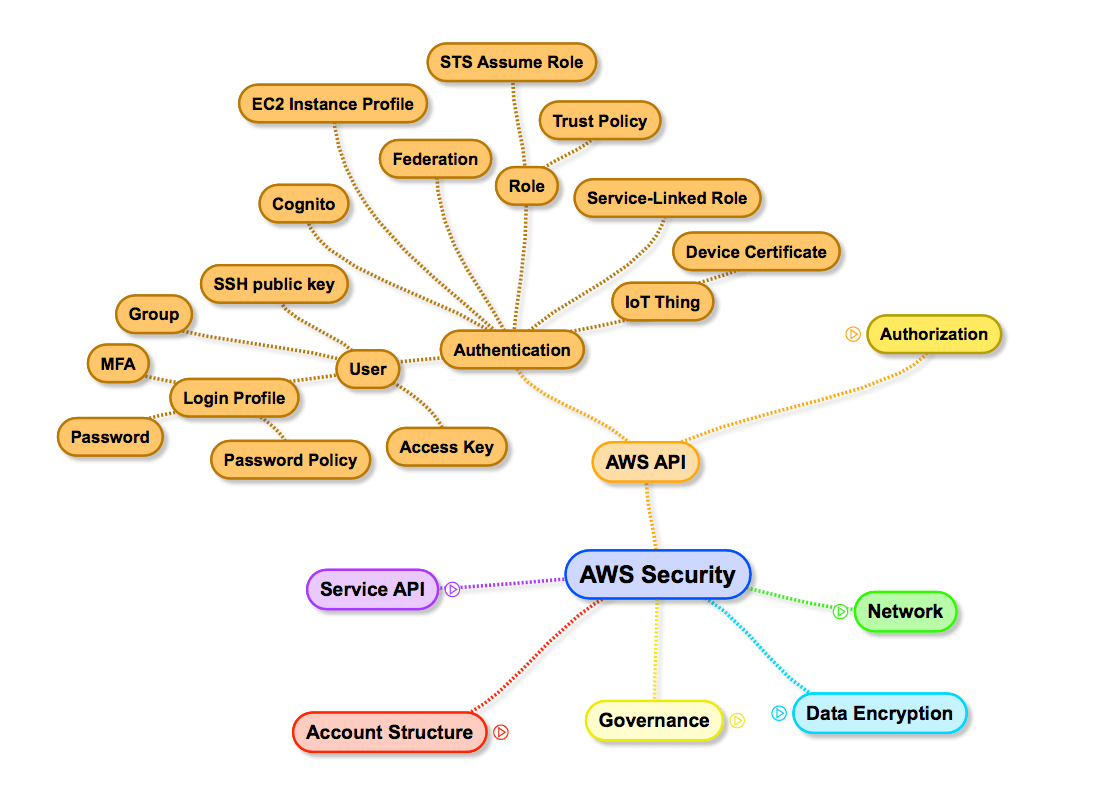

Authentication

The thing you are familiar with is the IAM User. If you don’t use the root user (which you should not), you are most likely using AWS with IAM Users. An IAM User can (this is optional!) log in to the Management Console using a Login Profile. As you know, the password should not be as trivial as your birthday, which you can enforce with a password policy. It’s also regarded as good practice to activate MFA for all IAM Users with a Login Profile. If you want to use the AWS CLI, you also need an Access Key, which you download to your laptop. This is very sensitive information, and it’s good practice to rotate this Access Key at regular intervals. If you are using AWS CodeCommit (managed Git repo), then you can also upload your public SSH key for authentication purposes. That is a lot of stuff, and we have not yet talked about what the user is allowed to do. This will be covered in the next section.
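Rotating Access Keys is easier if something tells you which keys are overdue. The following is only a sketch: the 90-day interval is my assumption, the key IDs are made up, and the records merely mimic the shape of the access key metadata that IAM returns (`AccessKeyId`, `Status`, `CreateDate`). No AWS call is made here.

```python
from datetime import datetime, timedelta, timezone

MAX_KEY_AGE = timedelta(days=90)  # assumed rotation interval, pick your own


def keys_due_for_rotation(keys: list[dict], now: datetime) -> list[str]:
    """Return the IDs of active Access Keys older than MAX_KEY_AGE."""
    return [
        key["AccessKeyId"]
        for key in keys
        if key["Status"] == "Active" and now - key["CreateDate"] > MAX_KEY_AGE
    ]


# Hypothetical sample data, not real keys.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
keys = [
    {"UserName": "alice", "AccessKeyId": "AKIAEXAMPLEOLDKEY", "Status": "Active",
     "CreateDate": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"UserName": "alice", "AccessKeyId": "AKIAEXAMPLENEWKEY", "Status": "Active",
     "CreateDate": datetime(2024, 5, 15, tzinfo=timezone.utc)},
    {"UserName": "bob", "AccessKeyId": "AKIAEXAMPLEINACTIVE", "Status": "Inactive",
     "CreateDate": datetime(2023, 1, 1, tzinfo=timezone.utc)},
]

print(keys_due_for_rotation(keys, now))  # ['AKIAEXAMPLEOLDKEY']
```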

But let’s first look at other points on the map.

- An IAM Group is not an identity (you cannot reference the group later); it just groups IAM Users. You can manage Authorization for the whole group instead of individual users, which is also a good practice.

- Cognito is niche. You can manage your own pools of users (not IAM Users) or connect with Facebook, Twitter, … and all those users can (depends on what you authorize) get access to parts (or the whole) of your AWS account.

- An EC2 Instance Profile is used to authenticate an EC2 Instance. This is handy if your EC2 instances want to communicate with the AWS API. No need for Access Keys on your EC2 instances.

- Federation can make your life easier if you have many users that want to use AWS in your company. You can use an external Identity Provider such as your AD to authenticate users.

- An IAM Role can only be assumed. You cannot log in. The cool thing is that not only IAM Users can assume roles. AWS services can also assume roles to work on your behalf. Even roles can assume roles, which is amazing if you plan to complicate your setup. It is important to keep in mind that a role controls who can assume it in its Trust Policy.

- To make things simpler, AWS introduced Service-Linked Roles. Some AWS services manage parts of your AWS account on your behalf. E.g. EMR launches EC2 instances to run Hadoop for you. Before Service-Linked Roles, you had to create an IAM Role with the proper Trust Policy for AWS to work in your account. Service-Linked Roles come pre-configured and are managed by AWS. You only have to install them once.

- Also kind of niche is IoT Things. Things like your thermostat authenticate with a certificate. A thing can (depends on what you authorize) get access to parts (or the whole) of your AWS account.

This is a summary of who can get access to your AWS account.
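The Trust Policy of an IAM Role is the piece that is easiest to get wrong, so here is a small sketch of what such a document looks like. The helper name is hypothetical, and the account ID is the usual documentation placeholder. The first policy lets the EC2 service assume the role (as with an Instance Profile), the second one lets principals of another AWS account assume it.

```python
import json


def trust_policy_for(principal: dict) -> dict:
    """Build a Trust Policy that lets the given principal assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": principal,
                "Action": "sts:AssumeRole",
            }
        ],
    }


# An AWS service assumes the role on your behalf.
ec2_trust = trust_policy_for({"Service": "ec2.amazonaws.com"})

# Another AWS account (placeholder ID) is trusted to assume the role.
cross_account_trust = trust_policy_for({"AWS": "arn:aws:iam::111122223333:root"})

print(json.dumps(ec2_trust, indent=2))
```

Note that the Trust Policy only answers who may assume the role. What the role is allowed to do afterwards is a separate question.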

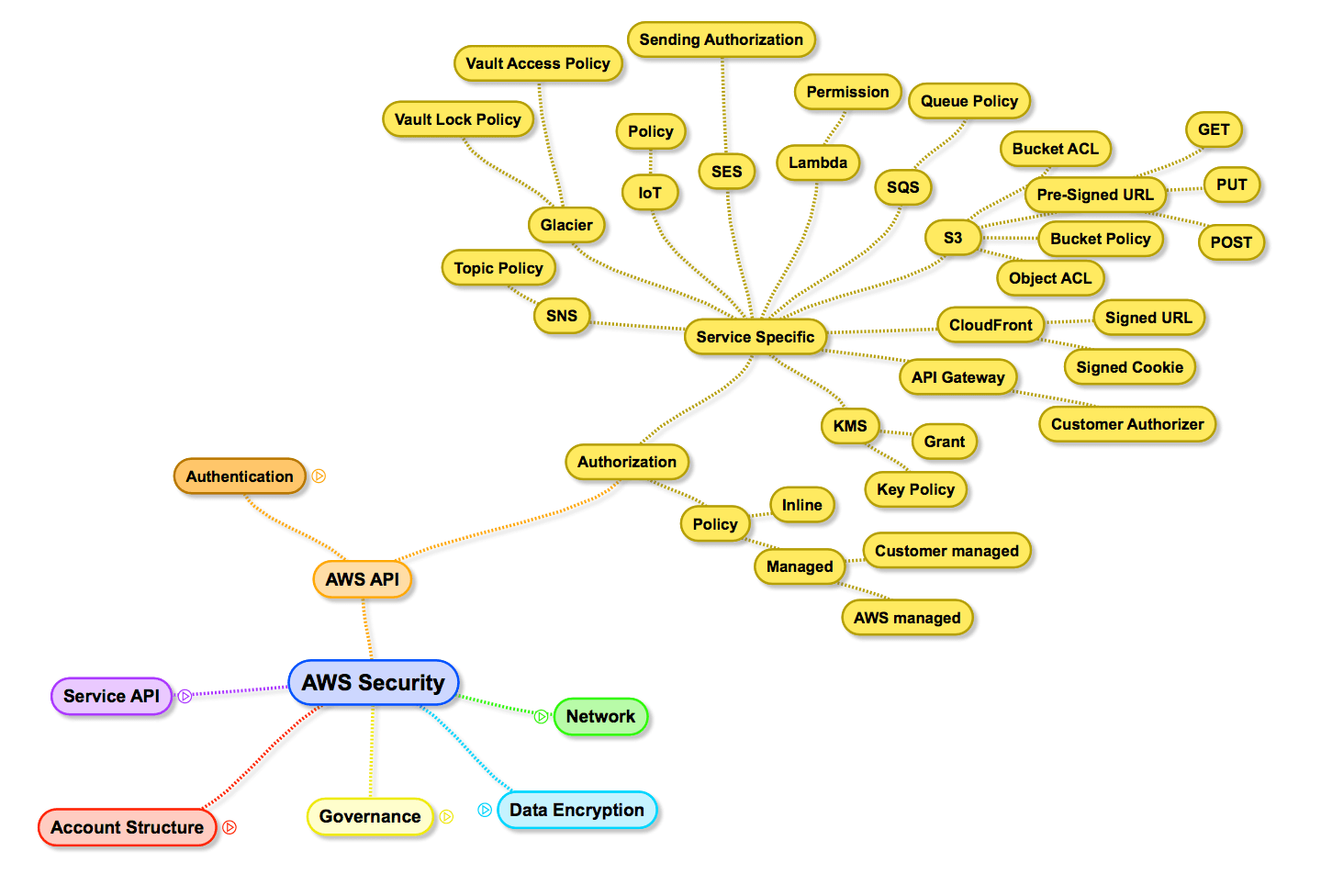

Authorization

Once you, or a thing, or a machine, … have authenticated successfully, you now want to do something. That’s where IAM Policies come into play. A policy can be defined inline (IAM User, IAM Group, IAM Role) or can be a separate entity (Managed Policy) that can be attached to IAM Users, IAM Groups, or IAM Roles. You can either create your own Managed Policies or use the predefined policies managed by AWS. You will most likely not follow the principle of least privilege if you use AWS managed policies. The whole policy topic seems easy to understand. The only problem is defining those policies.
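To illustrate the least privilege point, the sketch below compares a broad policy (in the style of a full-access policy) with a narrow one. The bucket name and the `wildcard_statements` helper are hypothetical, and the check is deliberately crude: it only flags Allow statements with a wildcard action or a `*` resource.

```python
def as_list(value) -> list:
    """Policy fields may be a single string or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def wildcard_statements(policy: dict) -> list[dict]:
    """Return Allow statements that use a wildcard action or resource "*"."""
    flagged = []
    for stmt in as_list(policy["Statement"]):
        if stmt.get("Effect") != "Allow":
            continue
        actions = as_list(stmt.get("Action", []))
        resources = as_list(stmt.get("Resource", []))
        broad_action = any(a == "*" or a.endswith(":*") for a in actions)
        if broad_action or "*" in resources:
            flagged.append(stmt)
    return flagged


broad_policy = {
    "Version": "2012-10-17",
    "Statement": [{"Effect": "Allow", "Action": "s3:*", "Resource": "*"}],
}

narrow_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-bucket/reports/*",
        }
    ],
}

print(len(wildcard_statements(broad_policy)))   # 1
print(len(wildcard_statements(narrow_policy)))  # 0
```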

Besides IAM Policies, some services use additional authorization mechanisms. The services with their own authorization mechanism are:

- SNS and SQS: A Topic Policy or Queue Policy can open a topic/queue to things like IAM User, IAM Role, AWS Account, or just all of us. This can be handy, but you should think twice before doing so. Most likely, you can use the assume role approach to achieve the same!

- Glacier: Glacier comes with two types of policies, one to control access, and the other to control modification of archives. The latter is important if you have to enforce that data cannot be changed for legal reasons.

- IoT: As mentioned, IoT Things can authenticate, and the Policy determines what they can do in your AWS account.

- Lambda: A Lambda Function can be opened for invocation by a Permission. This is needed if you want a “Cron” to trigger your Lambda because this “Cron” is AWS. So AWS needs permissions to invoke your function. Most likely, you can use the assume role approach to achieve the same!

- S3: S3 is the king of alternative ways for authorization.

  - You can set ACLs on the bucket and object level to give read/write permissions. I recommend not using them. There is no simple way to change all ACLs on the object level. And it is very hard to reason about permissions if every object can have different ones.

  - You can also use a Bucket Policy to give read/write permissions to your bucket/objects. This makes sense if your bucket is public and you want to give read access to the world.

  - You can also generate pre-signed URLs to give temporary permissions to read or upload objects. This makes sense if you want users to directly upload files from their browser.

- KMS: Key Policies are the primary way to control access to customer master keys (CMKs) in KMS. On top of that, you can use IAM policies to authorize. The second way to control access is Grants. With a Grant, you can allow another AWS principal (e.g. an AWS account) to use a CMK with some restrictions. You could also implement this with the Key Policy, but Grants are more flexible to control.
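As an example of such a resource-level mechanism, this is roughly what a Bucket Policy for a public, world-readable bucket looks like. It is a sketch: the bucket name and both helper names are hypothetical, and `is_public` is a naive check that ignores conditions.

```python
def public_read_policy(bucket_name: str) -> dict:
    """Build a Bucket Policy that lets anyone read every object in the bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PublicReadGetObject",
                "Effect": "Allow",
                "Principal": "*",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


def is_public(policy: dict) -> bool:
    """Naive check: does any Allow statement apply to everyone?"""
    return any(
        stmt.get("Effect") == "Allow" and stmt.get("Principal") in ("*", {"AWS": "*"})
        for stmt in policy["Statement"]
    )


policy = public_read_policy("example-website-bucket")
print(policy["Statement"][0]["Resource"])  # arn:aws:s3:::example-website-bucket/*
print(is_public(policy))                   # True
```

A check like this is handy the other way around too: run it against buckets that are not supposed to be public.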

Service API

Some services come with their own API. Databases, like MySQL, come with their own authentication and authorization mechanism. You usually authenticate with username and password and the database user is authorized to do specific things (e.g. not DROP TABLE). Keep in mind that the AWS API to manage RDS still uses the auth mechanism of the AWS API.

If you want to administer your Linux or Windows virtual machine, you will use SSH or RDP. For Linux machines, AWS can deploy up to one public SSH key to your instance. You can then log in via SSH with the private key on your machine. For Windows machines, AWS encrypts the password with the public key, and you can use the private key to decrypt the password. Using the username and password, you can log in via RDP.

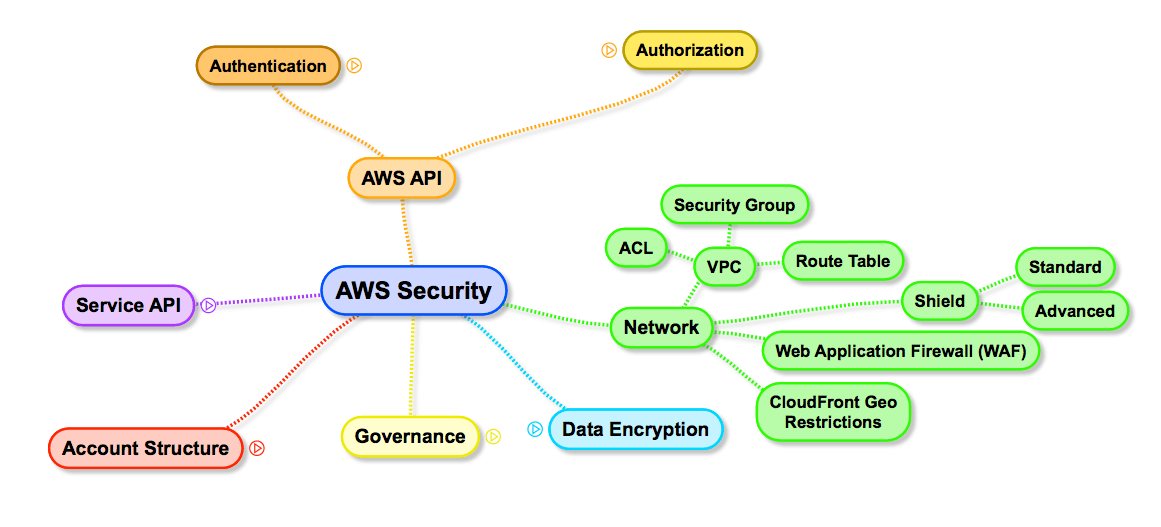

Network

The network is where many AWS customers spend most of their time when thinking about security. But as you can see, it is much easier to understand than AWS API access. You create a VPC (no EC2-classic coverage here!) where you define your subnets. Subnets within a VPC can talk to each other (they are routed).

The recommended way of controlling network access is Security Groups. A Security Group is attached to ENIs (network interfaces) and controls inbound and outbound traffic. By default, Security Groups do not allow any inbound traffic but allow all outbound traffic. Besides identifying traffic by IP address ranges, you can also identify traffic by the target/origin Security Groups. Security Groups are stateful: If an inbound packet is allowed, the response is also allowed and vice versa.

There are also network ACLs to control inbound and outbound traffic for subnets. By default, ACLs are configured to allow all inbound and outbound traffic. ACLs are stateless, so you will most likely open all high ports for response traffic. I recommend not using ACLs unless you have a reason.
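The difference between stateful and stateless is easier to see in code. This is a toy model under my own assumptions: rules only match on port ranges, the function names are hypothetical, and the ACL shown is a custom restrictive one rather than the allow-all default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    from_port: int
    to_port: int

    def matches(self, port: int) -> bool:
        return self.from_port <= port <= self.to_port


def allowed(rules: list[Rule], port: int) -> bool:
    return any(rule.matches(port) for rule in rules)


def security_group_allows_response(inbound: list[Rule], request_port: int) -> bool:
    """Stateful: if the request was allowed in, the response is allowed out."""
    return allowed(inbound, request_port)


def network_acl_allows_response(
    inbound: list[Rule], outbound: list[Rule], request_port: int, client_port: int
) -> bool:
    """Stateless: the response must match an outbound rule on its own."""
    return allowed(inbound, request_port) and allowed(outbound, client_port)


https_in = [Rule(443, 443)]
client_port = 50000  # ephemeral port chosen by the client

print(security_group_allows_response(https_in, 443))                                 # True
print(network_acl_allows_response(https_in, [], 443, client_port))                   # False
print(network_acl_allows_response(https_in, [Rule(1024, 65535)], 443, client_port))  # True
```

The last line is the "open all high ports" situation: without that outbound range, the response never leaves the subnet.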

Finally, you can control who can talk to whom on the network by configuring routes. You may want to connect your office network with your VPC. You can use a VPN connection to do so. The routing then determines which Subnets can send packets to your office and vice versa.

Data Encryption

Almost all services that store data (EBS, S3, SQS, …) support encryption at rest, which means that AWS will encrypt the data on disk. This is handy if you (or your regulator) fear that another AWS customer could get access to your data because of the shared nature of the environment. E.g. if you return an EBS volume to AWS because you no longer need it, the underlying hard disk will be reused. AWS wipes the data, but there is a very tiny chance that someone with big resources can reconstruct parts of the data. If you don’t trust AWS, you had better encrypt the data before you send it to the service or not use AWS at all.

Spoiler: I’m not an expert on Data Encryption!

When data is encrypted/decrypted, a key/secret is needed. Those keys are managed by the Key Management Service (KMS). The way this works is that KMS owns the customer master key (CMK) and issues data keys that are then used by e.g. EBS to encrypt/decrypt the data. The data key is stored together with the encrypted data, itself encrypted. When EBS wants to decrypt the data again (because you want to read it), it sends the encrypted data key to KMS, where the master key can decrypt the data key. The decrypted data key is returned to EBS where it is used to decrypt the data. The key point is that the CMK never leaves KMS. If you delete the CMK, the encrypted data is garbage because it can no longer be decrypted.
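The flow described above is often called envelope encryption, and it can be sketched in a few lines. Big caveat: `ToyKms` and `toy_cipher` are hypothetical stand-ins I made up for illustration. The cipher is a simple XOR keystream and is NOT secure; a real setup would call KMS and use a proper cipher such as AES.

```python
import hashlib
import secrets


def toy_cipher(key: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256 based keystream. Toy only, NOT secure.
    Applying it twice with the same key returns the original data."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


class ToyKms:
    """Stand-in for KMS: the master key never leaves this object."""

    def __init__(self) -> None:
        self._master_key = secrets.token_bytes(32)

    def generate_data_key(self) -> tuple[bytes, bytes]:
        """Return (plaintext data key, data key encrypted with the master key)."""
        plaintext_key = secrets.token_bytes(32)
        return plaintext_key, toy_cipher(self._master_key, plaintext_key)

    def decrypt(self, encrypted_data_key: bytes) -> bytes:
        return toy_cipher(self._master_key, encrypted_data_key)


def encrypt_volume(kms: ToyKms, data: bytes) -> dict:
    plaintext_key, encrypted_key = kms.generate_data_key()
    # Only the encrypted data key is stored next to the ciphertext.
    return {"ciphertext": toy_cipher(plaintext_key, data),
            "encrypted_data_key": encrypted_key}


def decrypt_volume(kms: ToyKms, stored: dict) -> bytes:
    plaintext_key = kms.decrypt(stored["encrypted_data_key"])
    return toy_cipher(plaintext_key, stored["ciphertext"])


kms = ToyKms()
stored = encrypt_volume(kms, b"my very secret data")
print(decrypt_volume(kms, stored))  # b'my very secret data'
```

If you throw away the `ToyKms` instance (the equivalent of deleting the CMK), the stored data key can no longer be decrypted, and the ciphertext is garbage.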

If you don’t want AWS to own or know your master key, you can use a magic box called a Hardware Security Module to manage your master key. CloudHSM provides these boxes to you as a service for ~$20,000 per year. The trick is that this magic hardware box audits access and destroys itself if someone tries to open it. The magic box also handles the encryption/decryption part on its own hardware.

To make things easier again, data that is in transit also wants to be encrypted, especially if this data travels through the Internet. All AWS services that can expose HTTP endpoints (ELB, ALB, CloudFront, API Gateway) also support TLS/SSL encryption with certificates managed by the Certificate Manager, which renews certificates automatically at no cost.

If you want to connect your office with your VPC, you can use a VPN tunnel to encrypt the data.

The AWS API also encrypts traffic using TLS/SSL.

The open question is: What happens if you talk to your database from an EC2 instance? Or if you talk to your Application Servers? You should at least know which traffic is (not) encrypted and why.

Governance

Documentation is king. You write down all your security-related rules in Confluence. The first point in your list: Activate MFA. One year later, you realize that half of your IAM Users still have not activated MFA. Maybe you need something better?

- AWS provides a service to record all (okay, that was marketing speak), or rather most, of the API calls using CloudTrail. With this tool, you can know exactly who did what and when. You can also use Lambda to analyze this in near real time (5 minutes) and e.g. send an alert if a user deactivates MFA.

- AWS Config creates snapshots of all your AWS configuration where you can define rules that must be met.

- Trusted Advisor checks if you follow best practices. Make sure that you receive those emails and review the findings every week!

- VPC Flow Logs can record network flows in your VPC. You could analyze them to: Improve Security (Groups) using VPC Flow Logs & AWS Config
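The MFA alert mentioned for CloudTrail could look roughly like this. It is a sketch under assumptions: the function name and the sample records are hypothetical, and I only rely on a few CloudTrail record fields (`eventSource`, `eventName`, `eventTime`, `userIdentity.arn`). In a real setup a Lambda function would feed the records in and send the alerts somewhere useful.

```python
def mfa_deactivation_alerts(records: list[dict]) -> list[str]:
    """Return one alert message per record where an MFA device was deactivated."""
    alerts = []
    for record in records:
        if (
            record.get("eventSource") == "iam.amazonaws.com"
            and record.get("eventName") == "DeactivateMFADevice"
        ):
            who = record.get("userIdentity", {}).get("arn", "unknown")
            alerts.append(f"{who} deactivated MFA at {record.get('eventTime')}")
    return alerts


# Hypothetical sample records in the shape of CloudTrail events.
records = [
    {
        "eventSource": "iam.amazonaws.com",
        "eventName": "DeactivateMFADevice",
        "eventTime": "2024-06-01T12:00:00Z",
        "userIdentity": {"arn": "arn:aws:iam::111122223333:user/alice"},
    },
    {
        "eventSource": "s3.amazonaws.com",
        "eventName": "PutObject",
        "eventTime": "2024-06-01T12:01:00Z",
        "userIdentity": {"arn": "arn:aws:iam::111122223333:user/bob"},
    },
]

for alert in mfa_deactivation_alerts(records):
    print(alert)
```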

Summary

This was a high-level overview of the AWS Security surface. From my experience, customers spend most of their time securing their network because they know how to do this. I recommend that you shift your resources a little bit to make sure you get the AWS API Security right. With a single S3 Bucket Policy you can open your confidential data to the world.

I missed a few points. Some of them are known:

- Patching the operating system (AMI)

- Patching the application’s dependencies

- Application internals (e.g. input validation)

But I’m pretty confident that I missed others. Contact me if you have feedback!

1. Mind map created with SimpleMind

2. Many people reviewed and improved the mind map (in no particular order): @flomotlik, @s0enke, @sstatik, @LiatBenZur, @JaniKarh, @evannuil, @jobrandstetter, and @ngmarley.